Abstract

In pursuit of proactive safety management in construction, accurate and real-time recognition of workers' cognitive states is essential for crafting targeted interventions to minimize safety risks. Unlike conventional manual and qualitative methods, electroencephalogram (EEG) offers a reliable and objective solution for cognitive state recognition. Notably, efforts that integrate EEG with deep learning have exhibited significant advancements. Nevertheless, certain constraints, such as experimental costs and limited participants, have caused a scarcity of high-quality data, which has impeded performance in recognition tasks. Although transfer learning has demonstrated its capability in addressing this challenge, it has rarely been explored for EEG-based cognitive state recognition, which remains a significant research gap. Therefore, customizing pre-trained deep learning models for these tasks would be beneficial. This study aims to develop pre-trained models to advance future studies in this domain through transfer learning, encompassing: 1) extracting extensive and accurately labeled EEG data from the DEAP dataset, 2) selecting appropriate network architectures and implementing pre-trained model development, and 3) collecting EEG data for mental fatigue recognition and evaluating model effectiveness. Rigorous evaluation suggests a substantial improvement in model performance, with test accuracy increasing from 63.19% to 92.29% by leveraging the pre-trained convolutional neural network (CNN)-long short-term memory (LSTM) model. In conclusion, this study contributes to enhancing safety management for construction workers through EEG by providing validated pre-trained models to address challenges of data scarcity. Future research may advance by exploring additional network architectures, increasing sample size, and considering more specific recognition tasks for model evaluation.

Keywords

1. Introduction

The construction sector is known for its notable safety risks, stemming from its inherent complexity, unpredictability, and often chaotic work environments[1]. A significant component of these risks is the unsafe behaviors of construction workers, which account for over 70% of construction accidents that lead to injuries and even fatalities[2]. These behaviors often arise from cognitive factors, such as insufficient vigilance[3] and excessive fatigue[4]. Given the continuous and repetitive nature of construction tasks, a decline in cognitive state is particularly concerning[5]. The cognitive process behind unsafe behavior emphasizes the critical role of cognitive state in the occurrence of construction accidents[6]. Therefore, recognizing and evaluating workers' cognitive states is essential for implementing interventions, thereby facilitating proactive safety management.

In order to implement cognitive state recognition for workers, previous efforts have typically used qualitative methods such as questionnaires or self-reports[7]. These methods, however, tend to be subjective and imprecise. Recently, electroencephalogram (EEG) has emerged as a reliable means to detect construction workers' cognitive states[8], such as work stress[9] and mental fatigue[10]. Prior research has developed time-domain EEG features to reflect specific cognitive states, such as using the Engagement index to measure workers' mental workload[11]. Recently, data-driven assessment incorporating machine learning algorithms has represented a significant advancement and gained attention[9]. Nonetheless, the reliance on hand-crafted features may limit sufficient representativeness and, consequently, impair model performance[11].

Integrating deep learning into EEG analysis has been extensively explored, since it autonomously extracts features from original data[12]. This advanced technology effectively addresses the constraints of EEG features when utilizing machine learning, and enhances model performance[13]. However, the stringent need for high-quality EEG data for model training presents significant challenges. Due to experimental costs and other constraints, prior explorations for EEG-based cognitive state recognition for construction workers typically involve insufficient participants, averaging around 15, with a median of 12[11]. Moreover, previous data labeling has heavily relied on subjective tools, such as questionnaires based on National Aeronautics and Space Administration Task Load Index[1], with only a few adopting objective physiological indicators like skin temperature[14]. Notably, accurate data labeling methods, combined with sufficient sample size, are crucial for ensuring data quality and model performance[11].

The data scarcity for EEG- and deep learning-based cognitive state recognition of construction workers has become a significant limitation. This scarcity severely hinders training efficiency and model performance in recognition tasks[15]. However, there are abundant publicly available datasets for EEG-based cognitive research, such as Dataset for Emotion Analysis using Physiological Signals (DEAP)[16], SJTU Emotion EEG Dataset (SEED)[17], Brain-Computer Interface (BCI), PhysioNet EEG Motor Movement/Imagery Dataset[18], and others. These datasets hold potential to address challenges posed by data scarcity through transfer learning, which leverages established domain knowledge from source tasks to improve model performance in relevant tasks[19]. Transfer learning, as a sophisticated technology to enhance model performance in the context of data scarcity[20], has been extensively investigated in construction, improving tasks like object recognition[15] and text classification[21]. However, its application to EEG-based recognition of workers' cognitive states remains unexplored. Unlike image-related studies that adopt pre-trained models like EfficientNet[22] and VGG[23], there is a lack of appropriate pre-trained models for EEG-based tasks in construction, given the notable differences between EEG and image data formats. Therefore, developing pre-trained models based on established EEG datasets can help bridge this research gap, laying a solid foundation for various cognitive state recognition tasks in construction using EEG and transfer learning.

This study aims to develop pre-trained deep learning models applicable to various EEG-based cognitive state recognition tasks in construction. The development process includes the following steps: 1) extracting extensive and accurately labeled data from the DEAP dataset; and 2) determining suitable deep learning network architectures tailored to the extracted data and implementing model training. Following model development, an indoor experiment is conducted to collect EEG data for model evaluation. A rigorous analysis compares performance metrics, including Accuracy, Macro-Precision, Macro-Recall, and Macro-F1, to validate the effectiveness of the pre-trained models in enhancing performance for specific tasks like mental fatigue recognition.

2. Methodology

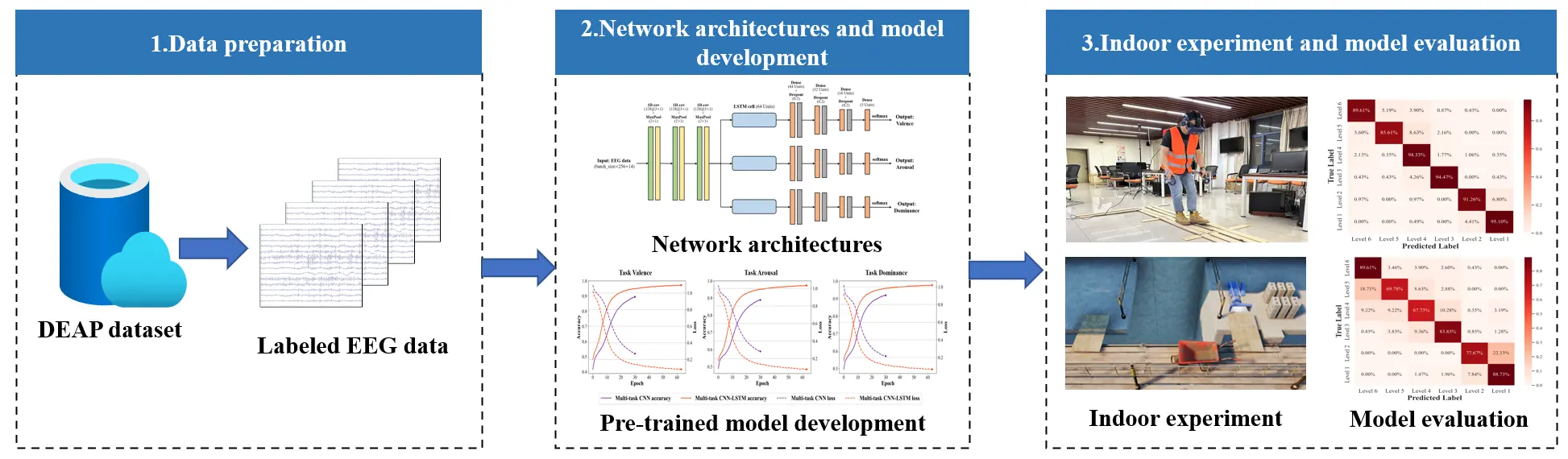

This section provides details on the preparation of EEG data, the architectures of considered networks, the parameters and settings for model training, as well as the design of the indoor experiment and the model evaluation process. Figure 1 presents a comprehensive overview of the research framework, encompassing data preparation, network architecture, and the model development process, along with the implementation of indoor experiments and the evaluation of model performance.

2.1 Data preparation

EEG data from the DEAP dataset are utilized to develop pre-trained models in this study[16]. Compared to other publicly available databases such as SEED, BCI, and PhysioNet EEG Motor Movement/Imagery Dataset, the well-established DEAP dataset encompasses EEG data collected from a sufficient number of participants, and offers verified labels for recognition tasks like valence, arousal, and dominance[24]. The high-quality EEG data provided by this dataset greatly facilitates the development of pre-trained models that capture useful feature representations. Moreover, these data labels align well with the valence-arousal-dominance (VAD) model[25], which describes various cognitive states, such as alertness, anxiety, and dejection, through combinations of these three dimensions.

The EEG data from the DEAP dataset have been preprocessed by downsampling to 128 Hz, removing electrooculography artifacts, and applying band-pass frequency filtering (from 4.0 to 45.0 Hz) to ensure the purity of data. The resulting data shape is 32 × 40 × 32 × 7,680, where the dimensions correspond to the number of participants, trials, EEG channels, and data points, respectively. EEG data from 14 channels have been extracted to match the channels of the Emotiv Epoc device used to collect the experimental EEG data for model evaluation (refer to Section 2.4). Subsequently, each trial's data (size: 14 × 7,680) have been segmented into 119 parts using a sliding window technique, featuring a 0.5-second step and a 2-second window length[26]. This windowing technique yields continuous yet partially overlapping data segments, and is intended to minimize data leakage by preventing future information from influencing the model during training. The numerical ratings on the three DEAP dimensions have accordingly been transformed into three discrete levels, based on the ranges of 1-3 for low, 4-6 for medium, and 7-9 for high[27].
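The segmentation and label discretization described above can be sketched as follows. This is a minimal illustration rather than the code used in the study: the function names are hypothetical, the cut points of 3.5 and 6.5 for non-integer ratings are an assumption, and no padding is applied at trial boundaries. Under these assumptions a 60-second trial yields 117 windows; the 119 segments reported above would imply a different boundary treatment, which the text does not specify.

```python
import numpy as np

FS = 128          # sampling rate after DEAP preprocessing (Hz)
WIN = 2 * FS      # 2-second window -> 256 samples
STEP = FS // 2    # 0.5-second step -> 64 samples


def segment_trial(trial, win=WIN, step=STEP):
    """Split one trial (channels x samples) into overlapping windows.

    Returns an array of shape (n_windows, channels, win). No padding is
    applied, so trailing samples that do not fill a window are dropped.
    """
    n_samples = trial.shape[1]
    starts = np.arange(0, n_samples - win + 1, step)
    return np.stack([trial[:, s:s + win] for s in starts])


def discretize_rating(rating):
    """Map 1-9 ratings to 0 (low), 1 (medium), or 2 (high).

    Assumption: ratings that fall between the integer ranges 1-3, 4-6,
    and 7-9 are assigned using cut points at 3.5 and 6.5.
    """
    return np.digitize(rating, bins=[3.5, 6.5])


# One synthetic 14-channel, 60-second trial stands in for real DEAP data.
segments = segment_trial(np.zeros((14, 7680)))
levels = discretize_rating(np.array([1, 3, 4, 6, 7, 9]))
```

Each window inherits the discretized labels of the trial it was cut from, so one trial contributes many training samples that share the same three labels.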

2.2 Network architectures

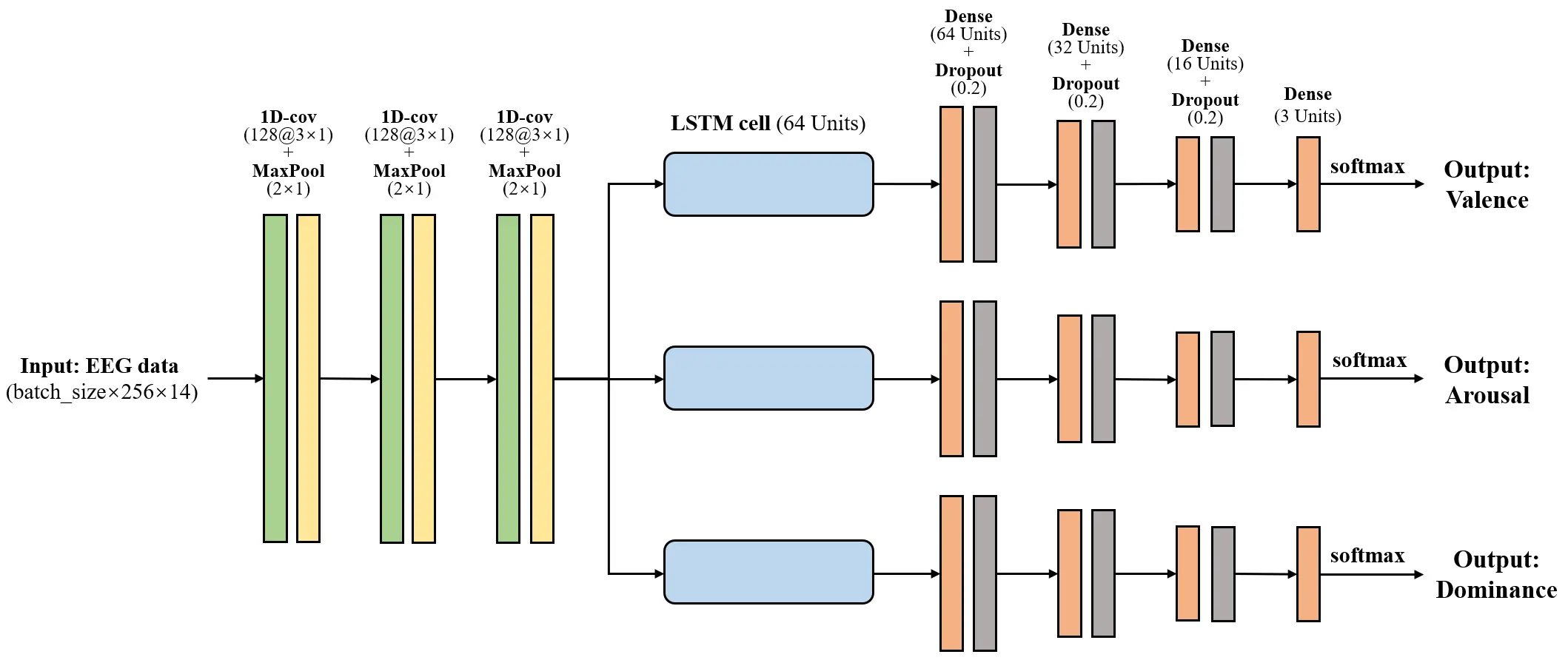

This paper focuses on using a multi-task CNN-LSTM architecture for pre-trained model development. The CNN layers extract features from the input data and contribute to all relevant tasks[28]. The LSTM layer captures long-term dependencies in the data series for each individual task[29]. A dense layer, along with a dropout layer, enhances the robustness of the model and mitigates potential overfitting. Finally, an output layer with three units is set up, utilizing a softmax activation function. Considering the three-dimensional data labels provided by the DEAP dataset, as well as the VAD model for cognitive state recognition, multi-task networks are primarily employed for model development. Unlike single-task learning, architectures based on multi-task learning leverage shared critical information from various recognition tasks and enhance generalizability[30]. The considered multi-task network architectures are described as follows.

Multi-task CNN-LSTM: Figure 2 illustrates the proposed architecture, which comprises multiple components. It begins with three sets of 1D convolutional layers (each consisting of 128 units), followed by max pooling layers with a pooling size of 2 × 1. These layers are then followed by three parallel LSTM cells, each containing 64 units. After each LSTM cell, three sets of dense layers (64, 32, and 16 units) are implemented, each followed by a dropout layer (rate: 0.2). Finally, a dense layer with three units and employing a softmax activation function produces the output for each recognition task.

Figure 2. Multi-task CNN-LSTM network architecture. CNN: convolutional neural network; LSTM: long short-term memory network; EEG: electroencephalogram.
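A hedged Keras sketch of the multi-task CNN-LSTM described above is given below. The layer counts, unit sizes, pooling size, dropout rate, and softmax outputs follow the text; the kernel size of 3, the `same` padding, the ReLU activations, the (time, channels) input layout, and all layer names are assumptions introduced for illustration only.

```python
import numpy as np
from tensorflow import keras
from tensorflow.keras import layers

TASKS = ("valence", "arousal", "dominance")


def build_multitask_cnn_lstm(n_samples=256, n_channels=14, kernel_size=3):
    """Shared CNN trunk followed by one LSTM/dense branch per task.

    kernel_size, padding, and the ReLU activations are assumptions; the
    text fixes only filters, units, pooling size, and dropout rate.
    """
    inputs = keras.Input(shape=(n_samples, n_channels), name="eeg")
    x = inputs
    # Three Conv1D (128 filters) + max pooling (size 2) blocks, shared by all tasks.
    for _ in range(3):
        x = layers.Conv1D(128, kernel_size, padding="same", activation="relu")(x)
        x = layers.MaxPooling1D(pool_size=2)(x)

    outputs = []
    for task in TASKS:
        # One LSTM cell (64 units) per task, then dense layers of 64, 32, 16 units.
        h = layers.LSTM(64, name=f"{task}_lstm")(x)
        for units in (64, 32, 16):
            h = layers.Dense(units, activation="relu", name=f"{task}_dense{units}")(h)
            h = layers.Dropout(0.2)(h)
        # Three-unit softmax output (low / medium / high) for this task.
        outputs.append(layers.Dense(3, activation="softmax", name=task)(h))
    return keras.Model(inputs, outputs, name="multitask_cnn_lstm")


model = build_multitask_cnn_lstm()
# A batch of two 2-second, 14-channel segments yields three (2, 3) probability arrays.
predictions = model(np.zeros((2, 256, 14), dtype="float32"))
```

Dropping the LSTM line (and flattening or pooling the CNN output instead) would give a multi-task CNN variant; how the CNN output is reduced in that case is not stated in the text.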

Multi-task CNN: This network is derived from the multi-task CNN-LSTM architecture by removing the LSTM cells. It retains the same CNN layers, including three sets of 1D convolutional layers and max pooling layers. Following these layers are three sets of dense layers (64, 32, and 16 units), each followed by a dropout layer (rate: 0.2). Finally, an output layer with the same configuration is included.

To demonstrate the performance superiority achieved by multi-task architectures, the single-task versions of the above networks are tested.

Single-task CNN-LSTM: This architecture retains the same CNN layers as its multi-task version but includes only a single LSTM cell. The subsequent layers consist of dense layers (64, 32, and 16 units), each followed by a dropout layer (rate: 0.2). Finally, an output layer with three units and a softmax activation function generates the final predictions.

Single-task CNN: This network maintains the same CNN layers as the multi-task version but excludes LSTM cells. It features dense layers (64, 32, and 16 units), each followed by a dropout layer (rate: 0.2). Finally, this architecture incorporates an output layer identical to the one used in the single-task CNN-LSTM.

2.3 Development of pre-trained models

The EEG dataset is divided into three subsets: training, validation, and testing, with proportions of 80%, 10%, and 10%, respectively. To ensure consistent data partitioning, a random state of 2022 is set. The labels for the three recognition tasks (valence, arousal, and dominance) are one-hot encoded. For model training, a batch size of 256 is selected, with a learning rate of 0.001, and the Adam optimizer is employed to facilitate rapid convergence. The model is trained from scratch for up to 100 epochs, with early stopping based on validation loss and a patience of 12 epochs. Training is conducted on a high-performance GPU (NVIDIA RTX A4000) using TensorFlow, with CUDA framework support to accelerate GPU processing. The development process is carried out using Python. To evaluate the performance of the trained models, test accuracy for the recognition tasks of valence, arousal, and dominance is computed and compared, enabling the identification of the best-performing architecture for future enhancements.
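The partitioning and encoding steps above can be sketched as follows. The use of scikit-learn's `train_test_split`, the two-stage split, and the helper names are assumptions made for illustration; the text specifies only the 80/10/10 proportions, the random state of 2022, and the hyperparameter values collected in `TRAIN_CONFIG`.

```python
import numpy as np
from sklearn.model_selection import train_test_split

SEED = 2022  # random state reported in the text

# Hyperparameters reported in the text, gathered in one place.
TRAIN_CONFIG = {
    "batch_size": 256,
    "learning_rate": 1e-3,
    "epochs": 100,
    "early_stopping_patience": 12,
}


def split_80_10_10(x, y, seed=SEED):
    """Split into train/validation/test sets with an 80/10/10 ratio."""
    x_train, x_rest, y_train, y_rest = train_test_split(
        x, y, test_size=0.2, random_state=seed)
    # Halve the remaining 20% into validation and test sets.
    x_val, x_test, y_val, y_test = train_test_split(
        x_rest, y_rest, test_size=0.5, random_state=seed)
    return (x_train, y_train), (x_val, y_val), (x_test, y_test)


def one_hot(labels, n_classes=3):
    """One-hot encode integer class labels (0 = low, 1 = medium, 2 = high)."""
    return np.eye(n_classes, dtype=np.float32)[np.asarray(labels)]


# Synthetic stand-in: 1,000 segments with one integer label per task.
x_demo = np.zeros((1000, 256, 14), dtype=np.float32)
y_demo = np.stack([np.arange(1000) % 3] * 3, axis=1)
train, val, test = split_80_10_10(x_demo, y_demo)
valence_targets = one_hot(train[1][:, 0])
```

In a Keras training loop, the values in `TRAIN_CONFIG` would typically be passed to the Adam optimizer, to `model.fit`, and to `tf.keras.callbacks.EarlyStopping(monitor="val_loss", patience=12)`.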

2.4 Indoor experiment and model evaluation

The previous sections have outlined the development of pre-trained models and their performance evaluation using test accuracy on the source tasks. This section focuses on validating the effectiveness of these models in facilitating specific cognitive state recognition tasks, particularly addressing the scarcity of high-quality EEG data through transfer learning. Mental fatigue recognition is chosen as the target task, as fatigue is included in the VAD model and can be accurately labeled using electrocardiography (ECG) data[31].

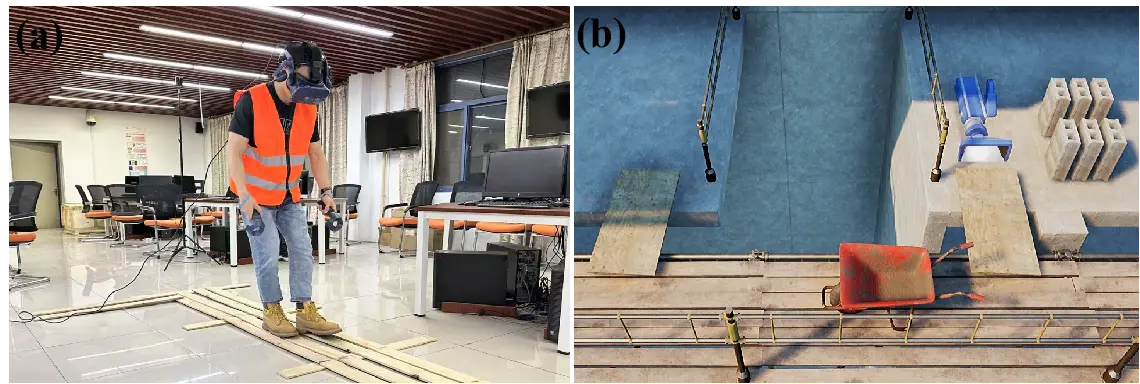

An indoor experiment was conducted to collect EEG data for model evaluation, utilizing an interactive virtual reality (VR) environment that simulates the task of moving concrete blocks on a scaffold. A total of ten participants were involved in this EEG experiment, with ethical approval granted by the Institutional Ethics Committee of Southeast University (Number: 2024ZDKYSB423). Before the formal experiment, each participant signed the Informed Consent Form. The Emotiv Epoc X device and HTC VIVE Pro VR headset were employed, while a heart rate monitor simultaneously recorded ECG data to evaluate the participants' mental fatigue levels. Throughout the experiment, participants were tasked with transferring bricks back and forth for a duration of 10 minutes, intentionally inducing an increase in their fatigue levels[5]. Figure 3 depicts the real-world experimental procedure involving a participant, as well as the layout of the constructed interactive VR scene.

Figure 3. VR-based experiment for collecting EEG data. (a) A participant during experimental procedure; (b) VR scene layout. VR: virtual reality; EEG: electroencephalogram.

The collected data were preprocessed using EEGLAB, applying a finite impulse response bandpass filter (4.0 Hz to 45.0 Hz) and independent component analysis to remove both extrinsic and intrinsic artifacts[32]. The R-R interval (RRI) data were extracted from the synchronously collected ECG data and transformed into two-dimensional data by calculating heart rate variability indicators, including the standard deviation of RRI (SDNN) and the root mean square of successive RRI differences (RMSSD)[33]. Following this, the ECG data were clustered through an adaptive Gaussian Mixture Model (A-GMM) approach to accurately identify levels of mental fatigue[3]. Compared to other clustering algorithms like K-means[34], A-GMM does not require predefining the number of clusters, aligning better with the objective of learning directly from the data with minimal intervention. This approach, based on a Gaussian distribution assumption, is particularly suited to the variability and distribution characteristics of the data collected in our study. The EEG data were then segmented through a uniform sliding window technique, as detailed in Section 2.1. For the probability density function of variables x1 (SDNN) and x2 (RMSSD), denoted as P(x1, x2), A-GMM transforms it into a superposition of M Gaussian distributions (GD), as in formula (1).

In formula (1), each GD can be represented as:

Among these parameters, ωi, μi1, μi2, Covi1, and Covi2 signify the amplitude, the expectations of variables x1 and x2, and the variances of variables x1 and x2, respectively, of the i-th Gaussian distribution. Covi refers to the covariance of variables x1 and x2 in the i-th Gaussian distribution.
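The labeling pipeline above can be illustrated with a short sketch that computes the two heart rate variability indicators and fits a two-dimensional Gaussian mixture of the usual normalized form $P(x_1, x_2) = \sum_{i=1}^{M} \omega_i \, \mathcal{N}(x_1, x_2 \mid \mu_i, \Sigma_i)$. The details of A-GMM are not given in the text, so selecting M by the Bayesian information criterion with scikit-learn's `GaussianMixture` is a stand-in assumed here, not the authors' algorithm; the function names, the sample standard deviation for SDNN, and the synthetic data are likewise illustrative.

```python
import numpy as np
from sklearn.mixture import GaussianMixture


def hrv_features(rri_ms):
    """Return (SDNN, RMSSD) for one window of R-R intervals in milliseconds."""
    rri = np.asarray(rri_ms, dtype=float)
    sdnn = rri.std(ddof=1)                        # sample standard deviation of RRI
    rmssd = np.sqrt(np.mean(np.diff(rri) ** 2))   # RMS of successive differences
    return sdnn, rmssd


def fit_gmm_by_bic(features, max_components=8, seed=0):
    """Fit full-covariance GMMs with 1..max_components and keep the lowest BIC.

    Assumption: BIC-based selection stands in for the adaptive choice of M.
    """
    best_bic, best_model = np.inf, None
    for m in range(1, max_components + 1):
        gmm = GaussianMixture(n_components=m, covariance_type="full",
                              random_state=seed).fit(features)
        bic = gmm.bic(features)
        if bic < best_bic:
            best_bic, best_model = bic, gmm
    return best_model


# Synthetic (SDNN, RMSSD) pairs drawn from two well-separated groups.
rng = np.random.default_rng(0)
demo = np.vstack([rng.normal([30, 20], 2.0, (200, 2)),
                  rng.normal([60, 50], 2.0, (200, 2))])
gmm = fit_gmm_by_bic(demo, max_components=4)
fatigue_levels = gmm.predict(demo)   # one cluster index per feature pair
```

In the fitted model, `gmm.weights_`, `gmm.means_`, and `gmm.covariances_` play the roles of ωi, (μi1, μi2), and the variance and covariance terms described above.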

Several modifications were made to the pre-trained models' architectures in order to transfer knowledge from the source domain and tailor the models to the target task. These modifications included the addition of three concatenation layers designed to merge the outputs of Dense layers across varying dimensions, specifically 192, 96, and 48 units. Following each concatenation layer, a Dense layer with 32 units was incorporated. Finally, a concluding concatenation layer was established to combine the outputs of the newly added Dense layers, leading to an output layer whose number of units matches the total number of fatigue levels, utilizing a softmax activation function.
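One possible reading of these modifications is sketched below in Keras. It assumes that the 192-, 96-, and 48-unit concatenations merge the 64-, 32-, and 16-unit Dense outputs of the three task branches of the multi-task CNN-LSTM in Section 2.2; this interpretation, the randomly initialized stand-in for the pre-trained model, the ReLU activations, the keyword-based freezing helper, and all layer names are assumptions rather than details confirmed by the text.

```python
import numpy as np
from tensorflow import keras
from tensorflow.keras import layers

TASKS = ("valence", "arousal", "dominance")


def build_base(n_samples=256, n_channels=14):
    """Stand-in for the pre-trained multi-task CNN-LSTM (random weights here)."""
    inputs = keras.Input(shape=(n_samples, n_channels))
    x = inputs
    for i in range(3):
        x = layers.Conv1D(128, 3, padding="same", activation="relu", name=f"conv{i}")(x)
        x = layers.MaxPooling1D(2)(x)
    outputs = []
    for t in TASKS:
        h = layers.LSTM(64, name=f"{t}_lstm")(x)
        for u in (64, 32, 16):
            h = layers.Dense(u, activation="relu", name=f"{t}_dense{u}")(h)
            h = layers.Dropout(0.2)(h)
        outputs.append(layers.Dense(3, activation="softmax", name=t)(h))
    return keras.Model(inputs, outputs)


def build_target(base, n_levels=6):
    """Attach the fatigue head: three concatenations, Dense(32) each, final merge."""
    fused = []
    for u in (64, 32, 16):
        feats = [base.get_layer(f"{t}_dense{u}").output for t in TASKS]
        cat = layers.Concatenate(name=f"concat{u}")(feats)   # 192, 96, or 48 features
        fused.append(layers.Dense(32, activation="relu", name=f"fuse{u}")(cat))
    merged = layers.Concatenate(name="concat_fused")(fused)
    out = layers.Dense(n_levels, activation="softmax", name="fatigue")(merged)
    return keras.Model(base.input, out)


def set_frozen(model, keywords):
    """Freeze layers whose name contains any keyword; all others stay trainable."""
    for layer in model.layers:
        layer.trainable = not any(k in layer.name for k in keywords)


target = build_target(build_base(), n_levels=6)
set_frozen(target, ("conv", "lstm"))   # one illustrative freezing configuration
# Recompile after changing `trainable` so the change takes effect.
target.compile(optimizer=keras.optimizers.Adam(1e-3),
               loss="categorical_crossentropy", metrics=["accuracy"])
probs = target(np.zeros((2, 256, 14), dtype="float32"))
```

Calling `set_frozen` with different keyword tuples, for example `()` to leave every layer trainable, gives one way to step through alternative freezing configurations during fine-tuning.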

The target model for mental fatigue recognition was developed through a fine-tuning approach, employing the same partition ratios, hyperparameter settings, and optimizer as previously used. The layers in the pre-trained models were sequentially unfrozen, including Dense, LSTM, and CNN layers, to identify the optimal configuration. Subsequently, the performance metrics of Accuracy, Macro-Precision, Macro-Recall, and Macro-F1 were calculated to evaluate the developed target model. The computation process for these metrics follows formulas (3) through (6), respectively.

Where TPi, TNi, FPi, and FNi refer to the True Positive, True Negative, False Positive, and False Negative counts for class i, respectively. The value of n is equal to the number of fatigue levels.
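As an illustration of how such metrics can be computed from a confusion matrix, a minimal NumPy sketch follows. Two conventions are assumed here because the exact formulas are not reproduced in this text: Accuracy is taken as the overall fraction of correctly classified samples, and Macro-F1 as the unweighted mean of per-class F1 scores. The function name and the small example matrix are hypothetical.

```python
import numpy as np


def macro_metrics(conf):
    """Compute accuracy and macro-averaged metrics.

    conf[i, j] is the number of samples of true class i predicted as class j.
    """
    conf = np.asarray(conf, dtype=float)
    tp = np.diag(conf)
    fp = conf.sum(axis=0) - tp   # predicted as class i but belonging elsewhere
    fn = conf.sum(axis=1) - tp   # belonging to class i but predicted elsewhere
    zeros = np.zeros_like(tp)
    precision = np.divide(tp, tp + fp, out=zeros.copy(), where=(tp + fp) > 0)
    recall = np.divide(tp, tp + fn, out=zeros.copy(), where=(tp + fn) > 0)
    denom = precision + recall
    f1 = np.divide(2 * precision * recall, denom, out=zeros.copy(), where=denom > 0)
    return {
        "accuracy": tp.sum() / conf.sum(),
        "macro_precision": precision.mean(),
        "macro_recall": recall.mean(),
        "macro_f1": f1.mean(),   # assumption: mean of per-class F1 scores
    }


# Two-class toy example: 8 + 9 correct predictions out of 20 samples.
scores = macro_metrics([[8, 2], [1, 9]])
```

Classes that are never predicted, or never occur, receive a score of zero here instead of raising a division error, which is one common but not universal convention.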

3. Results

This section begins by presenting the development results of the pre-trained models. Following this, the evaluation results of the models are discussed, highlighting their roles in enhancing specific tasks (i.e., mental fatigue recognition) through transfer learning.

3.1 Results of pre-trained models' development

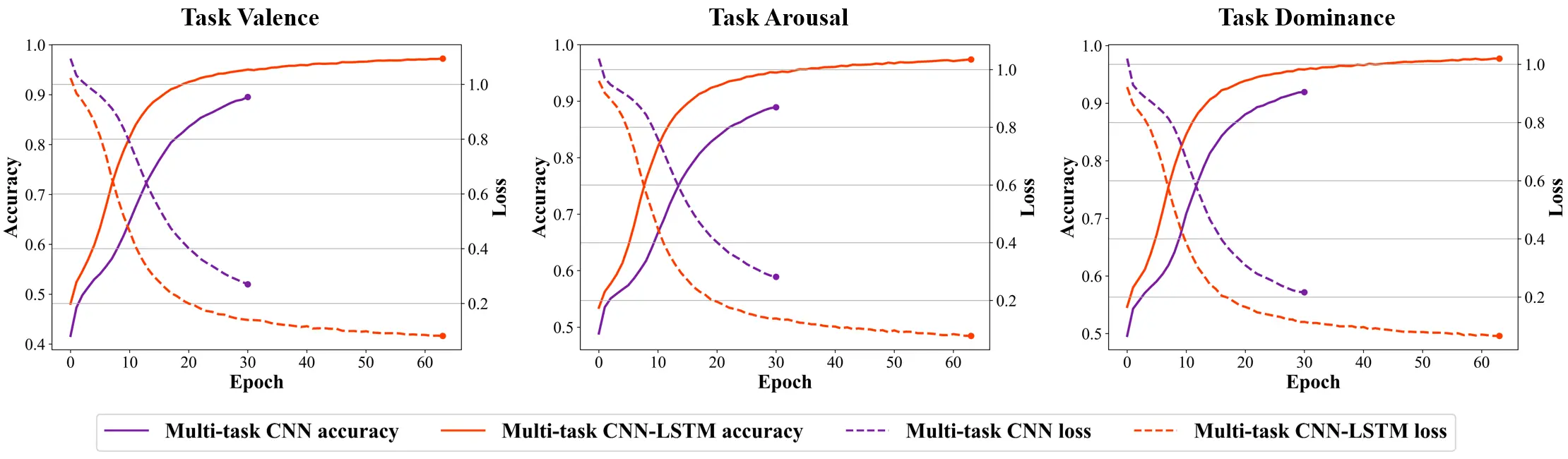

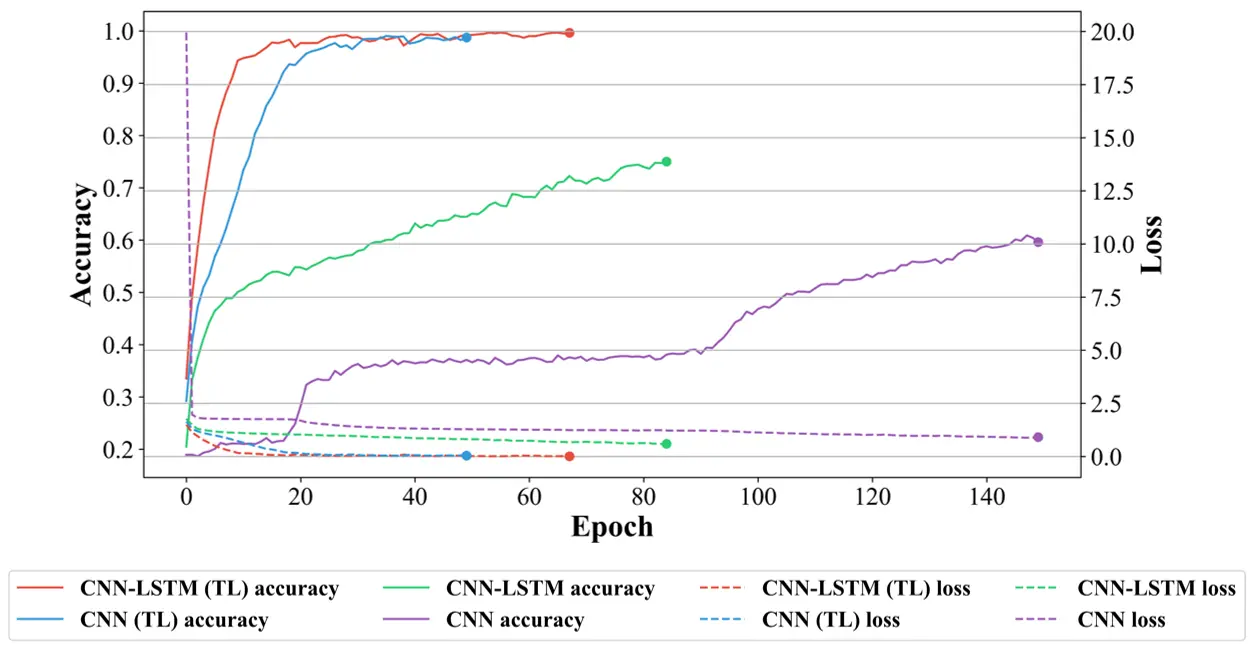

Figure 4 illustrates the training process of the pre-trained models, specifically highlighting the accuracy and loss trends of both the multi-task CNN-LSTM and multi-task CNN across training iterations. Each line in the graph marks the accuracy and loss progression over epochs, with the endpoint of each line indicating the epoch at which the early stopping mechanism was activated. This mechanism was implemented to prevent overfitting, halting the training once the model's performance on the validation set started to deteriorate. The curves demonstrate that the multi-task CNN-LSTM model consistently achieves higher accuracy and lower loss compared to the multi-task CNN model across source tasks, indicating that the former is more effective in learning meaningful patterns from the data. Moreover, the multi-task CNN-LSTM model takes longer to reach early stopping, requiring over 60 epochs to converge, which suggests that it undergoes more thorough learning and a deeper understanding of the underlying data compared to the other model. This extended training time could indicate better generalization ability, as the model explores the data more extensively before converging.

Figure 4. Pre-trained models' training process. CNN: convolutional neural network; LSTM: long short-term memory network.

Table 1 further compares the test accuracies of both multi-task and single-task models for the valence, arousal, and dominance tasks. The multi-task CNN-LSTM model outperforms all other models, achieving test accuracies of 88.34%, 88.76%, and 89.28% for the respective tasks, compared with 75.25% to 77.28% for its single-task counterpart. The multi-task CNN, by contrast, does not surpass the single-task models. Furthermore, the multi-task CNN-LSTM model exhibits a relatively balanced performance across the three cognitive state recognition tasks, highlighting its ability to generalize well to different aspects of cognitive states. In contrast, the other models show less balanced accuracies, with some tasks being more difficult to classify than others.

| Pre-trained models | Test accuracy (Valence) | Test accuracy (Arousal) | Test accuracy (Dominance) |
| --- | --- | --- | --- |
| CNN-LSTM (multi-task) | 88.34% | 88.76% | 89.28% |
| CNN (multi-task) | 70.18% | 70.40% | 74.81% |
| CNN-LSTM (single-task) | 75.25% | 75.78% | 77.28% |
| CNN (single-task) | 72.23% | 77.29% | 78.00% |

CNN: convolutional neural network; LSTM: long short-term memory network.

3.2 Results of pre-trained models' evaluation

Approximately 100 minutes of data were gathered from 10 participants during the indoor experiment. To analyze these data, we employed the adaptive GMM algorithm for clustering, which identified six distinct fatigue levels and assigned them to the corresponding data segments. These fatigue levels served as the labels for developing target models focused on mental fatigue recognition. These models were created by fine-tuning the pre-trained multi-task models, which had previously been trained on the large-scale DEAP dataset to recognize cognitive states.

The performance metrics of the fine-tuned models are shown in Table 2, alongside those of single-task models that were trained from scratch using the target dataset. The inclusion of both pre-trained multi-task models and single-task models highlights the effectiveness of using transfer learning to enhance model performance. The results indicate that the multi-task CNN-LSTM model consistently outperforms all other models, achieving a peak accuracy of 92.29% when all layers are unfrozen. In contrast, the single-task models, which did not benefit from transfer learning, achieved test accuracies of only 63.19% and 55.33%, respectively. This stark difference clearly demonstrates the ability of pre-trained deep learning models to overcome challenges posed by limited EEG data, significantly improving model performance in cognitive state recognition tasks.

| Pre-trained models | Frozen layers | Accuracy | Macro-Precision | Macro-Recall | Macro-F1 |
| --- | --- | --- | --- | --- | --- |
| Multi-task CNN-LSTM | CNN+LSTM+Dense layers | 31.49% | 29.58% | 27.82% | 26.44% |
| Multi-task CNN-LSTM | LSTM+Dense layers | 38.27% | 37.95% | 35.12% | 34.35% |
| Multi-task CNN-LSTM | Dense layers | 64.49% | 62.52% | 62.87% | 62.56% |
| Multi-task CNN-LSTM | None | 92.29% | 91.94% | 91.73% | 91.80% |
| Multi-task CNN | CNN+Dense layers | 26.64% | 24.73% | 19.88% | 22.30% |
| Multi-task CNN | Dense layers | 59.72% | 57.42% | 55.81% | 56.61% |
| Multi-task CNN | None | 79.82% | 77.84% | 78.65% | 78.23% |
| None (CNN-LSTM) | N/A | 63.19% | 52.13% | 57.06% | 54.48% |
| None (CNN) | N/A | 55.33% | 54.02% | 54.61% | 54.23% |

CNN: convolutional neural network; LSTM: long short-term memory network.

These findings underscore the importance of transfer learning, particularly when high-quality, domain-specific data is scarce. The use of pre-trained models enables the leveraging of knowledge learned from a larger, general dataset to improve performance on smaller, more specialized datasets. Furthermore, the superior performance of the multi-task model suggests that multi-task learning is an effective strategy for capturing complex patterns and relationships in EEG data, enhancing the model's ability to generalize across different cognitive states.

4. Discussion

This section provides an in-depth exploration of the pre-training and model evaluation processes, summarizes the core contributions to the existing body of knowledge, and outlines the limitations of this research.

4.1 Discussion on pre-training process

Firstly, the CNN layers employed in this study autonomously extract relevant features for model training, allowing the original segmented EEG data to be input directly into deep learning networks[11]. This approach marks a significant advancement over prior studies that relied on machine learning techniques and conventional feature extraction methods[9,35], which often require extensive manual feature engineering. Unlike predefined feature extraction toolkits such as PyEEG[36], which rely on manually selected features, the CNN-based approach requires no such intervention. By allowing the model to learn the most relevant features directly from the raw data, this method can improve performance and generalization to new, unseen data.

A substantial body of research has utilized the DEAP dataset for various cognitive state recognition tasks, with many studies employing network architectures like CNN-LSTM and its variants[37]. In line with these established methodologies, our study focuses on the multi-task CNN-LSTM architecture for developing pre-trained models. This approach yielded impressive results, with test accuracies of 88.34%, 88.76%, and 89.28% for the valence, arousal, and dominance tasks, respectively. These results represent a notable improvement compared to the performance ranges summarized by Liu, Ding[38], highlighting the robustness of our model and its ability to capture complex cognitive state features. These results further demonstrate the strength of using pre-trained models in EEG-based cognitive state recognition tasks. In summary, the pre-trained models in this study successfully extract essential features from the original EEG data, which significantly enhances the performance of various cognitive state recognition tasks outlined in the VAD model.

Moreover, prior studies on transfer learning have often focused on fine-tuning pre-trained models like VGG, EfficientNet, and ResNet[39], which have primarily been developed for image-related tasks. However, EEG data does not have the same structure as images, particularly due to the absence of depth dimensions. In this study, to better suit the nature of EEG data, we utilize one-dimensional CNN layers, which are better aligned with the structure of time-series data. To assess the potential for transfer learning, EEG data is converted into grayscale image format to fine-tune more traditional image models, such as EfficientNet B3[22] and VGG-16[23]. The results of this experiment show an accuracy range of 70% to 80% for the mental fatigue recognition task, which, while promising, underscores the need for models specifically tailored to EEG data. This finding highlights the importance of developing dedicated pre-trained models that are optimized for EEG datasets, as such models would be more adaptable and effective for the unique challenges posed by EEG-based cognitive state recognition tasks.
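The conversion of EEG segments into grayscale images is not detailed above. One simple possibility, shown purely as an assumption, is per-segment min-max scaling to 8-bit intensities, with the single channel replicated three times for networks that expect RGB input; the function name and scaling choice are illustrative.

```python
import numpy as np


def eeg_to_grayscale(segment):
    """Scale one EEG segment (channels x samples) to an 8-bit image.

    Assumption: intensities come from per-segment min-max scaling, and the
    gray channel is copied three times for RGB-input image networks.
    """
    seg = np.asarray(segment, dtype=float)
    lo, hi = seg.min(), seg.max()
    scaled = np.zeros_like(seg) if hi == lo else (seg - lo) / (hi - lo)
    gray = np.round(scaled * 255).astype(np.uint8)
    return np.repeat(gray[..., None], 3, axis=-1)


# A synthetic 14-channel, 2-second segment becomes a 14 x 256 x 3 image.
image = eeg_to_grayscale(np.arange(14 * 256).reshape(14, 256))
```

Image networks pre-trained on ImageNet generally expect larger inputs than 14 × 256 pixels, so a resizing step would also be needed before fine-tuning; it is omitted from this sketch.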

4.2 Discussion on model evaluation process

According to the performance metrics presented in Table 2, models developed through transfer learning show significant improvements in overall performance compared to those without transfer learning, with the greatest improvement observed from 63.19% to 92.29%. Figure 5 illustrates the training process of these models, highlighting the changes in accuracy and loss over epochs. It is evident that models developed through transfer learning start at a higher performance level and converge more quickly, reaching convergence in approximately 50 to 67 epochs, whereas models without transfer learning take about 85 to 150 epochs. This faster convergence is a direct benefit of transfer learning, as it leverages prior knowledge learned from a large, general dataset to improve the learning process for a specific task. In conclusion, aspects of model performance, such as improved accuracy, faster convergence, and higher starting points, align with the key advantages of transfer learning, as summarized by Pan and Yang[19], who emphasized the ability of pre-trained models to provide a strong foundation for more efficient learning.

Figure 5. Training process of the target models. CNN: convolutional neural network; LSTM: long short-term memory network.

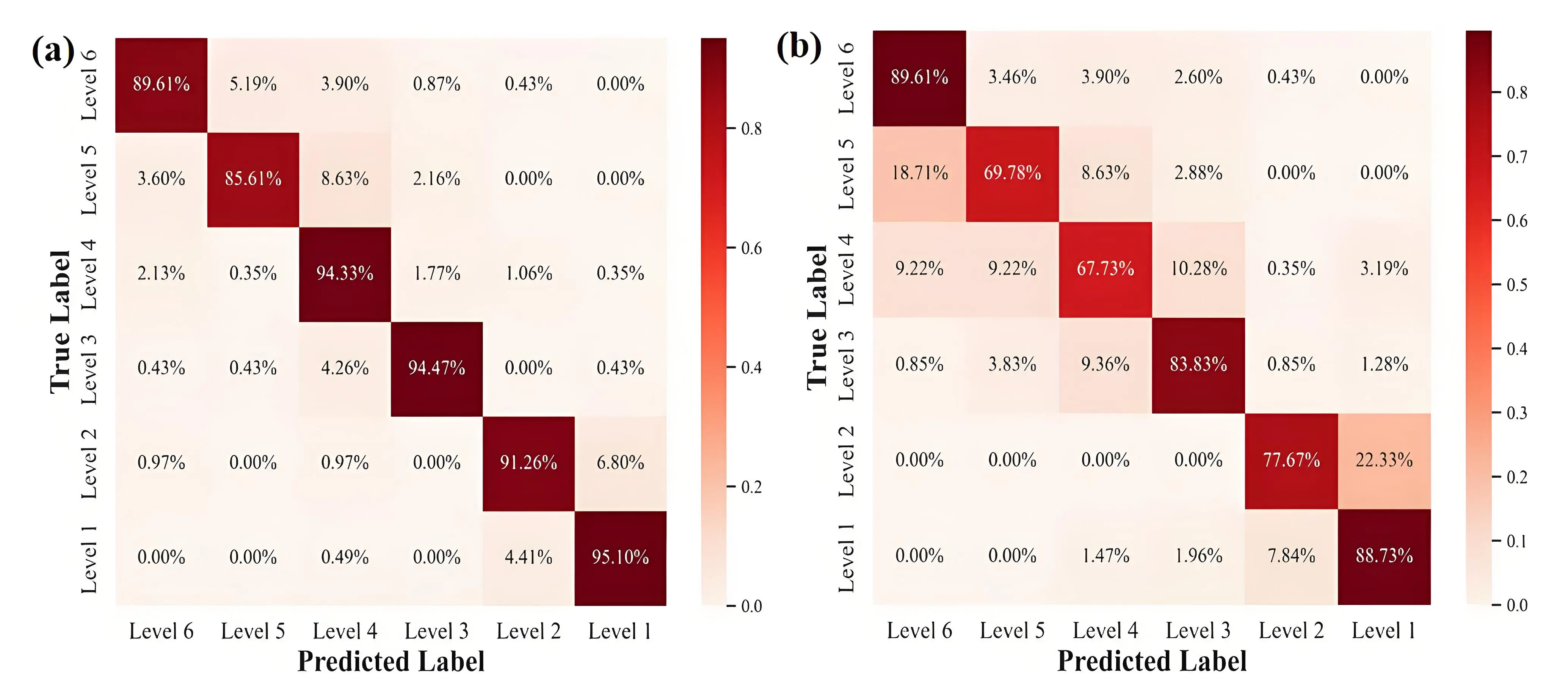

Furthermore, a comparison of the confusion matrices shown in Figure 6 reveals varying degrees of performance imbalance among the models developed using the pre-trained multi-task models. For instance, the multi-task CNN model exhibits notably low performance when recognizing certain fatigue levels, such as level 2 (77.67%), level 4 (67.73%), and level 5 (69.78%). These instances of underperformance can largely be attributed to inherent discrepancies in the data labels[40], which arise from the adaptive GMM-based clustering algorithm used for labeling, rather than from the developed pre-trained models themselves. These discrepancies may result from the clustering process, which can sometimes produce inaccurate or inconsistent labels, particularly when the data exhibits significant variability. This highlights a key challenge in EEG-based cognitive state recognition, namely the significant influence that the quality of EEG data and the accuracy of the assigned labels have on model performance. As such, improving the labeling process, and ensuring data consistency, will be crucial for future model development[11]. Additionally, it underscores the importance of incorporating advanced data preprocessing techniques, such as more robust noise reduction methods, to enhance the reliability of the input data used for training.

Figure 6. Confusion matrices of the target models developed by transfer learning. (a) Model developed on multi-task CNN-LSTM; (b) Model developed on multi-task CNN. CNN: convolutional neural network; LSTM: long short-term memory network.

4.3 Contributions

Theoretically, this study contributes validated, effective pre-trained models based on the DEAP dataset, which have demonstrated strong performance in cognitive state recognition tasks. These models extract valuable insights from large-scale EEG data, offering significant potential to advance future research in EEG-based cognitive state recognition, particularly in the construction domain. By leveraging large-scale datasets like DEAP, these models address the common challenge of data scarcity that often hinders the application of EEG in real-world settings. This scarcity is typically compounded by constraints such as limited experimental budgets, subjective labeling methods, equipment limitations, and the availability of participants. By overcoming these challenges, the pre-trained models developed in this study offer a robust framework for transfer learning, enhancing the performance of target models, especially when adapting to new, domain-specific data with minimal additional training.

Practically, the pre-trained models in this study provide a versatile solution for customizing cognitive state recognition systems tailored to a variety of construction scenarios. These include real-time detection of cognitive states like fatigue[10], stress[9], and distraction[41] among construction workers, including scaffolders, bar fixers, and general laborers. By accurately and promptly identifying workers' cognitive states, these models enable the timely implementation of appropriate interventions[42], improving overall workplace safety. For example, if a worker is detected as experiencing high mental fatigue, an automated safety alert can be triggered, prompting a schedule adjustment to ensure they take short, restorative breaks. This proactive approach to managing cognitive states in real time can significantly reduce safety risks on construction sites, fostering a safer and more productive working environment.

Moreover, for practical deployment, integrating lightweight and non-intrusive EEG devices (such as Muse and OpenBCI) with edge computing technology can facilitate real-time EEG monitoring. This combination allows for continuous tracking of workers' cognitive states, providing an efficient and scalable solution for real-time construction safety management. These advancements pave the way for more dynamic and responsive safety protocols, helping to prevent accidents and promote a proactive safety culture within the construction industry.

4.4 Limitations and future directions

Although the model development and evaluation processes are well-established, several limitations must be considered. This study, informed by prior experience with deep learning models on the DEAP dataset[38], primarily focuses on CNN-LSTM and related architectures. While the pre-training and evaluation process has demonstrated excellent performance, the models' effectiveness may still be influenced by data quality and architecture choice. Future research could explore cutting-edge deep learning techniques, such as Transformer layers, ResNet-1D architectures, Bayesian layers to enhance uncertainty estimation[18], and artificial neural network (ANN) deep learning auto-encoders[43]. Additionally, domain adaptation techniques could be explored to improve the transferability of models to new, domain-specific datasets[44].

For the indoor experiments aimed at collecting EEG data for model evaluation, only 10 participants were recruited under an intentionally controlled sample size, which may not be sufficient to account for the various conditions in construction-related EEG analysis. Increasing the sample size in future studies is essential for enhancing model generalizability[11], and would also help determine optimal settings and provide more robust validation. Moreover, the immersive experience of the constructed 3D VR scene could be further enhanced to improve its effectiveness in simulating real-world scenarios[45,46]. Furthermore, updating EEG signal noise removal techniques and combining diverse data labeling methods, such as expert annotation and hybrid EEG-ECG models, would further improve the reliability of EEG data and the accuracy of the developed models[47].

Regarding the strategy of unfreezing multiple CNN, LSTM, and Dense layers, the analysis of layer-specific effects on performance remains incomplete, and a more in-depth exploration of the unfreezing strategy would be beneficial. It is also strongly recommended to investigate other cognitive state recognition tasks covered by the VAD model[25], such as vigilance and anxiety recognition, to more thoroughly evaluate the effectiveness and applicability of the pre-trained models. Despite the validated performance of the proposed inductive transfer learning framework, notable risks of overfitting, computational costs, and constraints on real-time deployment remain. Future studies could benefit from incorporating prevention mechanisms such as cross-validation, network simplification, and the adoption of edge computing and lightweight EEG devices to address these challenges[48].
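One way to make the suggested exploration of unfreezing strategies systematic is to enumerate candidate configurations before training. The sketch below is an assumption-laden illustration, not the procedure used in this study: it takes a list of hypothetical layer-block names ordered from input to output and generates progressive plans in which the last k blocks are trainable and the rest stay frozen.

```python
from typing import Dict, List

# Hypothetical layer blocks ordered from input to output; names are placeholders.
LAYER_BLOCKS: List[str] = ["cnn_1", "cnn_2", "lstm", "dense_1", "dense_out"]


def progressive_unfreezing_plans(blocks: List[str]) -> List[Dict[str, bool]]:
    """Return one trainable-flag map per plan, unfreezing from the output backwards.

    Plan k leaves the last k blocks trainable (True) and freezes the rest (False).
    """
    plans = []
    for k in range(1, len(blocks) + 1):
        cutoff = len(blocks) - k  # index of the first trainable block
        plans.append({name: idx >= cutoff for idx, name in enumerate(blocks)})
    return plans


plans = progressive_unfreezing_plans(LAYER_BLOCKS)
for k, plan in enumerate(plans, start=1):
    trainable = [name for name, flag in plan.items() if flag]
    print(f"plan {k}: trainable = {trainable}")
```

Each plan could then be applied to a pre-trained model by setting the corresponding layers' trainable flags (for instance, the `trainable` attribute of Keras layers) and fine-tuning on the target data, so that accuracy can be compared across plans to estimate layer-specific effects.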

5. Conclusion

This study primarily contributes pre-trained models designed to support EEG-based safety management for construction workers. The model development leverages the well-established DEAP dataset, addressing the challenge of data scarcity in cognitive state recognition through transfer learning. The scarcity of high-quality EEG data often arises from constraints such as limited experimental budgets, subjective labeling methods, equipment limitations, and participant availability. To achieve the research objectives, this study involves: 1) extracting extensive and accurately labeled EEG data from the DEAP dataset; 2) selecting appropriate network architectures and implementing pre-trained model development; and 3) collecting EEG data for mental fatigue recognition during indoor experiments, followed by evaluating the effectiveness of the pre-trained models. The evaluation results demonstrate the efficacy of these models in enhancing target model performance, as evidenced by an increase in test accuracy from 63.19% to 92.29%, along with higher starting points and quicker convergence.

This study provides valuable guidance for future research and practical applications aimed at improving performance in various EEG-based cognitive state recognition tasks, particularly in addressing the scarcity of high-quality EEG data. Given the limitations in network architecture selection, experimental settings, and considered recognition tasks, future studies can address these constraints to achieve further advancements.

Acknowledgement

We extend our heartfelt thanks to the participants who volunteered to take part in the indoor experiment.

Authors' contribution

Li Z: Revision, article writing, visualization, validation, methodology, investigation, data curation and conceptualization.

Tian F: Revision, methodology, investigation and conceptualization.

Chen G: Revision, validation and investigation.

Zhang S: Software and methodology.

Li Q: Supervision, resources, project administration, funding acquisition.

Conflicts of interest

The authors declare no conflicts of interest.

Ethical approval

This study has received ethical approval from the Institutional Ethics Committee (IEC) of Zhongda Hospital, Southeast University (Number: 2024ZDKYSB423).

Consent to participate

The authors declare that all participants signed the Informed Consent Form, ensuring that participants were fully informed about the study's purpose, procedures, risks, and benefits before consenting to participate.

Consent for publication

Not applicable.

Availability of data and materials

The data and materials can be obtained from the corresponding author.

Funding

We gratefully acknowledge the financial support provided by the National Natural Science Foundation of China (52378492) and the SEU Innovation Capability Enhancement Plan for Doctoral Students (CXJH_SEU 25032).

References

1. Mehmood I, Li H, Qarout Y, Umer W, Anwer S, Wu H, et al. Deep learning-based construction equipment operators' mental fatigue classification using wearable EEG sensor data. Adv Eng Inform. 2023;56(1):101978.

2. Yang J, Ye G, Xiang Q, Kim M, Liu Q, Yue H. Insights into the mechanism of construction workers' unsafe behaviors from an individual perspective. Saf Sci. 2021;133:105004.

3. Li Z, Yu Y, Tian F, Chen X, Xiahou X, Li Q. Vigilance recognition for construction workers using EEG and transfer learning. Adv Eng Inform. 2025;64:103052.

4. Yu Y, Li H, Yang X, Kong L, Luo X, Wong AYL. An automatic and non-invasive physical fatigue assessment method for construction workers. Autom Constr. 2019;103:1-12.

5. Wang D, Li H, Chen J. Detecting and measuring construction workers' vigilance through hybrid kinematic-EEG signals. Autom Constr. 2019;100:11-23.

6. Xiang Q, Ye G, Liu Y, Miang Goh, Wang D, He T. Cognitive mechanism of construction workers' unsafe behavior: A systematic review. Saf Sci. 2023;159:106037.

7. Hu Z, Chan WT, Hu H, Xu F. Cognitive Factors Underlying Unsafe Behaviors of Construction Workers as a Tool in Safety Management: A Review. J Constr Eng Manag. 2023;149(3):03123001.

8. Saedi S, Fini AAF, Khanzadi M, Wong J, Sheikhkhoshkar M, Banaei M. Applications of electroencephalography in construction. Autom Constr. 2022;133:103985.

9. Jebelli H, Hwang S, Lee S. EEG-based workers' stress recognition at construction sites. Autom Constr. 2018;93:315-324.

10. Xing X, Zhong B, Luo H, Rose T, Li J, Antwi-Afari MF. Effects of physical fatigue on the induction of mental fatigue of construction workers: A pilot study based on a neurophysiological approach. Autom Constr. 2020;120:103381.

11. Cheng B, Fan C, Fu H, Huang J, Chen H, Luo X. Measuring and Computing Cognitive Statuses of Construction Workers Based on Electroencephalogram: A Critical Review. IEEE Trans Comput Social Syst. 2022;9(6):1644-1659.

12. Qin Y, Bulbul T. Electroencephalogram-based mental workload prediction for using Augmented Reality head mounted display in construction assembly: A deep learning approach. Autom Constr. 2023;152:104892.

13. Wang Y, Huang Y, Gu B, Cao S, Fang D. Identifying mental fatigue of construction workers using EEG and deep learning. Autom Constr. 2023;151:104887.

14. Aryal A, Ghahramani A, Becerik-Gerber B. Monitoring fatigue in construction workers using physiological measurements. Autom Constr. 2017;82(1):154-165.

15. Jiang W, Ding L. Unsafe hoisting behavior recognition for tower crane based on transfer learning. Autom Constr. 2024;160:105299.

16. Koelstra S, Muhl C, Soleymani M, Lee JS, Yazdani A, Ebrahimi T, et al. DEAP: A Database for Emotion Analysis Using Physiological Signals. IEEE Trans Affective Comput. 2012;3:18-31.

17. Jiang WB, Liu XH, Zheng WL, Lu BL. SEED-VII: A Multimodal Dataset of Six Basic Emotions with Continuous Labels for Emotion Recognition. IEEE Trans Affective Comput. 2024.

18. Huang W, Yan G, Chang W, Zhang Y, Yuan Y. EEG-based classification combining Bayesian convolutional neural networks with recurrence plot for motor movement/imagery. Pattern Recognit. 2023;144:109838.

19. Pan SJ, Yang Q. A Survey on Transfer Learning. IEEE Trans Knowl Data Eng. 2010;22(10):1345-1359.

20. Wan Z, Yang R, Huang M, Zeng N, Liu X. A review on transfer learning in EEG signal analysis. Neurocomputing. 2021;421:1-14.

21. Wang N, Issa RRA, Anumba CJ. Transfer learning-based query classification for intelligent building information spoken dialogue. Autom Constr. 2022;141:104403.

22. Koonce B. EfficientNet. In: Koonce B, editor. Convolutional Neural Networks with Swift for Tensorflow: Image Recognition and Dataset Categorization. USA: Apress Berkeley; 2021. p. 109-123.

23. Chaki J, Woźniak M. Deep learning for neurodegenerative disorder (2016 to 2022): A systematic review. Biomed Signal Process Control. 2023;80:104223.

24. Rahman MM, Sarkar AK, Hossain MA, Hossain MS, Islam MR, Hossain MB, et al. Recognition of human emotions using EEG signals: A review. Comput Biol Med. 2021;136:104696.

25. Gannouni S, Aledaily A, Belwafi K, Aboalsamh H. Adaptive Emotion Detection Using the Valence-Arousal-Dominance Model and EEG Brain Rhythmic Activity Changes in Relevant Brain Lobes. IEEE Access. 2020;8:67444-67455.

26. Lashgari E, Liang D, Maoz U. Data augmentation for deep-learning-based electroencephalography. J Neurosci Methods. 2020;346:108885.

27. Khateeb M, Anwar SM, Alnowami M. Multi-Domain Feature Fusion for Emotion Classification Using DEAP Dataset. IEEE Access. 2021;9:12134-12142.

28. Kwak Y, Kong K, Song WJ, Min BK, Kim SE. Multilevel Feature Fusion With 3D Convolutional Neural Network for EEG-Based Workload Estimation. IEEE Access. 2020;8:16009-16021.

29. Xiahou X, Li Z, Xia J, Zhou Z, Li Q. A Feature-Level Fusion-Based Multimodal Analysis of Recognition and Classification of Awkward Working Postures in Construction. J Constr Eng Manag. 2023;149(12):04023138.

30. Zhang Y, Yang Q. A Survey on Multi-Task Learning. IEEE Trans Knowl Data Eng. 2022;34(12):5586-5609.

31. Anwer S, Li H, Umer W, Antwi-Afari MF, Mehmood I, Yu Y, Haas C, Wong AYL. Identification and Classification of Physical Fatigue in Construction Workers Using Linear and Nonlinear Heart Rate Variability Measurements. J Constr Eng Manag. 2023;149(7):04023057.

32. Delorme A, Makeig S. EEGLAB: an open source toolbox for analysis of single-trial EEG dynamics including independent component analysis. J Neurosci Methods. 2004;134:9-21.

33. Nwaogu JM, Chan APC. Work-related stress, psychophysiological strain, and recovery among on-site construction personnel. Autom Constr. 2021;125:103629.

34. Likas A, Vlassis N, Verbeek JJ. The global k-means clustering algorithm. Pattern Recognit. 2003;36(2):451-461.

35. Jeon J, Cai H. Multi-class classification of construction hazards via cognitive states assessment using wearable EEG. Adv Eng Inform. 2022;53:101646.

36. Bao FS, Liu X, Zhang C. PyEEG: An Open Source Python Module for EEG/MEG Feature Extraction. Comput Intell Neurosci. 2011;1:406391.

37. Sheykhivand S, Mousavi Z, Rezaii TY, Farzamnia A. Recognizing Emotions Evoked by Music Using CNN-LSTM Networks on EEG Signals. IEEE Access. 2020;8:139332-139345.

38. Liu Y, Ding Y, Li C, Cheng J, Song R, Wan F, et al. Multi-channel EEG-based emotion recognition via a multi-level features guided capsule network. Comput Biol Med. 2020;123:103927.

39. Munien C, Viriri S. Classification of Hematoxylin and Eosin-Stained Breast Cancer Histology Microscopy Images Using Transfer Learning with Efficient Nets. Comput Intell Neurosci. 2021;17:5580914.

40. Thabtah F, Hammoud S, Kamalov F, Gonsalves A. Data imbalance in classification: Experimental evaluation. Inf Sci. 2020;513:429-441.

41. Ke J, Zhang M, Luo X, Chen J. Monitoring distraction of construction workers caused by noise using a wearable Electroencephalography (EEG) device. Autom Constr. 2021;125:103598.

42. Li Z, Xiahou X, Chen G, Zhang S, Li Q. EEG-based detection of adverse mental state under multi-dimensional unsafe psychology for construction workers at height. Dev Built Environ. 2024;19:100513.

43. Abbas N, Umar T, Salih R, Akbar M, Hussain Z, Haibei X. Structural Health Monitoring of Underground Metro Tunnel by Identifying Damage Using ANN Deep Learning Auto-Encoder. Appl Sci. 2023;13(3):1332.

44. Zhang N, Lu J, Li K, Fang Z, Zhang G. Source-Free Unsupervised Domain Adaptation: Current research and future directions. Neurocomputing. 2024;564:126921.

45. Umeokafor N, Umar T, Windapo A, Ibrahim CKIC. Drivers of Immersive Technologies in Construction Health and Safety Education and Training. In: Umeokafor N, Emuze F, Ibrahim CKIC, Sunindijo RY, Umar T, Windapo A, Teizer J, editors. Handbook of Drivers of Continuous Improvement in Construction Health, Safety, and Wellbeing. England: Routledge; 2024. p. 90-102.

46. Umar T. Key factors influencing the implementation of three-dimensional printing in construction. Manag Procure Law. 2021;174(3):104-117.

47. Srinivasan S, Duela Johnson. A novel approach to schizophrenia Detection: Optimized preprocessing and deep learning analysis of multichannel EEG data. Expert Syst Appl. 2024;246:122937.

48. Khan WZ, Ahmed E, Hakak S, Yaqoob I, Ahmed A. Edge computing: A survey. Futur Gener Comput Syst. 2019;97:219-235.

Copyright

© The Author(s) 2025. This is an Open Access article licensed under a Creative Commons Attribution 4.0 International License (https://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, sharing, adaptation, distribution and reproduction in any medium or format, for any purpose, even commercially, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.
