Abstract

Aims: This paper presents a systematic review of the use of Virtual Reality (VR), Mixed Reality (MR), and Augmented Reality (AR) technologies in empathic computing, in order to give a comprehensive understanding of current research on immersive empathic computing and to identify key research trends, gaps, and future directions.

Methods: The PRISMA methodology was applied using keyword-based searches, publishing venue selection, and citation thresholds to identify 77 papers for detailed review. We analyze these papers to categorize the key areas of empathic computing research, including emotion elicitation, emotion recognition, fostering empathy, and cross-disciplinary applications such as healthcare, learning, entertainment and collaboration.

Results: Our findings reveal that VR has been the dominant platform for empathic computing research over the past two decades, while AR and MR remain underexplored. Dimensional emotion models have influenced this domain more than discrete emotion models for eliciting and recognizing emotions and for fostering empathy. Additionally, we identify perception and cognition as pivotal factors influencing user engagement and emotional regulation.

Conclusion: Future research should expand the exploration of AR and MR for empathic computing, refine emotion models by integrating hybrid frameworks, and examine the relationship between lower body postures and emotions in immersive environments as an emerging research opportunity.

Keywords

1. Introduction

This paper provides a systematic review of the use of immersive technologies in Empathic Computing experiences. According to Clark[1], Alfred Adler described empathy as “seeing with the eyes of another, listening with the ears of another, and feeling with the heart of another”. Empathic computing is an emerging field that explores how technology can be used to create shared understanding or empathy between users[2]. According to Piumsomboon et al.[2], empathic computing systems involve a combination of natural collaboration between users, user experience and environment capture, and implicit understanding of user emotion and context. The focus is on creating shared emotional experiences, so it extends the earlier work by Picard on Affective Computing[3] and similar systems, where the focus is on creating interfaces that recognize emotion from individual users. Picard described affective computing as computing that relates to, arises from, or influences emotions. That work defines emotion as a pivotal mechanism for expressing human feelings, delineating perceptions and cognitive states, fostering empathy, and facilitating interactions among individuals. Subsequent advances in human-computer interaction have transformed affective computing, leading to the emergence of empathic computing as the prospective evolution of this field. Empathic computing advances prior Human-Computer Interaction (HCI) research on emotion recognition by enhancing the integration of emotional data and promoting richer human-machine interactions.

Empathic computing encompasses several key elements, including Emotion Recognition, Context Awareness, Adaptive Interaction, Feedback Mechanisms, User Modelling, and Ethics and Privacy[4]. In such systems, emotion can be implicitly recognized through a variety of channels, including spoken words, facial expressions, behavioral patterns, and physiological responses[5]. Virtual Reality (VR) can be used to enable users to feel “present” in a synthetic 3D scene[6], and so is an ideal technology for sharing experiences. For example, a VR display can be used with live 360 video streaming to enable a remote person to feel like they are sharing the same location as a remote collaborator. Similarly, Augmented Reality (AR) seamlessly blends digital content with the physical environment and provides an intuitive way to share communication cues that enhance natural collaboration between remote people. For example, AR can enable a remote user to overlay gaze and gesture cues on a local user’s workspace, helping the local user significantly improve their performance on a physical task. Both VR and AR offer immersion and a sense of presence, allowing users to experience emotions more vividly and authentically than with traditional emotion elicitation and recognition tools and methods. AR/VR systems can integrate real-time feedback loops that respond to users’ emotional and physiological cues, such as heart rate and skin conductance. This dynamic interaction goes beyond what static computational tools offer, enabling adaptive experiences that are critical for emotion elicitation and regulation. Moreover, users can navigate and interact within a 3D space, which creates a more engaging and contextually rich experience that is often missing in conventional methods. Thus, AR and VR technologies provide an ideal medium to induce, study, and understand emotions, moods, feelings, reasoning, deliberation, and planning[7,8].

Systematic reviews of AR/VR research are crucial since they provide a comprehensive and unbiased synthesis of existing studies, identifying trends, gaps, and inconsistencies. They also help researchers understand the current state of knowledge, guide future research directions, and ensure that new studies build on a solid foundation of previous work. There have been previous systematic reviews and surveys of the use of AR/VR technologies for enhancing face-to-face and remote collaboration[9] and evaluating collaborative AR systems[10-14], but not of empathic computing. For example, Schäfer et al. conducted a literature survey from the viewpoint of the three pillars of a remote collaboration system: Environment, Avatars, and Interaction[13]. Similarly, Ens et al. covered 30 years of collaborative AR or Mixed Reality (MR) research and integrated it with established theories from computer-supported cooperative work, aiming to guide MR researchers toward productive future endeavors[11]. These reviews have helped researchers understand previous work in the field, the technologies and methods typically used, and, most importantly, the research gaps and opportunities for future work. Bernal et al. explained that empathy research in immersive virtual environments using devices like Galea encompasses (1) emotion elicitation, (2) emotion recognition, and (3) fostering empathy[15]. A wide range of research using AR and VR has focused on each of these three aspects separately; however, no previous systematic review has addressed all three together to examine the role of immersive technologies in empathic computing.

Motivated by previous systematic reviews and relevant gaps in this field, our systematic review of the use of immersive technologies (AR/VR) for empathic computing is designed to identify research trends and knowledge gaps to advance the field. The novel contributions of the paper are to:

• Explore the research trends in empathic computing and immersive technologies from the last two decades.

• Explore the use of immersive technologies for emotion elicitation, recognizing emotions, and fostering empathy in various application domains.

• Investigate the various emotion models in immersive empathic computing.

• Explore the perception and cognition related to immersive empathic computing.

• Identify the research gaps and future opportunities for the use of immersive technologies in empathic computing.

2. Method

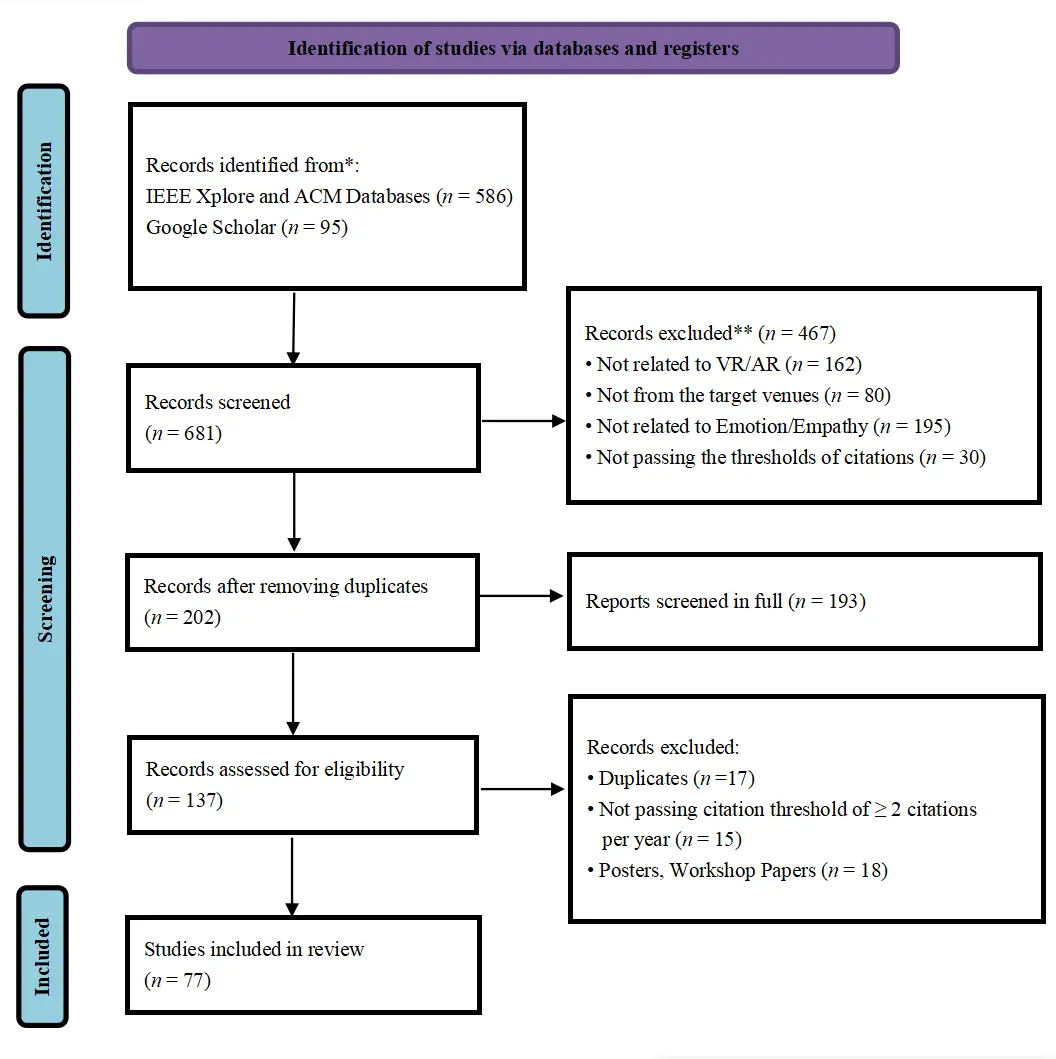

In this study, we followed the preferred reporting items for systematic reviews and meta-analyses (PRISMA) as a reporting guideline[16]. PRISMA is widely used for systematic reviews and meta-analyses as it guides researchers in reporting their findings transparently and systematically by providing a checklist and a flow diagram. Following the PRISMA guidelines, we collected papers that integrated empathic computing research in immersive technologies from the leading VR/AR conferences and journals. These included IEEE Virtual Reality and 3D User Interfaces (VR), IEEE International Symposium on Mixed and Augmented Reality (ISMAR), ACM Virtual Reality Software and Technology, IEEE Transactions on Visualization and Computer Graphics, and the ACM Conference on Human-computer Interaction. We restricted the search to the period from 2000 to 2024, roughly the past two decades, due to the significant advances in AR/VR technologies during that time. This period has seen key publications, frameworks, and innovations that align with industry trends, so focusing on it avoids outdated technologies and captures innovations relevant to current and future developments[17]. We searched the conferences and journals for papers with the keywords (“Empathic” OR “Emotion”) AND (“Virtual Reality” OR “Augmented Reality” OR “Mixed Reality” OR “Extended Reality”) in their title or abstract. This search yielded 681 papers in total: 586 unique papers from the IEEE and ACM databases and 95 from a Google Scholar search.
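The boolean keyword query above can be expressed as a simple filter over bibliographic records. The following is a minimal sketch, not the tooling used for this review: the function name `matches_query` is hypothetical, and it assumes case-insensitive substring matching over the combined title and abstract, so that "Emotion" also matches "emotional".

```python
# Keyword groups copied from the search string in the text.
EMOTION_TERMS = ("empathic", "emotion")
IMMERSIVE_TERMS = (
    "virtual reality",
    "augmented reality",
    "mixed reality",
    "extended reality",
)


def matches_query(title: str, abstract: str) -> bool:
    """Return True if the record satisfies (emotion term) AND (immersive term).

    Assumption: terms may appear in either the title or the abstract, and
    matching is a case-insensitive substring test.
    """
    text = f"{title} {abstract}".lower()
    has_emotion = any(term in text for term in EMOTION_TERMS)
    has_immersive = any(term in text for term in IMMERSIVE_TERMS)
    return has_emotion and has_immersive


if __name__ == "__main__":
    print(matches_query("Emotional responses in Virtual Reality", ""))
    print(matches_query("Virtual Reality locomotion", "We study walking."))
```

Substring matching is deliberately permissive here; a stricter variant could match whole words only, at the cost of missing inflected forms.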

The papers were reviewed in full by two authors independently, following the PRISMA approach. As shown in the PRISMA diagram (Figure 1), 467 records were excluded in the initial screening as they were not related to immersive technology or emotion/empathy-related research. We then excluded 12 more papers as duplicates, leaving 202 papers. Among them, 193 papers were chosen for screening in full. Following the approach of Dey et al.[18], we then applied minimum citation criteria to ensure that we were reviewing papers that had an impact. For papers from 2000-2018, we required an Average Citation Count of greater than 2.0 per year, while for papers from 2019-2024, we removed the minimum citation requirement. We then excluded posters, workshop papers, and extended abstracts (EA) from the records, leaving 77 papers for full review. Among these 77 papers, 35 study emotion elicitation, 19 study emotion recognition, 19 address fostering empathy, 1 covers both emotion recognition and empathy building, 1 focuses on recognition and classification, 1 addresses emotion representation, and 1 examines the influence of emotion on cybersickness in immersive environments. After multiple rounds of filtering, the final selection of 77 papers spans roughly the last decade, specifically from 2013 to 2024.
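The screening arithmetic and the citation criterion described above can be sketched as follows. This is an illustrative sketch under stated assumptions, not the authors' procedure: the `Paper` record, the function names, and `REFERENCE_YEAR` (the year in which citation counts are read) are hypothetical, and the publication year is counted as one full year when averaging.

```python
from dataclasses import dataclass

REFERENCE_YEAR = 2024  # assumption: year in which citation counts were collected


@dataclass
class Paper:
    title: str
    year: int
    citations: int


def average_citations_per_year(paper: Paper, reference_year: int = REFERENCE_YEAR) -> float:
    # Assumption: count the publication year itself, which also avoids division by zero.
    years_in_print = max(reference_year - paper.year + 1, 1)
    return paper.citations / years_in_print


def passes_citation_criterion(paper: Paper) -> bool:
    # Papers from 2019-2024 have no minimum citation requirement.
    if paper.year >= 2019:
        return True
    # Papers from 2000-2018 need strictly more than 2.0 citations per year.
    return average_citations_per_year(paper) > 2.0


# PRISMA flow counts reported in the text.
identified = 586 + 95                   # IEEE/ACM databases + Google Scholar
after_screening = identified - 467      # records excluded as unrelated
after_duplicates = after_screening - 12 # duplicates removed

if __name__ == "__main__":
    print(identified, after_screening, after_duplicates)
```

With these definitions, the reported counts are internally consistent: 681 records identified, 214 after initial screening, and 202 after duplicate removal.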

Figure 1. PRISMA approach for selecting relevant papers from 2000-2024. PRISMA: preferred reporting items for systematic reviews and meta-analyses; VR: Virtual Reality; AR: Augmented Reality.

We categorized the collected data to capture the diverse facets of empathic computing in immersive technologies, reflecting both the technical and psychological dimensions. This multi-dimensional approach ensures that the review holistically examines key areas of immersive empathic computing research. The categories used are (1) Applications and interdisciplinary approach, (2) Empathy design strategies and assistive technologies, (3) Framework of emotions in immersive environments, and (4) Perception/Cognition in immersive environments. A summary of the 77 reviewed articles is provided in Table 1.

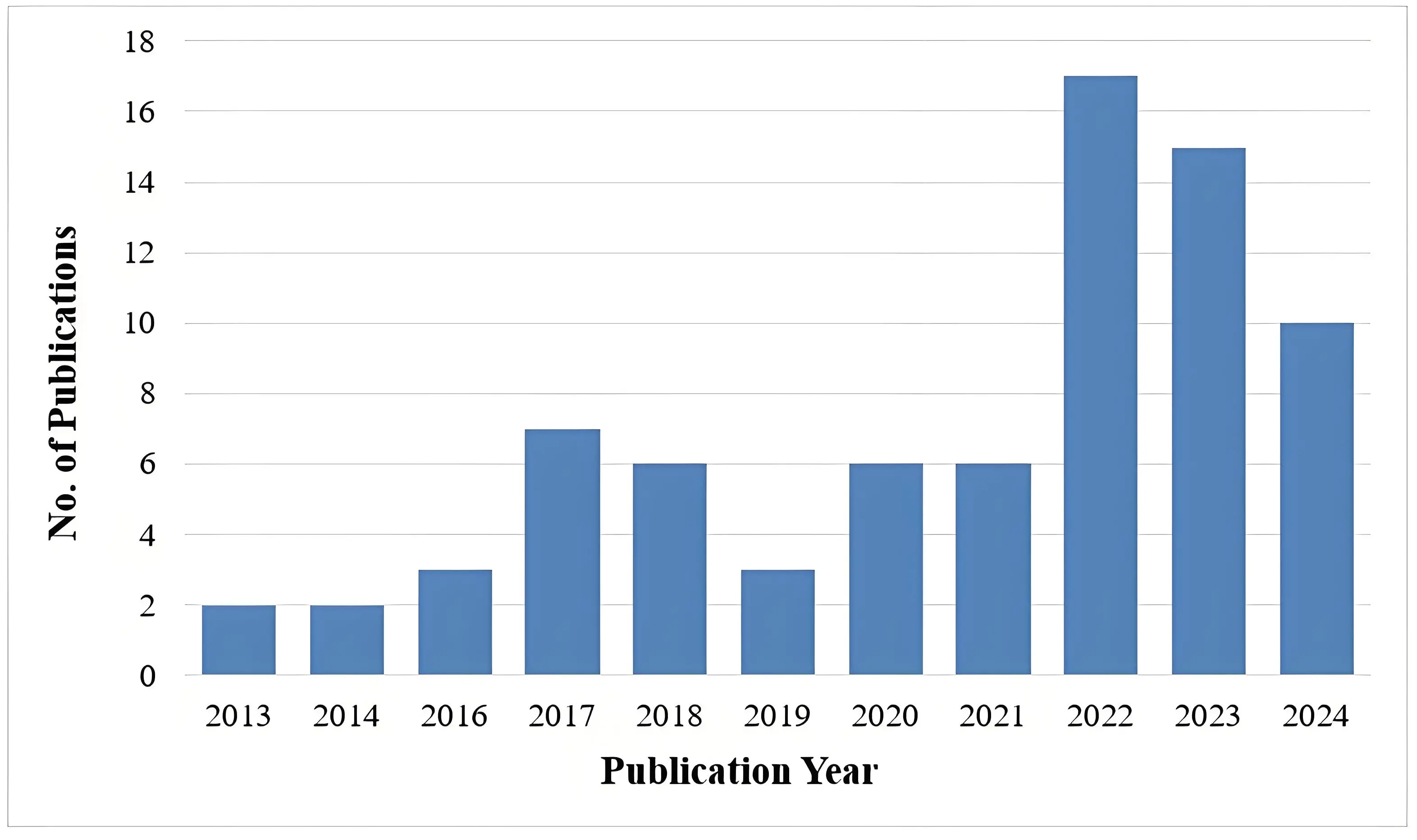

| Published Year | 2013 | 2014 | 2016 | 2017 | 2018 | 2019 | 2020 | 2021 | 2022 | 2023 | 2024 | Total |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Number of papers | 2 | 2 | 3 | 7 | 6 | 3 | 6 | 6 | 17 | 15 | 10 | 77 |
| Application of Immersive Emotion | | | | | | | | | | | | |
| Healthcare and Therapy | 2 | 0 | 1 | 1 | 2 | 2 | 2 | 1 | 8 | 2 | 1 | 22 |
| Education and Training | 2 | 1 | 1 | 2 | 3 | 3 | 2 | 3 | 12 | 8 | 3 | 40 |
| Entertainment and Gaming | 0 | 1 | 0 | 6 | 3 | 1 | 5 | 2 | 5 | 7 | 4 | 34 |
| Remote Collaboration | 0 | 0 | 1 | 1 | 0 | 0 | 2 | 1 | 1 | 4 | 0 | 10 |
| Empathy and Emotion Design Strategies in Immersive Environments | | | | | | | | | | | | |
| Emotion Elicitation | 0 | 0 | 0 | 1 | 2 | 0 | 1 | 1 | 8 | 5 | 3 | 21 |
| Emotion Recognition | 0 | 0 | 1 | 2 | 0 | 0 | 1 | 0 | 3 | 2 | 4 | 13 |
| Fostering Empathy | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 2 | 7 | 3 | 12 |
| Representation | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 0 | 0 | 0 | 1 |
| Perception and Cognition | | | | | | | | | | | | |
| Perception | 1 | 0 | 2 | 7 | 4 | 0 | 5 | 4 | 9 | 10 | 3 | 45 |
| Cognition | 1 | 2 | 1 | 0 | 0 | 3 | 1 | 2 | 6 | 5 | 6 | 27 |
| Perception and Cognition | 0 | 0 | 0 | 0 | 2 | 0 | 0 | 0 | 2 | 0 | 1 | 5 |

3. Applications and Interdisciplinary Approach

Research in empathic computing using immersive technologies has grown continuously over the last 10 years, with a higher volume of research published in the last three years (Figure 2). This research can be categorized into the application areas of healthcare, education, training, entertainment, and remote collaboration.

3.1 Healthcare and therapy

Immersive environments can be used for emotional regulation, exposure therapy, and treatment of conditions such as post-traumatic stress disorder (PTSD), anxiety disorders, and phobias. These applications allow for controlled simulations of stress-inducing scenarios that are used for therapeutic purposes. Guarese et al. ran an experiment in which sighted, blindfolded participants used various sonification methods to locate and place objects in a simulated kitchen setting[19]. Feedback from the blind and visually impaired (BVI) community was collected to assess the perceived benefits of such empathy-inducing experiences, with results indicating the potential for new assistive technologies. Their findings indicate that immersive AR experiences could effectively increase empathy toward the BVI community.

Immersive empathic computing is well suited to therapeutic applications as it helps measure stress levels and emotional states, and can help increase positive emotions and release negative ones[20]. For example, Dietz et al. showed how to maintain a dynamic balance in stressful situations to improve postural control and reduce the risk of injury[21]. They evaluated the stress response using physiological measures of stress and workload and used a ghost avatar to help participants concentrate on tasks. The outcomes of the studies conducted by Luong et al. and Weiß et al.[22,23] can be applied to healthcare-related training[19], rehabilitation, psychological well-being[24], and therapeutic applications.

3.2 Education

Education is another prominent application area in which immersive technologies are used to enhance emotional engagement and empathy. Using perspective-taking and embodied learning in a narrative-based VR application, Ponto et al. demonstrated how immersive technologies can promote education and informal learning[25]. Using a VR experience with visuomotor congruence, they allowed users to experience life as an Adélie penguin, and assessed the experience with the Dispositional Empathy with Nature scale, an avatar embodiment questionnaire, and open-ended questions on informal learning. Another study conducted by Gu et al. involved 234 middle and high school students in an experiment to determine whether a role-exchange strategy can enhance empathy, moral judgment, and a commitment to stop bullying[26]. They found that experiencing both perspectives (bully and victim) helped participants develop a more comprehensive understanding of the impacts of bullying and fostered a more profound emotional and moral response. Schaper et al. ran an experimental case study focusing on enhancing students’ social and emotional learning (SEL) through projective AR in an educational virtual heritage context[27]. Bian et al. showed that a positive emotional feedback strategy led to a better learning experience and performance, and that personality type had a significant effect on learning[28].

3.3 Training

Virtual training aims to simulate real-world scenarios to practice and improve specific skills and competencies. Li et al. conducted an experiment showing that the immersive nature of VR and the ability to connect with the experience emotionally play critical roles in the effectiveness of VR training[29]. Nam et al. studied training in a pediatric nursing application in which the emotions (happiness, satisfaction, neutrality, anxiety, anger) of a responsive virtual parent are dynamically updated based on the trainee’s actions[30]. The study also showed enhanced perceived realism, learning efficacy, and usefulness of the VR simulation compared to a static emotional model.

3.4 Entertainment and gaming

Video games, movies, music, and virtual concerts are using immersive technologies, especially VR, to provide more engaging and emotionally resonant experiences. Ito et al. investigated how multisensory audio-visual stimuli in virtual environments (indoor and outdoor) affected the perception of wind comfort and emotional responses[31]. Shijo et al. investigated the emotions expressed by the posture of “Kemo-mimi”, animal-like ears on humanoid characters[32]. Kors et al. developed a VR game, “Permanent”, to foster empathy; coding of empathic states revealed that participants reported other-oriented empathic states more than twice as often as self-oriented empathic states[33]. Peng et al. used the idea of the emotional challenge to understand the player’s experience[34]. The work by Bevan et al. spans both education and entertainment: a series of ethnographic and experimental studies was conducted to explore VR non-fiction for real-life storytelling to evoke empathy[35]. Other studies apply immersive empathic research to entertainment, including interactive gaming, virtual embodiment, role-playing, music, and art[36-39].

3.5 Remote collaboration

Although interlaced with other major application fields, collaborative AR/VR applications also support emotion and empathy research. Choudhary et al. investigated how mismatched facial and vocal emotional expressions from virtual avatars in VR impact users’ interpretations of emotions and consequent social influence[40]. Jing et al. developed a bi-directional emotion-sharing system using nine visual representations to enhance the communication of emotions in virtual spaces[41]. They explored how interacting gaze behaviors, hand-pointing gestures, and heart rate visualizations affect remote collaboration in empathic MR environments. Semertzidis et al. used MR to improve interpersonal communication of emotions using a neuro-responsive system called “Neo-Noumena”[42]. Finally, in the study conducted by Masai et al., empathy glasses provided empathic visual information exchanges between remote collaborators[43].

4. Empathy Design Strategies and Assistive Technologies

Immersive empathy research encompasses (1) Emotion Elicitation, (2) Emotion Recognition, and (3) Fostering Empathy. These components form a comprehensive empathy design strategy by providing a structured approach to provoke, measure, enhance, and accurately depict emotional experiences in immersive environments. We discuss each of the areas in this section.

4.1 Emotion elicitation

4.1.1 Illusion and engagement

One of the most effective ways to elicit empathy is by placing users in the “shoes” of others. In immersive platforms, this is achieved through embodiment, where users inhabit a virtual avatar representing someone else’s perspective. Multiple users can also share the same avatar body. Embodiment can be particularly powerful when the avatar differs from the user in terms of race, gender, age, or ability. Schaper et al. identified embodiment interactions for enhancing social and emotional learning through projective AR in an educational virtual heritage context[27]. VR and AR can tell compelling stories where users interact with characters and influence the narrative[28]. Engaging narratives that focus on social issues or personal experiences can evoke empathetic responses from users[23,35].

4.1.2 Environmental immersion

4.1.2.1 Simulations

Simulating real-world situations in which users experience the challenges or adversities faced by others can help elicit empathy. This could include scenarios like being a refugee, experiencing discrimination, or living with disabilities, as per the findings from Guarese et al.[44]. However, a delicate balance is required when using simulations to evoke empathy. There is a risk that such simulations could lead to patronizing attitudes rather than genuine understanding and empathy[45-47]. Ito et al. ran a simulation of natural environments with accompanying natural wind sounds that led to a reduction in mental stress and improvements in emotional responses[31]. In another study by Dietz et al., emotion was elicited through VR simulation along with other physiological stimuli[21]. Nam et al. illustrated the usefulness of the VR simulation compared to a static emotional model[30]. Additionally, Wang et al. developed a VR simulation where users interacted with virtual humans (VHs) under different emotional and language settings[48]. Benvegnù et al. revealed that participants experienced a high sense of presence in the simulation and reacted differently depending on driving mode, showing greater arousal and more negative valence[49].

4.1.2.2 360-degree video

Immersive platforms can use 360-degree videos to present narratives from another person’s viewpoint, allowing users to see and hear what that person does, thereby promoting empathy through perspective-taking. Bindman et al. compared 360 immersion in high-fidelity (industry VR headset) and low-fidelity (smartphone) headsets in terms of engagement and empathy[36]. Tse et al. used 360 immersive mobile VR to arouse empathy in a fire rescue scenario. They focused on the immersive experience of 360 videos to elicit emotional arousal[50].

4.1.2.3 Sensory feedback

Incorporating physical sensations like haptic feedback or environmental effects (e.g., temperature changes) can enhance the sense of presence and make experiences more relatable, thereby eliciting empathy. A positive emotional feedback strategy led to a better learning experience and performance[28]. Guarese et al. explored the integration of audio cues and visual feedback through AR and VR devices, aiming to enhance the guidance experience and provide a more intuitive understanding of the physical space for both users[44]. Kono et al. developed a novel system called ‘in-pulse’ for exploring the potential of bringing emotional feedback to users[51]. Dey et al. showed that displaying heart rate feedback tended to enhance the observer’s understanding of the active player’s emotional state[37]. Another study by Guarese et al. showed that feedback from the blind and visually impaired (BVI) community could be used to assess the perceived benefits of empathy-inducing experiences[19].

4.2 Emotion recognition

4.2.1 Biometric monitoring

4.2.1.1 Physiological data analysis

The most common physiological cues in empathy research are heart rate, electrodermal activity (EDA), and electroencephalography (EEG). Heart rate (HR) and heart rate variability (HRV) are measured to indicate emotional arousal and empathetic engagement. Ito et al. measured HRV in response to multisensory audio-visual stimuli in virtual environments (indoor and outdoor) to determine how these stimuli affect the perception of wind comfort and emotional responses[31]. The study conducted by Jing et al. used HR as a visual stimulus to affect remote collaborations in empathic MR environments[41]. Also, Valente et al. used both HRV and EDA in their experiment to recognize participants’ emotional states and visualize them through the AR system[52]. Voigt-Antons et al. used HR and HRV while eliciting emotions through repeated play of horror games[53]. Lee et al. visualized HR and respiration rate data in a social VR experience to understand emotion[54]. In another study by Prachyabrued et al., HR and Galvanic Skin Response (GSR) were measured to develop a story-driven virtual environment for stress inoculation training in the context of emergency healthcare personnel[55]. Dey et al. used the Empatica device[56] to monitor HR and other physiological signals and to investigate the effects of sharing real-time HR data between players in collaborative VR games, and whether it enhanced emotional connection and gameplay experience[37]. Li et al. investigated the effectiveness of using VR as a tool for emotion induction by assessing both subjective responses (Self-Assessment Manikin (SAM) and In-group Presence Questionnaire) and neurophysiological responses (EEG data) in participants[57]. Semertzidis et al. developed a neuroresponsive system called “Neo-Noumena” combining EEG data analysis with procedural content generation to visualize emotions as dynamic fractal patterns in an MR environment[42]. Hou et al. used the Emotiv EEG sensor to develop a haptic-based serious game for post-stroke rehabilitation[58].
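To make the HR and HRV measures above concrete, the following minimal sketch computes mean heart rate and two widely used time-domain HRV statistics, SDNN and RMSSD, from a list of RR intervals in milliseconds. It is illustrative only: the reviewed studies do not necessarily use these particular metrics, and the function names and sample values are hypothetical.

```python
import math
from statistics import mean, stdev


def mean_heart_rate_bpm(rr_ms):
    """Mean heart rate in beats per minute from RR intervals in milliseconds."""
    return 60000.0 / mean(rr_ms)


def sdnn_ms(rr_ms):
    """SDNN: sample standard deviation of the RR intervals."""
    return stdev(rr_ms)


def rmssd_ms(rr_ms):
    """RMSSD: root mean square of successive RR-interval differences."""
    diffs = [later - earlier for earlier, later in zip(rr_ms, rr_ms[1:])]
    return math.sqrt(mean([d * d for d in diffs]))


if __name__ == "__main__":
    rr = [800, 810, 790, 800]  # hypothetical RR intervals in ms
    print(mean_heart_rate_bpm(rr), sdnn_ms(rr), rmssd_ms(rr))
```

In practice, RR intervals from wearable sensors usually need artifact removal before such statistics are computed; that step is omitted here.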

4.2.1.2 Eye tracking systems

Several studies implement eye-tracking technology to determine emotional focus and engagement, aiding empathy recognition through eye movements and attention. These studies mainly measure eye movements, gaze direction, and pupil dilation. For example, Jing et al. used eye-tracking data to investigate user perceptions of visual designs combining communication cues with heart rate biofeedback[41]. The study conducted by Kourtesis et al. measured changes in pupil size to investigate the influence of emotion on cybersickness[59]. In their experiment, the authors[22] examined respiratory and pupillary responses to states such as fear, frustration, and insight, and found differences between fear and frustration in pupillary, respiratory, and EDA responses. Wang et al. assessed how native and non-native language communication affects the non-verbal (eye gaze) behaviors of users interacting with emotionally expressive VH crowds in VR[48]. Jhan et al. explored how the presence of small talk affected users’ non-verbal behaviors, such as gaze and interpersonal distance[60]. Volonte et al. used eye gaze as a non-verbal cue to explore how language familiarity affects emotional responses in VR interactions with a crowd of digital humans displaying various emotions[61]. Another study by Volonte et al. also used participants’ eye gaze as a non-verbal cue while they interacted with VH crowds exhibiting different emotional expressions[62]. Masai et al. used eye-tracking data for empathic visual information exchanges between remote collaborators[43].

4.2.1.3 Facial expression analysis

Immersive technologies are effective mediums for recognizing emotions and empathetic reactions based on facial movements. Pan et al. used avatar facial expressions with audio stimuli to elicit specific emotions[63]. Wang et al. developed a database for reconstructing the emotion reflected in facial expressions[64]. Suzuki et al. proposed a method to map facial expressions from a user wearing a Head-Mounted Device (HMD) to a virtual avatar using embedded photo-reflective sensors[65]. They also addressed the challenge of facial expression recognition when significant parts of the face are occluded by an HMD. Bekele et al. also utilized facial expression recognition using avatars for measuring arousal[66]. Choudhary et al. investigated how mismatched facial and vocal emotional expressions from virtual avatars in VR impact users’ interpretations of emotions and consequent social influence[40]. Lohesara et al. developed a novel dataset for Extended Reality teleconferencing, facial expression recognition, and volumetric video[67]. It included specific applications such as facial expression classification, HMD removal, and visual quality assessment of 3D models. The study by Nam et al. integrated multimodal inputs, including facial expressions, to analyze the emotions of the virtual parent, which are dynamically updated based on the trainee’s actions[30]. Wang et al. used predefined emotional expressions for VHs to capture real human emotional responses[48]. Other studies[60-62] also used facial expressions with other verbal and non-verbal cues for emotion elicitation. Baloup et al. explored non-isomorphic methods that use input devices to control avatars’ facial expressions in VR environments[68]. In another study, Choudhary et al. utilized emotional facial expressions (Happy, Sad, Angry, Skeptic) while manipulating avatar head size in VR[69]. Kono et al. also developed a novel system exploring the potential of bringing emotional feedback to users through EMS-induced facial expressions[51]. The experiment by Bönsch et al. provided virtual agents with facial expressions to study how personal space is affected by virtual agents’ emotions[70]. Waltemate et al. also used avatar facial expressions for emotional arousal in their study[71]. In another study by Norouzi et al., facial expressions were analyzed when using a virtual pet (or non-humanoid robot) to study social emotion in AR[72]. Though not a direct facial expression analysis, the study by Shijo et al. explored how different postures of the ears can convey various emotional states[32].

4.2.2 Behavioral analysis

Several studies have used body gesture recognition and analysis to infer emotion and empathy. Such systems track body movements and gestures and analyze non-verbal cues related to empathy and emotion. The study by Ponto et al. used hand tracking, as part of upper-body gesture recognition, to foster empathy by leveraging embodied learning and perspective-taking in a narrative-based VR application[25]. Another study used hand gesture analysis along with HR signals as part of emotion elicitation[53]. Nam et al. also incorporated hand gestures along with facial expressions for emotion recognition[30]. Wang et al. and Jhan et al. utilized body postures in general, along with other verbal and non-verbal cues, for emotion elicitation in their respective studies[48,60]. Volonte et al. also incorporated hand gestures and head movement as non-verbal cues for emotion elicitation[61]. In another study, Volonte et al. used several non-verbal cues to elicit emotion, tracking body postures, head movement, and hand pointing[62]. Finally, Bian et al. conducted a physiological experiment involving two personality types and two emotional feedback strategies in which they utilized upper body movement[28].

4.3 Fostering Empathy

4.3.1 Repeated exposure

Repeated exposure to empathetic scenarios in immersive environments can enhance empathetic abilities over time. Iterative experiences with varying scenarios can deepen understanding and emotional connection. Immersive platforms can be used for empathy training, such as through role-playing exercises or guided interventions, which help foster empathy by building emotional intelligence and perspective-taking skills. The role-exchange method proves more effective in modifying participants’ attitudes towards bullying compared to traditional role-playing techniques that do not involve switching perspectives[26]. Providing feedback on users’ empathetic behaviors or emotional responses, such as through virtual coaches or interactive feedback, can help foster and reinforce empathy.

4.3.2 Social interaction

Creating shared or collaborative experiences in immersive platforms, where users engage with others empathetically, can foster empathy through social connection and shared understanding. Moustafa et al. designed an exploratory study to understand how social groups use VR for collaborative activities[73]. Dey et al. developed collaborative VR games to enhance emotional connection and gameplay experience among players[37]. Guarese et al. proposed a novel immersive system for a collaborative tele-guidance experience between sighted and visually impaired individuals[44]. Gupta et al. also promoted remote collaborations in empathic MR environments in their study[74]. Enhancing social presence, that is, the sense of being with others in a virtual space, and accounting for personal space and interpersonal distance in social interaction can foster empathy by making interactions more realistic and emotionally engaging. This can be achieved through realistic avatars, voice chat, or shared tasks[60,70,73,75].

5. Emotion Models in Immersive Environments

In immersive technologies, emotion models provide frameworks for understanding and representing emotional states, which are essential for creating and interpreting emotional responses in AR/VR environments. Emotion models, such as dimensional or discrete models, provide insight into how users experience and interpret emotions in immersive environments. Understanding various emotion models lays the foundation for future research and innovation in empathic computing. These models play a pivotal role in empathic computing by facilitating emotion recognition, guiding emotion elicitation, supporting real-time adaptation in immersive environments, and informing the design of experiences that foster empathy. For instance, Ekman’s six basic emotions (happiness, sadness, fear, anger, surprise, and disgust) offer a starting point for designing algorithms for facial emotion recognition.

5.1 Discrete emotion models

Discrete models are typically based on specific discrete emotions, e.g. Ekman’s Six Basic Emotions[76]. These models categorize emotions into distinct types, each associated with unique facial expressions and physiological responses. In our review, one study used Ekman’s model to represent seven discrete emotions (Enjoyment, Surprise, Contempt, Sadness, Fear, Disgust, Anger) to evaluate, with the help of an avatar, the emotion recognition performance of participants with autism spectrum disorder (ASD)[66].

5.2 Dimensional emotion models

Dimensional models such as the Circumplex Model of Affect or Valence-Arousal-Dominance (VAD) model categorize emotions based on continuous dimensions[77,78]. Dimensional models can be sub-categorized into the following:

5.2.1 2D emotion models

The Circumplex Model of Affect[79] is a popular 2D model used to gauge emotional responses based on valence (positive or negative) and arousal (activation or deactivation). This model has been used in immersive environments to monitor and influence users’ emotional states, enhancing the effectiveness of immersive experiences. In our review, most 2D model types referred to the valence-arousal (VA) model to represent continuous emotions. Valente et al. conducted a study where the participants’ emotional states were visualized through the AR system based on physiological signals (HRV and EDA), and the recognized emotions were mapped into the VA model[52]. Another study also utilized the VA model while inducing emotional states, measured by subjective scales and heart rate parameters, using virtual environments[53]. While most of the studies[31,57,67,80] used the VA model to represent various emotions, other distinct types of 2D models can be observed in our review. Moustafa et al. used the Geneva Emotion Wheel (GEW) model[81] to assess emotional states in their study[73]. Although the GEW model is related to the VA model, these are two distinct models, with the GEW having control instead of arousal to represent the high-low dimension. Another study addressed valence-dominance in 2D to represent the impact of personalized avatars on body ownership, presence, and dominance[71]. Jicol et al. conducted a user study where they utilized the TAP-fear model to address different types or stages of fear in 2D[82]. TAP stands for Threat, Anxiety, and Panic. These components are aligned in two dimensions: one related to the type of fear and the other related to the intensity or acuteness of the fear response. Bian et al. addressed a unique type of 2D model based on Eysenck’s personality theory[28]. Eysenck’s theory describes personality along the dimensions of Extraversion (E), Neuroticism (N), and Psychoticism (P); combining the first two dimensions yields four personality types: Melancholic (introverted and neurotic), Choleric (extroverted and neurotic), Phlegmatic (introverted and emotionally stable), and Sanguine (extroverted and emotionally stable).
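As an illustration only, the four-type classification described above can be sketched in Python. The function name `eysenck_temperament`, the numeric score scale, and the `midpoint` threshold are assumptions made for this sketch; they are not taken from Bian et al.[28] or from any standardized personality instrument.

```python
def eysenck_temperament(extraversion: float, neuroticism: float,
                        midpoint: float = 0.0) -> str:
    """Map two trait scores to one of the four classical types.

    Assumption: both scores share one scale, and values above `midpoint`
    mean "extroverted" / "neurotic" respectively (hypothetical threshold).
    """
    extroverted = extraversion > midpoint
    neurotic = neuroticism > midpoint
    if neurotic:
        # Neurotic half of the plane
        return "Choleric" if extroverted else "Melancholic"
    # Emotionally stable half of the plane
    return "Sanguine" if extroverted else "Phlegmatic"


if __name__ == "__main__":
    # Hypothetical scores, chosen only to exercise each quadrant
    for e, n in [(-1, 1), (1, 1), (-1, -1), (1, -1)]:
        print(e, n, eysenck_temperament(e, n))
```

The sketch treats each dimension as a simple binary split, which mirrors the textual description; a real assessment would likely use graded scores rather than a hard threshold.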

5.2.2 3D Emotion models

The VAD model adds one more dimension, called Dominance, to the 2D Valence-Arousal model. This model has been applied in AR/VR environments to capture complex emotional responses and tailor experiences accordingly. Freeling et al. addressed the VAD model in their study where a higher sense of embodiment impacted emotion both negatively and positively[83]. Hou et al. addressed the VAD model and showed that a game could be used by patients recovering from stroke, even at home and without access to a nurse[58]. The Pleasure-Arousal-Dominance (PAD) model[84] provides a more nuanced understanding of emotions and uses pleasure in place of valence in the 3D VAD model. Shijo et al.[32] utilized the PAD model to investigate the emotions expressed by the posture of “Kemo-mimi”, which refers to animal-like ears on humanoid characters. The Happy, Relaxed, Glad, Excited, Delighted, Serene, Sleepy, Astonished, Neutral, Tired, Angry, Disgustful, Sad, Afraid, Frustrated, Bored, and Miserable emotions were categorized using this model. Another study[68] addressed Plutchik’s Wheel of Emotions[85] to explore non-isomorphic methods that use input devices to control avatars’ facial expressions in VR environments.
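The relationship between the 2D and 3D dimensional models can be made concrete with a minimal Python sketch. It assumes that each dimension is normalized to the range [-1, 1] with 0 as the neutral point; the names `VADPoint`, `to_va`, and `va_quadrant`, as well as the quadrant labels, are hypothetical and do not come from any of the reviewed studies.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class VADPoint:
    """An emotional state as a point in valence-arousal-dominance space.

    Assumption: each value is normalized to [-1, 1], with 0 as neutral.
    """
    valence: float
    arousal: float
    dominance: float

    def to_va(self) -> Tuple[float, float]:
        # Dropping dominance projects the 3D point onto the 2D VA plane
        return (self.valence, self.arousal)


def va_quadrant(valence: float, arousal: float) -> str:
    """Name the VA quadrant of a point (labels are illustrative only)."""
    v = "positive" if valence >= 0 else "negative"
    a = "high-arousal" if arousal >= 0 else "low-arousal"
    return f"{a} {v}"


if __name__ == "__main__":
    state = VADPoint(valence=0.6, arousal=-0.4, dominance=0.2)
    print(va_quadrant(*state.to_va()))
```

Keeping dominance as a separate field shows why the 3D model can distinguish states that fall in the same VA quadrant.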

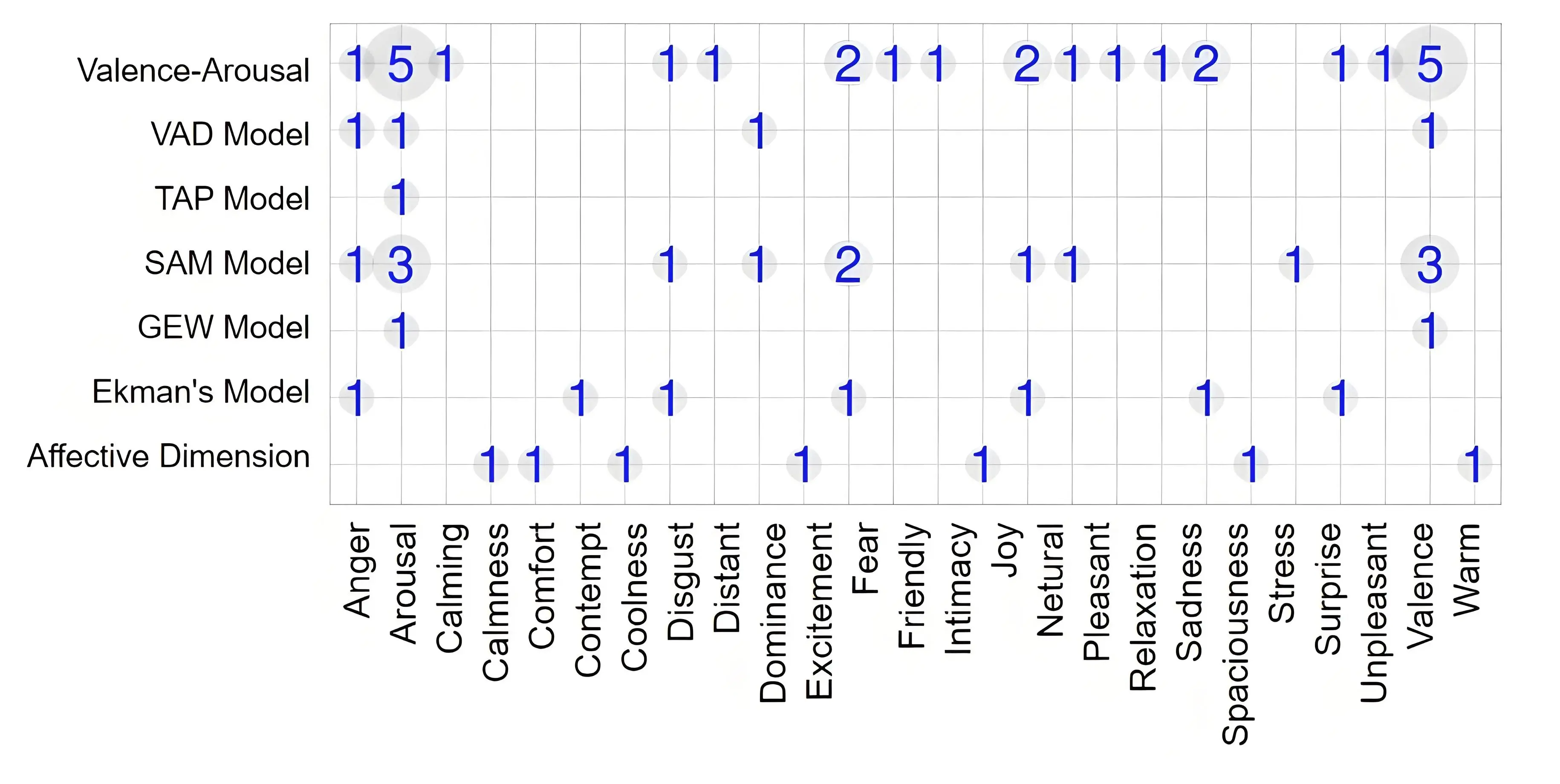

Overall, the immersive empathic studies on eliciting and recognizing emotions and fostering empathy utilized different kinds of emotion models to represent emotional states accurately. Figure 3 illustrates the emotion models mentioned in the studies and their correspondence with various human emotions. This figure provides a visual summary of how different emotion models map to specific human emotions in the selected papers over the years. The numbers represent the frequency of a specific emotion being addressed under an individual emotion model.

Figure 3. Emotion Models and Emotion Types addressed in the selected publications over the years. VAD: Valence-Arousal-Dominance; TAP: Threat, Anxiety, and Panic; SAM: Self-Assessment Manikin; GEW: Geneva Emotion Wheel.

6. Perception/Cognition in Immersive Environments

Perception and cognition are integral to the development and effectiveness of immersive empathic computing research, as they contribute to enhancing empathy and emotional engagement. Research in these domains uses VR/AR technologies to provide seamless immersive empathic experiences by leveraging various sensory inputs and managing cognitive processes.

6.1 Perception

6.1.1 Visual perception

Immersive environments mostly rely on visual cues to create a sense of presence and realism. High-resolution displays and realistic rendering of virtual environments are crucial for conveying contextual and emotional information accurately. Findings from the study conducted by Jicol et al.[86] show that visually sharing emotions leads to better emotional consensus and positive interdependent behaviors. Other authors also used the perception of visualized AR/VR environments for recognizing and fostering empathy[14,75].

6.1.2 Auditory perception

Sound design, including spatial audio, helps create immersive experiences. Auditory cues can enhance the perception of emotions and empathy by simulating realistic environments and interactions. Guarese et al.[19] integrated multi-sensory cues in an AR environment to foster empathy for visually impaired people and assessed the perceived benefits of such empathy-inducing experiences. Along with multi-sensory cues, they used various sonification methods to locate and place objects in a simulated kitchen setting. Guarese et al.[44] developed a novel AR/VR system that incorporates the innovative use of audio cues for navigation, reflecting the effectiveness of audio-based guidance over visual cues. Ito et al.[31] investigated how multisensory audio-visual stimuli in indoor and outdoor virtual environments affect the perception of wind comfort and emotional responses.

6.1.3 Embodied perception

6.1.3.1 Sense of presence and immersion level

Jicol et al. performed a systematic investigation of the relationship between VR and human factors in terms of the sense of presence[82]. Their findings show that visual realism readily induces fear and thus leads to higher presence in VR environments. Moreover, two studies[72,83] demonstrate how the sense of presence and embodiment influence human emotion in VR. In addition, another study by Jicol et al. examined the impact of emotion and agency on the sense of presence in VR[86].

6.1.3.2 Virtual avatar

Advanced systems use facial recognition technology to detect and respond to users’ emotions in real-time. One study used emotional voice puppetry, driving the virtual avatar’s facial expression with audio to express several discrete emotions[63]. Another study also utilized various facial expressions with “Kemo-mimi” postures to see what emotions participants perceived from different ear postures[32]. The gestures and body movements of the virtual avatar are also crucial for conveying emotions: Nam et al. utilized both speech and hand gestures to demonstrate that the dynamic emotional responses of the virtual parent enhance perceived realism[30].

6.1.4 Haptic feedback

Tactile sensations can be provided through haptic devices, allowing users to feel virtual objects or interactions, a direct measure of user perception. This feedback can enhance emotional engagement and empathy by making experiences more tangible. In the experiment conducted by Ju et al., seven participants created vibration patterns to represent four emotions, and the patterns were played back to them in a random order to see if they could recognize the intended emotions[80].

6.2 Cognition

6.2.1 Cognitive load management

Immersive environments can be overwhelming due to the richness of stimuli. Effective design strategies aim to manage cognitive load, ensuring users can process information without becoming overwhelmed. Kourtesis et al. examined the effects of cybersickness on cognitive motor skills and reading performance[59]. Kim et al. measured stress levels and emotional states, including increases in positive and decreases in negative emotions[20]. The study by Dietz et al. evaluated the stress response by using physiological measures of stress workload and user experience in a VR environment[21]. Weiß et al. implemented a virtual ICU patient scenario to learn about the effect of virtually replicated emotional stressors[23]. Prachyabrued et al. developed a story-driven virtual environment for stress inoculation training in a healthcare setting[55].

6.2.2 Attention and focus

Guiding user attention through design elements like lighting, sound, and movement helps users focus on key aspects of the experience, enhancing the understanding of emotional and empathic cues. Schaper et al. employed a multi-method analysis, including observations, questionnaires, and interviews, to assess emotional and educational impacts and to evaluate students’ engagement and learning, alongside a qualitative analysis focusing on their interactions, emotional responses, and understanding of historical contexts[27].

6.2.3 Empathy and perspective-taking

Immersive environments often involve role-playing scenarios where users can experience situations from different perspectives, thereby fostering empathy. Lowy et al. simulated the neurodivergent (ND) perspectives in neurotypical (NT)-structured environments to bridge the empathy gap and foster empathy between the two groups[87]. Gu et al. showed that experiencing both perspectives (bully and victim) helps participants develop a more comprehensive understanding of the impacts of bullying and fosters a more profound emotional and moral response[26]. The study by Jhan et al. also demonstrated how small talk influences more nuanced or complex emotions in virtual environments[60]. Gil et al. developed a 3D AR storybook to foster the empathic behavior of children through roleplaying, storytelling, and perspective-taking[39]. Moreover, Bian et al. showed that empathy is driven by 10 categories of cognitive perspectives: viewer role, point of view, visual composition, audio composition, gaze manipulation, evidence of embodiment, interaction, locomotion, interpersonal space, and the manipulation of time[28].

6.2.4 Neurocognitive aspects

The use of brain-computer interfaces to monitor and influence cognitive and emotional states in real-time relates to the neurocognitive aspect of empathic immersive research. Immersive environments can activate specific brain regions associated with empathetic processing. Hou et al. developed a haptic-based game for post-stroke rehabilitation where they utilized EEG signals[58]. Li et al. explored brain wave patterns associated with different emotional states, revealing cognitive processing of emotional stimuli[57]. Their paper draws on appraisal theory, which is central to understanding how participants cognitively interpret VR scenarios and subsequently experience emotions. However, this study is also related to perception, as the participants in the experiment are exposed to VR videos that are designed to elicit specific emotional responses based on their visual content. Moreover, the subjective measurement through SAM scales captures participants’ perceived arousal, valence, and dominance, which are direct indicators of their emotional perception of the VR stimuli. Although the study conducted by Semertzidis et al. is mostly related to auditory and visual perception, participants engaged in introspection and reflection on their emotional states to better understand their own and their partners’ emotions using this neuroresponsive system[42].

7. Discussion

7.1 Applications

The applications of immersive emotion research highlight the transformative potential of immersive technologies. Notable studies in immersive empathic computing showcase some major application areas of this research realm[88-91]. In healthcare, real-time emotion recognition enhances therapeutic outcomes through adaptive interventions. In this domain, we see the emerging trend of using VR/AR in exposure therapy for phobias, PTSD, and anxiety, and in rehabilitation for stroke patients. VR simulations are widely used to train healthcare professionals in empathic care by recreating the experiences of patients with conditions such as dementia and autism. In the education and training domains, immersive experiences foster empathy and understanding by engaging students with diverse perspectives and by allowing them to experience historical events or the lives of individuals from different backgrounds. Here we notice an upward trend of providing personalized feedback based on users’ emotional responses in various emotion recognition systems. The entertainment industry pushes the boundaries of emotional engagement with VR experiences that adapt to users’ emotions, creating personalized narratives. This domain is increasingly dominated by VR games and experiences, adaptive storytelling that evolves based on players’ emotional states, and experiences that raise awareness about social issues such as bullying. Finally, emotion recognition tools help maintain human connections and effective communication in remote collaboration. Remote collaboration is becoming more popular over time, facilitated by virtual meetings with emotion recognition systems that provide real-time feedback on participants’ emotional states, improving communication and understanding. Moreover, the integration of AI and personalization trends reflects the evolving sophistication of these technologies, promising more effective applications. These advancements emphasize the importance of continued research and innovation in immersive empathic computing to develop environments that entertain, educate, and foster deeper human connections.

7.2 Empathy design strategies

We have explored various strategies for emotion elicitation, recognition, and fostering empathy using immersive technologies that can manipulate emotional states, using a combination of verbal and non-verbal cues, as well as diverse stimuli. The analysis of emotion recognition techniques involving deep and recurrent neural networks[52,63,67] showcases the potential for future innovation in emotion classification and early emotion prediction. This could lead to the possibility of mitigating cybersickness[92], stress, and cognitive load.

7.3 Emotion models for measuring emotions

In our review, the majority of the papers used the VA model as the primary 2D model to map the emotions elicited or recognized in immersive empathic systems. However, some papers also introduced other 2D models, such as the TAP-fear model to map fear, the GEW model, which uses control instead of arousal, and the valence-dominance model. Furthermore, we see the use of 3D models in some of the studies. For example, two studies[58,83] used the VAD model, while one study[32] used the PAD model[84] and another[68] used Plutchik’s Wheel of Emotions[85]. Only one study[66] utilized Ekman’s discrete emotion model to represent emotions. This indicates a trend toward capturing a wider range of emotional states over time. The application of discrete emotion models, like Ekman’s or Plutchik’s, suggests an emerging interest in representing specific, distinct emotions within immersive environments. This diversity in emotion modeling reflects a broader trend toward tailoring emotional representations to specific applications and user experiences. Future research may continue to explore and develop context-specific emotion models that align with the unique characteristics and needs of different immersive environments. As empathic computing evolves, there may be an increased emphasis on hybrid models that combine both dimensional and discrete frameworks to offer even more comprehensive representations of human emotion.

7.4 Body postures for emotion representation: a new perspective

An intriguing aspect of emotion/empathy research using immersive technologies involves gesture recognition. While some studies have explored hand tracking[25,30,53], head movements[61,62], and upper body tracking[48,60] for recognizing emotions, few have investigated lower body postures, particularly leg patterns, to determine how they correlate with emotional states. This highlights a potential avenue for future research, specifically focusing on gait pattern analysis and its relationship with emotional states in immersive environments. Notably, some researchers have addressed this aspect of body behavior analysis within the context of immersive empathic domains[93-99]. However, rigorous empirical studies are needed to explore lower body behavior analysis for emotion recognition. Analyzing gait patterns in immersive environments could be valuable for applications such as rehabilitation, virtual therapy[98] and assisting individuals with ASD.

7.5 Research trend in perception/cognition

Over the last decade, significant progress has been made in understanding the role of perception and cognition in immersive empathic computing[89,100,101]. Recent research underscores the critical role of advanced sensory integration, visual and auditory enhancements, haptic feedback, and emotional cues in immersive empathic computing. Concurrently, optimizing cognitive load, managing attention, and leveraging neurocognitive insights have been pivotal in enhancing empathy and emotional engagement. These developments collectively advance the potential of immersive technologies in fostering empathy across diverse applications. Recent research highlights the importance of synchronizing multiple sensory inputs (visual, auditory, haptic) to enhance user presence and emotional engagement[14,80,86]. Advances in high-resolution displays, realistic rendering, and spatial audio have significantly improved immersion. These enhancements contribute to emotional engagement, with studies emphasizing first-person perspectives and dynamic audio for greater empathy. Haptic technology now offers sophisticated tactile feedback, enhancing emotional and empathic experiences in VR. In addition, incorporating real-time emotional cues and facial recognition enables more interactive and emotionally intelligent virtual agents. Regarding cognition, recent research shows that optimizing cognitive load through simplified interfaces and balanced sensory inputs sustains user engagement without overwhelming users[20,59]. Effective attention management, using visual and auditory cues, is crucial for directing user focus in immersive environments[27]. Moreover, VR’s potential to foster empathy by allowing users to experience different perspectives is well-addressed in recent research[26,60,87]. VR has also extended beyond human-to-human interaction by enabling deeper connections with the animal world, fostering empathy across species and affecting human perception and cognition. A recent VR simulation study designed a Virtual Reality Perspective-Taking (VRPT) system to foster empathy for stray animals and promote awareness and behavioral change toward animal welfare[102]. Finally, neuroimaging studies have provided insights into the brain’s response to immersive experiences, informing the design of environments that maximize cognitive and emotional impact[42,57,58,103].

7.6 Limitations

The systematic review method we adopted limits our study by applying strict inclusion criteria, thereby excluding relevant research that falls outside those criteria. The reliance on PRISMA-based selection criteria has contributed to the dominance of VR studies over AR/MR, potentially overlooking relevant but unpublished or industry-based findings. Furthermore, this systematic review is inherently constrained by its dependence on published literature up to a certain point in time, which may exclude ongoing advancements in immersive empathic computing. Future reviews can incorporate a broader range of sources, including gray literature and preprints, and explore alternative review methodologies that allow for a more dynamic synthesis of emerging trends. The dominance of dimensional emotion models and variability in methodologies across studies points to both a trend and a challenge: while these models provide flexibility, their prevalence may overlook applications that benefit from discrete emotion frameworks. Additionally, publication biases and methodological inconsistencies complicate direct comparisons across studies. Furthermore, the role of perception and cognition in terms of sensory integration, cognitive load management, the interplay among visual, auditory, and haptic modalities, as well as neurocognitive processes have been identified as pivotal factors that influence user engagement and emotional regulation. While significant progress has been made in understanding these processes within VR, future research should extend these insights to AR and MR, investigating areas such as peripheral awareness, implicit emotional processing, and the long-term cognitive effects of immersion.

8. Conclusion

This systematic review offers a comprehensive overview of empathic computing in immersive technologies, highlighting key research trends, evaluating the effectiveness of current approaches, and identifying opportunities for future exploration. Over the last two decades, research has increasingly focused on VR environments, revealing their potential for emotion elicitation, recognition, and empathy enhancement. However, we found that the articles selected through PRISMA mostly addressed VR rather than AR/MR; these latter areas remain insufficiently explored, which limits insights into their unique affordances. Future immersive empathic computing research is strongly encouraged to explore the potential of AR and MR, refine emotion models, and integrate hybrid frameworks for emotion representation. Advancements in multimodal emotion recognition and real-time feedback systems hold promise for more personalized empathic computing experiences. In addition, the correlation between lower body postures and emotion in immersive environments should be rigorously studied to bring out new aspects of body behavior research in this emerging field. This review not only identifies these challenges but also provides actionable insights to guide future research, fulfilling its aim to advance the understanding and application of empathic computing across immersive technologies.

Authors contribution

Jinan UA: Ideation, methodology, investigation, data analysis, data curation, visualization, writing original draft.

Jung S: Ideation, methodology, investigation, writing, review, editing, supervision.

Heidarikohol N: Data analysis, data curation, visualization, writing original draft.

Borst CW, Billinghurst M: Writing, review, editing, supervision.

All authors approved the final version of the manuscript.

Conflicts of interest

Mark Billinghurst is the Editor-in-Chief of Empathic Computing. Other authors declared that there are no conflicts of interest.

Ethical approval

Not applicable.

Consent to participate

Not applicable.

Consent for publication

Not applicable.

Funding

None.

Copyright

© The Author(s) 2025.

References

1. Clark AJ. Empathy and Alfred Adler: an integral perspective. J Individ Psychol. 2016;72(4):237-253.

2. Piumsomboon T, Lee Y, Lee GA, Dey A, Billinghurst M. Empathic mixed reality: sharing what you feel and interacting with what you see. In: 2017 International Symposium on Ubiquitous Virtual Reality (ISUVR); 2017 Jun 27-29; Nara, Japan. Piscataway: IEEE; 2017. p. 38-41. [DOI: 10.1109/ISUVR.2017.20]

3. Picard RW. Affective computing. Cambridge: The MIT Press; 1997.

4. Calvo RA, D’Mello S. Affect detection: an interdisciplinary review of models, methods, and their applications. IEEE Trans Affect Comput. 2010;1(1):18-37.

5. Bosch E, Bethge D, Klosterkamp M, Kosch T. Empathic technologies shaping innovative interaction: future directions of affective computing. In: NordiCHI '22: Nordic Human-Computer Interaction Conference; 2022 Oct 8-12; Aarhus, Denmark. New York: Association for Computing Machinery; 2022. p. 1-3.

6. Slater M, Usoh M, Steed A. Depth of presence in virtual environments. Presence: Teleoper Virtual Environ. 1994;3(2):130-144.

7. Bernhardt KA, Poltavski D, Petros T, Ferraro FR, Jorgenson T, Carlson C, et al. The effects of dynamic workload and experience on commercially available EEG cognitive state metrics in a high-fidelity air traffic control environment. Appl Ergon. 2019;77:83-91.

8. Scherer KR. What are emotions? And how can they be measured? Soc Sci Inf. 2005;44(4):695-729.

9. Nesenbergs K, Abolins V, Ormanis J, Mednis A. Use of augmented and virtual reality in remote higher education: a systematic umbrella review. Edu Sci. 2020;11(1):8.

10. Christoff N, Neshov NN, Tonchev K, Manolova A. Application of a 3D talking head as part of telecommunication AR, VR, MR system: systematic review. Electronics. 2023;12(23):4788.

11. Ens B, Lanir J, Tang A, Bateman S, Lee G, Piumsomboon T, et al. Revisiting collaboration through mixed reality: the evolution of groupware. Int J Hum Comput Stud. 2019;131:81-98.

12. Parekh P, Patel S, Patel N, Shah M. Systematic review and meta-analysis of augmented reality in medicine, retail, and games. Vis Comput Ind Biomed Art. 2020;3:21.

13. Schäfer A, Reis G, Stricker D. A survey on synchronous augmented, virtual, and mixed reality remote collaboration systems. ACM Comput Surv. 2022;55(6):1-27.

14. Turan Z, Karabey SC. The use of immersive technologies in distance education: a systematic review. Educ Inf Technol. 2023;28(12):16041-16064.

15. Bernal G, Hidalgo N, Russomanno C, Maes P. Galea: a physiological sensing system for behavioral research in virtual environments. In: 2022 IEEE Conference on Virtual Reality and 3D User Interfaces (VR); 2022 Mar 12-16; Christchurch, New Zealand. Piscataway: IEEE; 2022. p. 66-76.

16. Moher D, Liberati A, Tetzlaff J, Altman DG. Preferred reporting items for systematic reviews and meta-analyses: the PRISMA statement. Ann Intern Med. 2009;151(4):264-269.

17. Bailenson J. Experience on demand: what virtual reality is, how it works, and what it can do. New York: WW Norton & Company; 2018.

18. Dey A, Billinghurst M, Lindeman RW, Swan JE. A systematic review of 10 years of augmented reality usability studies: 2005 to 2014. Front Robot AI. 2018;5:37.

19. Guarese R, Pretty E, Fayek H, Zambetta F, van Schyndel R. Evoking empathy with visually impaired people through an augmented reality embodiment experience. In: 2023 IEEE Conference Virtual Reality and 3D User Interfaces (VR); 2023 Mar 25-29; Shanghai, China. Piscataway: IEEE; 2023. p. 184-193.

20. Kim I, Azimi E, Kazanzides P, Huang CM. Active engagement with virtual reality reduces stress and increases positive emotions. In: 2023 IEEE International Symposium on Mixed and Augmented Reality (ISMAR); 2023 Oct 16-20; Sydney, Australia. Piscataway: IEEE; 2023. p. 523-532.

21. Dietz D, Oechsner C, Ou C, Chiossi F, Sarto F, Mayer S, et al. Walk this beam: impact of different balance assistance strategies and height exposure on performance and physiological arousal in VR. In: Koike T, Koizumi N, Bruder G, Roth D, Takashima K, Hiraki T, Ban Y, Piovarci M, editors. Proceedings of the 28th ACM Symposium on Virtual Reality Software and Technology; 2022 Nov 29; Tsukuba, Japan. New York: Association for Computing Machinery; 2022. p. 1-12.

22. Luong T, Holz C. Characterizing physiological responses to fear, frustration, and insight in virtual reality. IEEE Trans Vis Comput Graph. 2022;28(11):3917-3927.

23. Weiß S, Busse S, Heuten W. Inducing emotional stress from the intensive care context using storytelling in VR. In: 2022 IEEE Conference on Virtual Reality and 3D User Interfaces (VR); 2022 Mar 12-16; Christchurch, New Zealand. Piscataway: IEEE; 2022. p. 196-204.

-

24. Wagener N, Niess J, Rogers Y, Schöning J. Mood worlds: a virtual environment for autonomous emotional expression. In: Barbosa S, Lampe C, Appert C, Shamma DA, Drucker S, Williamson J, Yatani K, editors. Proceedings of the 2022 CHI Conference on Human Factors in Computing Systems, 2022 Apr 29; New Orleans, USA. New York: Association for Computing Machinery; 2022. p. 1-16.[DOI]

-

25. Ponto K, Tredinnick R, Verbeke M, Kopp K, Swanson L, Gagnon D. et al. Waddle: using virtual penguin embodiment as a vehicle for empathy and informal learning. In: Proceedings of the 29th ACM Symposium on Virtual Reality Software and Technology, 2023 Oct 9-11; Christchurch, New Zealand; New York: Association for Computing Machinery; 2023. p. 1-2.[DOI]

-

26. Gu X, Li S, Yi K, Yang X, Liu H, Wang G. Role-exchange playing: an exploration of role-playing effects for anti-bullying in immersive virtual environments. IEEE Trans Vis Comput Graph. 2022;29(10):4215-4228.[DOI]

-

27. Schaper MM, Pares N. Enhancing students’ social and emotional learning in educational virtual heritage through projective augmented reality. In: Barbosa S, Lampe C, Appert C, Shamma DA, editors. CHI EA '22: Extended Abstracts of the 2022 CHI Conference on Human Factors in Computing Systems; 2022 Apr 29; New Orleans, USA. New York: Association for Computing Machinery; 2022. p. 1-9.[DOI]

-

28. Bian Y, Yang C, Guan D, Xiao S, Gao F, Shen C, et al. Effects of pedagogical agent’s personality and emotional feedback strategy on Chinese students’ learning experiences and performance: A study based on virtual Tai Chi training studio. In: Kaye J, Druin A, editors. Proceedings of the 2016 CHI conference on human factors in computing systems; 2016 May 7-12; California, USA. New York: Association for Computing Machinery; 2016. p. 433-444.[DOI]

-

29. Li C, Kon ALL, Ip HHS. Use virtual reality to enhance intercultural sensitivity: a randomised parallel longitudinal study. IEEE Trans Vis Comput Graph. 2022;28(11):3673-3683.[DOI]

30. Nam H, Kim C, Kim K, Yeo JY, Park J. An emotionally responsive virtual parent for pediatric nursing education: a framework for multimodal momentary and accumulated interventions. In: 2022 IEEE International Symposium on Mixed and Augmented Reality (ISMAR); 2022 Oct 17-21; Singapore, Singapore. Piscataway: IEEE; 2022. p. 365-374.[DOI]

31. Ito K, Hosoi J, Ban Y, Kikuchi T, Nakagawa K, Kitagawa H. Wind comfort and emotion can be changed by the cross-modal presentation of audio-visual stimuli of indoor and outdoor environments. In: 2023 IEEE Conference Virtual Reality and 3D User Interfaces (VR); 2023 Mar 25-29; Shanghai, China. Piscataway: IEEE; 2023. p. 215-225.[DOI]

32. Shijo R, Sakurai S, Hirota K, Nojima T. Research on the emotions expressed by the posture of Kemo-mimi. In: Koike T, Koizumi N, Bruder G, Roth D, Takashima K, Hiraki T, Ban Y, Piovarci M, editors. Proceedings of the 28th ACM Symposium on Virtual Reality Software and Technology; 2022 Nov 29; Tsukuba, Japan. New York: Association for Computing Machinery; 2022. p. 1-5.[DOI]

33. Kors MJ, van der Spek ED, Bopp JA, Millenaar K, van Teutem RL, Ferri G, et al. The curious case of the transdiegetic cow, or a mission to foster other-oriented empathy through virtual reality. In: Proceedings of the 2020 CHI conference on human factors in computing systems; 2020 Apr 25-30; Honolulu, USA. New York: Association for Computing Machinery; 2020. p. 1-13.[DOI]

34. Peng X, Huang J, Denisova A, Chen H, Tian F, Wang H. A palette of deepened emotions: exploring emotional challenge in virtual reality games. In: Bernhaupt R, Mueller F, Verweij D, Andres J, editors. Proceedings of the 2020 CHI conference on human factors in computing systems; 2020 Apr 25-30; Honolulu, USA. New York: Association for Computing Machinery; 2020. p. 1-13.[DOI]

35. Bevan C, Green DP, Farmer H, Rose M, Cater K, Fraser DS, et al. Behind the curtain of the “ultimate empathy machine” on the composition of virtual reality nonfiction experiences. In: Brewster S, Fitzpatrick G, editors. Proceedings of the 2019 CHI conference on human factors in computing systems; 2019 May 4-9; Scotland, UK. New York: Association for Computing Machinery; 2019. p. 1-12.[DOI]

36. Bindman SW, Castaneda LM, Scanlon M, Cechony A. Am I a bunny? The impact of high and low immersion platforms and viewers’ perceptions of role on presence, narrative engagement, and empathy during an animated 360 video. In: Mandryk R, Hancock M, editors. Proceedings of the 2018 CHI conference on human factors in computing systems; 2018 Apr 21-26; Montreal, Canada. New York: Association for Computing Machinery; 2018. p. 1-11.[DOI]

37. Dey A, Piumsomboon T, Lee Y, Billinghurst M. Effects of sharing physiological states of players in a collaborative virtual reality gameplay. In: Mark G, Fussell S, editors. Proceedings of the 2017 CHI conference on human factors in computing systems; 2017 May 6-11; Denver, USA. New York: Association for Computing Machinery; 2017. p. 4045-4056.[DOI]

38. Gerry LJ. Paint with me: stimulating creativity and empathy while painting with a painter in virtual reality. IEEE Trans Vis Comput Graph. 2017;23(4):1418-1426.[DOI]

39. Gil K, Rhim J, Ha T, Doh YY, Woo W. AR Petite Theater: augmented reality storybook for supporting children’s empathy behavior. In: 2014 IEEE International Symposium on Mixed and Augmented Reality-Media, Art, Social Science, Humanities and Design (ISMAR-MASH’D); 2014 Sep 10-12; Munich, Germany. Piscataway: IEEE; 2014. p. 13-20.[DOI]

40. Choudhary Z, Norouzi N, Erickson A, Schubert R, Bruder G, Welch GF. Exploring the social influence of virtual humans unintentionally conveying conflicting emotions. In: 2023 IEEE Conference Virtual Reality and 3D User Interfaces (VR); 2023 Mar 25-29; Shanghai, China. Piscataway: IEEE; 2023. p. 571-580.[DOI]

41. Jing A, Gupta K, McDade J, Lee GA, Billinghurst M. Comparing gaze-supported modalities with empathic mixed reality interfaces in remote collaboration. In: 2022 IEEE International Symposium on Mixed and Augmented Reality (ISMAR); 2022 Oct 17-21; Singapore, Singapore. Piscataway: IEEE; 2022. p. 837-846.[DOI]

42. Semertzidis N, Vranic-Peters M, Andres J, Dwivedi B, Kulwe YC, Zambetta F, et al. Neo-noumena: augmenting emotion communication. In: Bernhaupt R, Mueller F, Verweij D, Andres J, editors. Proceedings of the 2020 CHI conference on human factors in computing systems; 2020 Apr 25-30; Honolulu, USA. New York: Association for Computing Machinery; 2020. p. 1-13.[DOI]

43. Masai K, Kunze K, Sugimoto M, Billinghurst M. Empathy glasses. In: Kaye J, Druin A, editors. Proceedings of the 2016 CHI Conference Extended Abstracts on Human Factors in Computing Systems; 2016 May 7-12; California, USA. New York: Association for Computing Machinery; 2016. p. 1257-1263.[DOI]

44. Guarese R, Polson D, Zambetta F. Immersive tele-guidance towards evoking empathy with people who are vision impaired. In: 2023 IEEE International Symposium on Mixed and Augmented Reality Adjunct (ISMAR-Adjunct); 2023 Oct 16-20; Sydney, Australia. Piscataway: IEEE; 2023. p. 809-814.[DOI]

45. Yong S, Cui L, Suma Rosenberg E, Yarosh S. A change of scenery: transformative insights from retrospective VR embodied perspective-taking of conflict with a close other. In: Mueller FF, Kyburz P, Williamson JR, Sas C, Wilson ML, A Dugas PT, Shklovski I, editors. Proceedings of the CHI Conference on Human Factors in Computing Systems; 2024 May 11-16; Honolulu, USA. New York: Association for Computing Machinery; 2024. p. 1-18.[DOI]

46. Sora-Domenjó C. Disrupting the “empathy machine”: the power and perils of virtual reality in addressing social issues. Front Psychol. 2022;13:814565.[DOI]

47. Vorauer JD, Sucharyna TA. Potential negative effects of perspective-taking efforts in the context of close relationships: Increased bias and reduced satisfaction. J Pers Soc Psychol. 2013;104(1):70.[DOI]

48. Wang CC, Volonte M, Ebrahimi E, Liu KY, Wong SK, Babu SV. An evaluation of native versus foreign communicative interactions on users’ behavioral reactions towards affective virtual crowds. In: 2022 IEEE Conference on Virtual Reality and 3D User Interfaces (VR); 2022 Mar 12-16; Christchurch, New Zealand. Piscataway: IEEE; 2022. p. 340-349.[DOI]

49. Benvegnù G, Pluchino P, Gamberini L. Virtual morality: using virtual reality to study moral behavior in extreme accident situations. In: 2021 IEEE Virtual Reality and 3D User Interfaces (VR); 2021 Mar 27; Lisboa, Portugal. Piscataway: IEEE; 2021. p. 316-325.[DOI]

50. Tse A, Jennett C, Moore J, Watson Z, Rigby J, Cox AL. Was I there? Impact of platform and headphones on 360 video immersion. In: Mark G, Fussell S, editors. Proceedings of the 2017 CHI conference extended abstracts on human factors in computing systems; 2017 May 6-11; Colorado, USA. New York: Association for Computing Machinery; 2017. p. 2967-2974.[DOI]

51. Kono M, Miyaki T, Rekimoto J. In-pulse: inducing fear and pain in virtual experiences. In: Spencer SN, editor. Proceedings of the 24th ACM Symposium on Virtual Reality Software and Technology; 2018 Nov 28; Tokyo, Japan. New York: Association for Computing Machinery; 2018. p. 1-5.[DOI]

52. Valente A, Lopes DS, Nunes N, Esteves A. Empathic aurea: exploring the effects of an augmented reality cue for emotional sharing across three face-to-face tasks. In: 2022 IEEE conference on virtual reality and 3D user interfaces (VR); 2022 Mar 12-16; Christchurch, New Zealand. Piscataway: IEEE; 2022. p. 158-166.[DOI]

53. Voigt-Antons JN, Spang R, Kojić T, Meier L, Vergari M, Möller S. Don’t worry be happy-using virtual environments to induce emotional states measured by subjective scales and heart rate parameters. In: 2021 IEEE Virtual Reality and 3D User Interfaces (VR); 2021 Mar 27; Lisboa, Portugal. Piscataway: IEEE; 2021. p. 679-686.[DOI]

54. Lee S, El Ali A, Wijntjes M, Cesar P. Understanding and designing avatar biosignal visualizations for social virtual reality entertainment. In: Barbosa S, Lampe C, Appert C, Shamma DA, Drucker S, Williamson J, Yatani K, editors. Proceedings of the 2022 CHI Conference on Human Factors in Computing Systems; 2022 Apr 29; New Orleans, USA. New York: Association for Computing Machinery; 2022. p. 1-15.[DOI]

55. Prachyabrued M, Wattanadhirach D, Dudrow RB, Krairojananan N, Fuengfoo P. Toward virtual stress inoculation training of prehospital healthcare personnel: A stress-inducing environment design and investigation of an emotional connection factor. In: 2019 IEEE Conference on Virtual Reality and 3D User Interfaces (VR); 2019 Mar 23-27; Osaka, Japan. Piscataway: IEEE; 2019. p. 671-679.[DOI]

56. Empatica [Internet]. Milan: Empatica Inc. Available from: https://www.empatica.com/

57. Li M, Pan J, Gao Y, Shen Y, Luo F, Dai J. Neurophysiological and subjective analysis of VR emotion induction paradigm. IEEE Trans Vis Comput Graph. 2022;28(11):3832-3842.[DOI]

58. Hou X, Sourina O. Emotion-enabled haptic-based serious game for post stroke rehabilitation. In: Thalmann NM, Wu E, Tachi S, editors. Proceedings of the 19th ACM symposium on virtual reality software and technology; 2013 Oct 6-9; Singapore, Singapore. New York: Association for Computing Machinery; 2013. p. 31-34.[DOI]

59. Kourtesis P, Amir R, Linnell J, Argelaguet F, MacPherson SE. Cybersickness, cognition, & motor skills: the effects of music, gender, and gaming experience. IEEE Trans Vis Comput Graph. 2023;29(5):2326-2336.[DOI]

60. Jhan XD, Wong SK, Ebrahimi E, Lai Y, Huang WC, Babu SV. Effects of small talk with a crowd of virtual humans on users’ emotional and behavioral responses. IEEE Trans Vis Comput Graph. 2022;28(11):3767-3777.[DOI]

61. Volonte M, Wang CC, Ebrahimi E, Hsu YC, Liu KY, Wong SK. Effects of language familiarity in simulated natural dialogue with a virtual crowd of digital humans on emotion contagion in virtual reality. In: 2021 IEEE Virtual Reality and 3D User Interfaces (VR); 2021 Mar 23-27; Lisboa, Portugal. Piscataway: IEEE; 2021. p. 188-197.[DOI]

62. Volonte M, Hsu YC, Liu KY, Mazer JP, Wong SK, Babu SV. Effects of interacting with a crowd of emotional virtual humans on users’ affective and non-verbal behaviors. In: 2020 IEEE Conference on Virtual Reality and 3D User Interfaces (VR); 2020 Mar 22-26; Atlanta, USA. Piscataway: IEEE; 2020. p. 293-302.[DOI]

63. Pan Y, Zhang R, Cheng S, Tan S, Ding Y, Mitchell K. Emotional voice puppetry. IEEE Trans Vis Comput Graph. 2023;29(5):2527-2535.[DOI]

64. Wang Z, Ling J, Feng C, Lu M, Xu F. Emotion-preserving blendshape update with real-time face tracking. IEEE Trans Vis Comput Graph. 2020;28(6):2364-2375.[DOI]

65. Suzuki K, Nakamura F, Otsuka J, Masai K, Itoh Y, Sugiura Y. Recognition and mapping of facial expressions to avatar by embedded photo reflective sensors in head mounted display. In: 2017 IEEE Virtual Reality (VR); 2017 Mar 18-22; Los Angeles, USA. Piscataway: IEEE; 2017. p. 177-185.[DOI]

66. Bekele E, Zheng Z, Swanson A, Crittendon J, Warren Z, Sarkar N. Understanding how adolescents with autism respond to facial expressions in virtual reality environments. IEEE Trans Vis Comput Graph. 2013;19(4):711-720.[DOI]

67. Lohesara FG, Freitas DR, Guillemot C, Eguiazarian K, Knorr S. HEADSET: Human emotion awareness under partial occlusions multimodal dataset. IEEE Trans Vis Comput Graph. 2023;29(11):4686-4696.[DOI]

68. Baloup M, Pietrzak T, Hachet M, Casiez G. Non-isomorphic interaction techniques for controlling avatar facial expressions in VR. In: Itoh Y, Takashima K, Punpongsanon P, Sra M, Fujita K, Yoshida S, Piumsomboon T, editors. Proceedings of the 27th ACM Symposium on Virtual Reality Software and Technology; 2021 Dec 8-10; Osaka, Japan. New York: Association for Computing Machinery; 2021. p. 1-10.[DOI]

69. Choudhary Z, Kim K, Schubert R, Bruder G, Welch GF. Virtual big heads: analysis of human perception and comfort of head scales in social virtual reality. In: 2020 IEEE Conference on Virtual Reality and 3D User Interfaces; 2020 Mar 22-26; Atlanta, USA. Piscataway: IEEE; 2020. p. 425-433.[DOI]

70. Bönsch A, Radke S, Overath H, Asché LM, Wendt J, Vierjahn T. Social VR: how personal space is affected by virtual agents’ emotions. In: 2018 IEEE Conference on Virtual Reality and 3D User Interfaces (VR); 2018 Mar 18-22; Tuebingen/Reutlingen, Germany. Piscataway: IEEE; 2018. p. 199-206.[DOI]

71. Waltemate T, Gall D, Roth D, Botsch M, Latoschik ME. The impact of avatar personalization and immersion on virtual body ownership, presence, and emotional response. IEEE Trans Vis Comput Graph. 2018;24(4):1643-1652.[DOI]

72. Norouzi N, Gottsacker M, Bruder G, Wisniewski PJ, Bailenson J, Welch G. Virtual humans with pets and robots: exploring the influence of social priming on one’s perception of a virtual human. In: 2022 IEEE Conference on Virtual Reality and 3D User Interfaces (VR); 2022 Mar 12-16; Christchurch, New Zealand. Piscataway: IEEE; 2022. p. 311-320.[DOI]

73. Moustafa F, Steed A. A longitudinal study of small group interaction in social virtual reality. In: Spencer SN, editor. Proceedings of the 24th ACM symposium on virtual reality software and technology; 2018 Nov 28; Tokyo, Japan. New York: Association for Computing Machinery; 2018. p. 1-10.[DOI]

74. Gupta K, Lee GA, Billinghurst M. Do you see what I see? The effect of gaze tracking on task space remote collaboration. IEEE Trans Vis Comput Graph. 2016;22(11):2413-2422.[DOI]
