Abstract

This paper explores two virtual child simulators, BabyX and the VR Baby Training Tool, which provide immersive, interactive platforms for child-focused research and training. These technologies address key ethical and practical constraints, enabling the systematic study of caregiver-infant interactions and the development of professional relational skills with young children. We examine their design, features, and applications, as well as their future trajectories, highlighting their potential to advance research and improve training methodologies.

Keywords

1. Introduction

In her 2021 TED Talk, seven-year-old Molly Wright posed a simple but profound question: “What if I were to tell you that a game of peek-a-boo could change the world?” Her sincerity resonated with audiences worldwide, and the video quickly went viral. Yet Molly’s presentation was not merely endearing—it was rooted in science. Decades of research have demonstrated that early caregiver-child interactions play a pivotal role in shaping developmental outcomes. Even seemingly simple, play-based exchanges such as peek-a-boo contribute to the formation of the fundamental building blocks of human cognition[1-3].

Understanding the dynamics of early caregiver-child interactions, both theoretically and practically, is essential to ensuring that parents, caregivers, and professionals working with young children are equipped with the relational competencies necessary to promote child well-being and optimal developmental outcomes[4,5]. However, research and training in child-focused fields face significant challenges. Ethical and behavioural considerations, while necessary, substantially constrain experimental designs, making it difficult to systematically examine early interactions and draw causal inferences. Similar limitations exist in training contexts such as early childhood education (ECE) and paediatric healthcare, where providing structured, hands-on, and immersive experiences is logistically demanding and may pose risks to both trainees and children, especially infants[6]. Although mannequin-based simulators have been widely used in such settings, they primarily emphasize anatomical and physiological accuracy, lacking the cognitive, emotional, and interactive components essential for studying and practising complex adult-infant relational dynamics.

This paper examines two innovative virtual child simulation platforms, BabyX and the Virtual Reality Baby Training Tool (VRBTT), developed to address the aforementioned challenges. Unlike traditional mannequin-based simulators, these virtual platforms incorporate complex social behaviours within lifelike, interactive agents, thereby extending the focus of training and research from purely procedural competencies to relational skills. BabyX simulates cognitive and emotional processes, enabling researchers to systematically manipulate variables that would be ethically or practically impossible to alter in studies involving human infants. This capability allows for causal investigations into caregiver-child interactions and mechanisms of socially situated learning. In contrast, the VRBTT immerses professionals in embodied, relational interactions with virtual infants endowed with individualised personalities and temperamental traits. Rather than modelling the behaviour of a generic or “universal” infant, the VRBTT presents infants with distinct dispositions, enhancing practitioners’ abilities to perceive, interpret, and respond to nuanced infant cues. This supports sensing pedagogies[6,7] and fosters the development of responsive caregiving and pedagogical expertise. Moreover, the VRBTT offers trainees immersive, interactive, and adaptive learning environments that more closely replicate the complexity of real-world caregiving and professional settings, thus providing a more robust foundation for relational skill development.

By overcoming longstanding barriers in both child-focused research and training, virtual child simulators offer novel tools within the domain of empathic computing that both complement and extend traditional methodologies. These technologies enable new insights into child development while enhancing the capacity of caregivers and professionals to cultivate essential adult-child relational skills.

2. Challenges in Child-Focused Research and Training

Research involving children presents inherent challenges, largely due to strict ethical guidelines that, rightly, prioritise child well-being. These regulations place clear limitations on what can be studied and require adherence to specific protocols to ensure the continuous safety and autonomy of young participants[8]. In addition to these ethical constraints, children’s inherent unpredictability as research participants further complicates experimental design. Unlike adults, infants and young children may disengage from tasks, fail to follow instructions, or respond in unexpected ways. Furthermore, many fundamental developmental questions remain difficult to investigate experimentally, as researchers are unable to control or elicit infant behaviour with precision.

For instance, studying how infant communicative cues influence caregiver responsiveness is particularly difficult, given that such cues cannot be reliably or systematically evoked in research settings. Likewise, experimentally examining the effects of adverse childhood experiences on brain development and behaviour is ethically unfeasible. As a result, much of the existing knowledge in these domains is derived from correlational studies and case reports. While valuable, these methodologies do not permit causal inference, thereby limiting our understanding of the active role that infants play in shaping their social environments through early interactions.

While these challenges are significant in research contexts, they also extend into professional training environments. In ECE settings, trainee teachers often struggle to obtain adequate hands-on experience with infants and young children[5,9], particularly in complex or high-stress situations. Furthermore, the diverse and unpredictable nature of young children’s behaviour means that practical training experiences can vary greatly among trainees, depending on the individual child and the specific circumstances they encounter.

Effective ECE environments depend heavily on the quality of teacher-child relationships; thus, the development of relational expertise is essential. This expertise is best cultivated through embodied learning—experiencing and practising relationship-building through sensory and emotional engagement[5-7,10]. However, inconsistency or insufficiency in practical experience during training presents a significant barrier to achieving this objective.

In addition, when working with children, there is often limited opportunity to pause, reflect on one’s decisions, explore alternative approaches, or collaborate with peers during the learning process. This challenge is equally relevant in medical fields, where students must be trained to manage situations involving infants and young children with developmental challenges, illnesses, or those who may be victims of abuse. Such sensitive and high-risk scenarios are inherently difficult to replicate in real-life training environments. Even in less demanding contexts, the assessment of infants’ and young children’s development and well-being is strongly influenced by individual temperament—a factor that cannot be consistently or realistically simulated in conventional training settings.

3. Simulated Children

The concept of simulated children has a long-standing history in both research and training, particularly within the field of medicine[11]. In Western societies, infant-like mannequins—historically referred to as “obstetrical phantoms”—have been used since at least the 18th century to train midwives and physicians in childbirth techniques[12]. Simulated children have also featured prominently in children’s play. Through engagement with dolls and figurines, children rehearse social interactions, fostering the development of empathy, relational skills, and caregiving behaviours[13,14].

Today, simulation is widely used in medical training, including in paediatrics[15], and constitutes a dynamic area of ongoing research[16]. Advances in patient simulation technologies have resulted in high-fidelity mannequin simulators (or “manikins”) that represent a wide range of age groups, from premature infants to elderly adults. Entry-level manikins reproduce anatomical features and basic physiological functions such as respiration, heartbeat, pulse, and pupil dilation. More advanced models simulate clinical symptoms, including respiratory distress or seizures, making them essential tools for specialised paediatric training in areas such as neonatal resuscitation, airway management, and advanced life support. These simulators allow learners to acquire critical skills in safe, controlled environments before interacting with human patients.

In parallel, low-fidelity infant mannequins are commonly used in ECE training to provide low-risk opportunities for students to practise essential infant care tasks—such as feeding, diaper changing, and safe handling. These tools serve as a valuable initial step in preparing trainee teachers before they engage with real infants. Beyond professional contexts, infant mannequins are also utilised in parenting education and teen pregnancy prevention programs, offering users a realistic approximation of the demands associated with infant care.

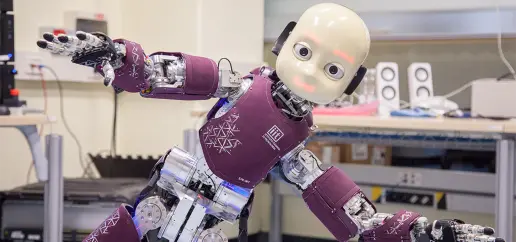

Another domain where simulated children have been developed is developmental robotics—an interdisciplinary field that seeks to advance robotics through insights from developmental psychology[17], while simultaneously using robotic models to better understand human social and cognitive development[18].

For example, researchers at the Istituto Italiano di Tecnologia developed “iCub” (Figure 1), a humanoid robot platform designed to investigate embodied cognitive development through autonomous exploration and social interaction[20]. The iCub, approximately the size of a five-year-old child, is equipped with an extensive suite of sensory, cognitive, and motor capabilities, including binocular vision, speech recognition and generation, full-body tactile sensitivity, emotional expression recognition, and complex movements such as walking, crawling, and sitting. The iCub can also learn motor tasks through imitation, reinforcement learning, and human feedback. As an open-source platform, the iCub has been employed across a wide range of research domains—from cognitive development to human-robot collaboration[21]. As of 2024, more than 40 iCubs are in use in laboratories across Europe, the United States, and Asia[22].

Evidently, simulated children, ranging from obstetrical phantoms to humanoid robots, have long been developed and employed to help individuals prepare for and better understand scenarios that are difficult or unethical to replicate using real children. However, current approaches exhibit notable limitations. In particular, mannequin-based simulators typically prioritise the physical and physiological simulation of children, while placing less emphasis on behavioural and cognitive dimensions. Even the most advanced models offer restricted behavioural repertoires and lack the capacity for authentic, interactive engagement with humans. This limitation significantly curtails their utility in research and training contexts that demand a nuanced understanding of social, emotional, and cognitive processes.

While child-like humanoid robots such as iCub are capable of advanced interactivity and cognitive modelling, their appearance and physical design do not closely resemble human children. This discrepancy reduces their ecological validity for studying adult-child interactions. Furthermore, their prohibitive cost (approximately $275,000 USD) renders them inaccessible to most educational and research institutions.

As a result, there is a clear and growing need for accessible simulation technologies that integrate physical realism with sophisticated behavioural and cognitive functionality. Such tools could facilitate more comprehensive training and empirical investigation, while helping to overcome longstanding methodological barriers in child-focused disciplines.

The following section introduces two such initiatives, BabyX and the VRBTT, which seek to address these challenges. These projects explore the potential of virtual simulated children to enhance adult-child interactions and open up new avenues for research, education, and professional training.

4. Virtual Infants: BabyX and the VRBTT

4.1 BabyX

4.1.1 Background

BabyX (Figure 2) is a highly realistic and interactive virtual model of a two-year-old child, developed at the Laboratory for Animate Technologies within the Auckland Bioengineering Institute at the University of Auckland, in collaboration with the spin-off company Soul Machines and researchers from the Early Learning Lab (ELLA)[23-25]. This ongoing project aims to create an experimental platform capable of embodying a range of biologically based computational models that (a) contribute to scientific understanding of brain function, and (b) autonomously generate expressive behaviours and support social learning in virtual agents for potential commercial applications.

Guided by principles from developmental robotics, the project adopts a developmental and embodied approach, seeking to embed the core cognitive processes underlying early social learning and communication within situated experiences. Previous research on computational models of infant development has often been constrained to specific domains, such as motor development[26], language acquisition[27-29], or cognitive processes studied in isolation from the body[30,31].

In contrast, the BabyX project recognises the interconnectedness of cognitive systems[32], emphasising the importance of holistic models that capture how multiple cognitive processes dynamically interact with each other and with the environment to produce behaviour[33-35]. In addressing this complexity, the BabyX system seeks to simulate both the brain and body of a child, along with their reciprocal interactions, in order to more accurately model early development in a realistic and integrated manner.

4.1.2 Features and applications

BabyX integrates a graphical representation of an 18- to 24-month-old infant’s physical form, including a movable head, eyes, trunk, and limbs, with a neural network environment designed to simulate cognitive processes. Situated within an immersive 3D environment, BabyX interacts with its surroundings through simulated haptic feedback mechanisms.

Crucially, BabyX’s neural architecture processes visual and auditory inputs delivered via a webcam and microphone, enabling real-time, face-to-face interaction through a standard laptop or computer. BabyX is sensitive to users’ tone of voice, facial expressions, and visual attention, allowing for naturalistic social exchanges. Additionally, BabyX exhibits a sophisticated emotional repertoire, conveyed through dynamic facial expressions, vocalisations, and internally modelled neural states, including fluctuations in artificial neurotransmitters such as dopamine and cortisol.

Users can engage BabyX in a range of interactive tasks, including cognitive games like block-stacking and puzzles, as well as social exchanges such as peek-a-boo. These interactions contribute to the training of BabyX’s cognitive models in a manner that seeks to closely replicate developmental learning processes in human infants.

Although conceptualised within the fields of virtual agents and social robotics, BabyX presents unprecedented opportunities for research in developmental psychology. Unlike human infants, BabyX’s behaviours can be systematically manipulated, enabling researchers to experimentally test key hypotheses regarding the influence of infants on their interactive partners in ways that are both novel and ethically viable.

For instance, while decades of research have highlighted the importance of contingently responsive interactions in infant development[36-46], these studies have largely focused on how caregivers shape infant behaviour, often framing caregivers as the primary initiators in the interaction—treating infants “as if” they were active participants. However, seminal work by Tronick et al.[47] demonstrated that both infants and caregivers are active contributors in these social exchanges. Therefore, a comprehensive understanding of bi-directional caregiver-infant dynamics requires exploring not only how caregivers influence infant behaviour, but also how infants shape caregiver responses.

4.1.3 Upcoming developments for BabyX

In 2024, researchers at the Early Learning Lab (https://www.earlylearning.ac.nz/) launched the first study utilising BabyX to explore the bi-directional dynamics of caregiver-infant interactions. ELLA’s Completing the Loop project aims to investigate how and under what conditions caregivers are sensitive to infants’ communicative signals. The overarching goal is to isolate the effects of specific infant signals on caregiver behaviour, thereby advancing our understanding of the reciprocal processes central to early relational development. Findings from this study are expected to contribute to theoretical frameworks that emphasise the mutual influence within caregiver-infant interactions by specifying how infants actively shape these exchanges. Additionally, data gathered through this research will be used to test, refine, and validate BabyX’s cognitive models.

Building on this initial study, a long-term objective of the Completing the Loop project is to support caregivers in developing effective communication and emotional regulation strategies, particularly in challenging scenarios such as when a child is uncooperative or distressed. Such situations require caregivers to remain calm under pressure, manage their own emotional responses, and employ techniques that de-escalate conflict while maintaining positive, supportive relationships with the child. To this end, the ELLA team will investigate approaches to improve caregiver sensitivity and responsiveness to infant cues, facilitating more effective adult-child communication and interaction.

Beyond its immediate applications, BabyX will continue to be used in experimental developmental psychology, providing a platform to further investigate the underlying mechanisms of learning, emotion, and social interaction.

Although still in its early stages, BabyX represents a promising research platform for addressing previously inaccessible questions in child development. However, one key limitation of the current system is its reliance on a 2D interface, which restricts the level of immersion and embodied interaction. In contrast, a related initiative from Aotearoa New Zealand adopts a more immersive approach, which is explored in the following section.

4.2 VRBTT

4.2.1 Background

Recent advancements in virtual reality (VR) technology have led to its increasing adoption across domains beyond gaming and entertainment. In particular, the immersive, interactive, and multisensory capabilities of VR[48-50] offer novel and engaging opportunities for education and training[51]. Researchers increasingly recognise VR’s potential to enhance learning outcomes in diverse settings[52]. A widely acknowledged advantage of VR is its ability to evoke a strong sense of presence, a heightened subjective experience in which users feel they are genuinely interacting within a virtual environment. This sense of presence can elicit realistic and ecologically valid user responses[53]. As a result, VR-based experiences have proven particularly effective at promoting empathic responses and perspective-taking, skills that are critical in people-focused professions, such as teaching and healthcare[52].

One recent initiative leveraging these capabilities is the VRBTT (Figure 3), developed by the Faculty of Education and the Human Interface Technology Lab NZ at the University of Canterbury (https://www.hitlabnz.org/), in collaboration with the university’s Child Well-being Research Institute. The project aims to create a highly realistic VR simulation of a six-month-old infant for use in professional education. In contrast to the 2D screen-based interaction of BabyX, the VRBTT enables users to engage with an embodied infant simulation within a shared, immersive virtual environment. The VRBTT seeks to expand the educational toolkit for fields such as ECE and healthcare, offering a safe and interactive platform for professionals to develop and refine fundamental relational skills.

Like the BabyX project, a central objective of the VRBTT is to improve the quality of care provided to infants, though it is specifically tailored to professional practice contexts. At the heart of the VRBTT is the concept of sensorial and embodied responsivity, or sensing pedagogies[6,7,10], which emphasise intuitive, body-based engagement as a foundation for relational learning. Rather than simulating a generic or “universal” infant, the VRBTT encourages educators to attune to each infant’s individualised communicative and affective cues, aligning with relational pedagogical and developmental frameworks that view learning as a co-constructed, dynamic, and dyadic process[54,55]. As White[54] notes, effective caregiving does not rely on predetermined responses, but rather involves the moment-to-moment negotiation of meaning within specific relational and contextual dynamics.

In this way, the VRBTT offers a structured yet immersive training environment in which professionals can hone their pedagogical competencies through embodied interaction. Trainees must attend closely to both verbal and non-verbal cues, developing the capacity to establish trusting, reciprocal relationships with virtual infants. Each virtual infant responds in a unique manner, requiring caregivers to engage in adaptive and relational attunement, reinforcing this as a core competency not only in early childhood education, but in adult-infant interactions more broadly.

4.2.2 Features and applications

The VRBTT prototype was developed using Unity and designed for deployment on the Oculus Quest 2 HMD, supported by a mid- to high-performance PC equipped with a dedicated GPU (e.g., Intel Core i7, 16-32 GB RAM, NVIDIA RTX 2080 GPU, Windows 10). This configuration ensures a balance between high-fidelity immersion and cost-effectiveness. The system features a fully embodied, interactive, and lifelike virtual infant situated within a digitally rendered nursery environment that includes realistic objects such as a changing table, toys, and a cot. The virtual infant can be picked up, held, repositioned, or put down, and it responds to user interaction in a realistic and contingent manner. For instance, it reacts to stimuli such as waving objects in front of its face or rhythmic rocking motions.

The infant also exhibits a range of expressive and communicative behaviours, including facial expressions (e.g., crying, laughing), gaze shifts, and more subtle cues such as rubbing its eyes when tired or babbling contentedly. Furthermore, it can be programmed to display rudimentary preferences, such as favouring a specific toy (e.g., a duck) or responding more positively to certain features (e.g., the colour yellow).

Initial user studies have demonstrated the VRBTT’s potential to elicit authentic caregiver-infant interactions[6]. Users frequently responded to the virtual infant in ways comparable to real-world interactions, engaging in playful exchanges, soothing it when distressed, and responding intuitively to its cues. Notably, most participants spoke or even sang to the virtual baby, despite being informed that such behaviours would not influence its responses. In particular, incidents of accidental mishandling or dropping of the virtual infant evoked strong emotional reactions from both participants and observers. These findings are consistent with prior work[56] and suggest that the sense of presence and interactivity achieved through the VRBTT makes it a compelling platform for relational skill development.

4.2.3 Upcoming developments for the VRBTT

Following receipt of funding from New Zealand’s Ministry of Business, Innovation and Employment Endeavour Fund, the VRBTT project is now entering a new phase focused on expanding its industry applications. A primary goal is to incorporate increasingly sophisticated behavioural models that reflect individual differences in infant temperament and personality. For example, one virtual infant model may demonstrate higher levels of surgency, characterised by enjoyment of novelty, frequent smiling and laughter, and quicker recovery from distress or stimulation. Another may display greater negative affectivity, evidenced by heightened sensitivity to intense or unexpected stimuli, reduced positive emotionality, and slower recovery. Additionally, users will need to adapt to each infant’s unique preferences, such as favouring specific objects or responding more positively to certain communication styles. These refinements will enhance the dispositional realism and behavioural nuance of the simulation, further strengthening users’ capacity to interpret and respond to complex infant cues[6].
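To make the idea of temperament-specific behavioural models more concrete, the following minimal Python sketch shows one way such individual differences could be parameterised. It is an illustration only and does not describe the VRBTT implementation (which is built in Unity). The class `TemperamentProfile`, the functions, the parameter names, and all numeric values are hypothetical assumptions introduced here. Higher surgency is represented as lower reactivity and faster recovery, and higher negative affectivity as higher reactivity and slower recovery.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class TemperamentProfile:
    """Hypothetical temperament parameters (illustrative values only)."""
    name: str
    reactivity: float     # how strongly a stimulus raises distress (0..1)
    recovery_rate: float  # fraction of distress shed per soothing step (0..1)
    preferred_toy: str    # assumed individual preference


def apply_stimulus(distress: float, intensity: float,
                   profile: TemperamentProfile) -> float:
    """Raise distress in proportion to stimulus intensity, capped at 1.0."""
    return min(1.0, distress + profile.reactivity * intensity)


def soothe(distress: float, profile: TemperamentProfile,
           toy: Optional[str] = None) -> float:
    """One soothing step; recovery is faster if the preferred toy is offered."""
    rate = profile.recovery_rate
    if toy == profile.preferred_toy:
        rate = min(1.0, rate * 1.5)
    return distress * (1.0 - rate)


def steps_to_calm(profile: TemperamentProfile, intensity: float,
                  threshold: float = 0.1, toy: Optional[str] = None,
                  max_steps: int = 100) -> int:
    """Count soothing steps needed after one stimulus to fall below threshold."""
    distress = apply_stimulus(0.0, intensity, profile)
    steps = 0
    while distress > threshold and steps < max_steps:
        distress = soothe(distress, profile, toy)
        steps += 1
    return steps


# Two hypothetical infants with contrasting dispositions.
HIGH_SURGENCY = TemperamentProfile(
    "high surgency", reactivity=0.4, recovery_rate=0.5, preferred_toy="duck")
HIGH_NEGATIVE_AFFECTIVITY = TemperamentProfile(
    "high negative affectivity", reactivity=0.9, recovery_rate=0.2,
    preferred_toy="rattle")

if __name__ == "__main__":
    for infant in (HIGH_SURGENCY, HIGH_NEGATIVE_AFFECTIVITY):
        plain = steps_to_calm(infant, intensity=0.8)
        with_toy = steps_to_calm(infant, intensity=0.8, toy=infant.preferred_toy)
        print(f"{infant.name}: {plain} steps, {with_toy} with preferred toy")
```

In this sketch, the same stimulus and the same soothing strategy produce different outcomes depending on the profile, which is the kind of variation a trainee would need to notice and adapt to. A real behavioural model would be far richer than two scalar parameters.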

Currently, the VRBTT environment simulates a standard ECE context, focusing on one-on-one interactions between a single caregiver and an infant. Moving forward, the project will collaborate with industry partners to co-design tailored application frameworks suited to a range of professional learning contexts. For example, ECE students might use the system to practise interpreting infants’ communicative signals and responding with appropriate relational strategies. In contrast, healthcare professionals could use the platform to refine their observational and diagnostic skills, while also maintaining rapport with the infant. The system will also support the integration of high-stress or complex scenarios to better reflect real-world challenges. For instance, ECE trainees may need to manage interactions with multiple children simultaneously, while medical students might need to handle emergency procedures while keeping the infant calm and regulated.

In parallel, full empirical validation of the VRBTT is a critical next step. Future research will assess the tool’s effectiveness in supporting learning outcomes and decision-making in training scenarios, while also identifying key barriers to implementation, such as system accessibility and user adoption. Specific investigations will examine how the VRBTT affects the development of relational and “sensing” skills among ECE and healthcare professionals. Additionally, comparative studies will explore which interaction modalities, such as controllers, haptic gloves, or hand-tracking interfaces, best support user experience and learning effectiveness when engaging with the virtual infant.

Beyond these immediate applications, the VRBTT represents a promising platform for advancing higher-order learning within VR-based education. As noted by Hamilton et al.[52], VR has thus far been primarily used to teach lower-level skills and knowledge, whereas the promotion of higher-level cognitive processes, as defined by Bloom[57], remains limited. Higher-level learning involves the ability to analyse, synthesise, and evaluate, thereby enabling learners to deepen their understanding and transfer knowledge across contexts.

As a virtual training tool, the VRBTT offers learners the ability to pause, reflect, and retry interactions, capabilities that are not always feasible in real-world settings. For instance, after applying a particular soothing technique with the virtual infant, learners can reflect on its effectiveness, receive feedback, and re-engage with the interaction using an alternative approach. This structure not only supports individual experiential learning, but also fosters a collaborative educational environment, wherein students can collectively reflect and discuss moment-to-moment experiences to deepen their relational competence.

5. Future Applications and Considerations for Simulated Children in Research and Training

The projects outlined above showcase two innovative, empathic computing initiatives that leverage immersive and interactive technologies to overcome challenges in research and training involving children.

As these technologies continue to evolve, we anticipate that they will open new avenues for exploration, broadening the range of applications and advancing the field of empathic computing. Below, we discuss possible future directions for the BabyX and VRBTT projects, and explore additional applications and research areas involving simulated children.

5.1 Integrating cognitive modelling and immersive interaction

Although the BabyX and VRBTT projects have distinct objectives, they share a common goal: enhancing adults’ relational skills with young children through embodied, interactive simulations. Both initiatives aim to develop tools that improve users’ ability to interpret and respond to children’s communicative behaviours in real time. These projects are mutually informative, with each offering unique strengths that complement the other (Table 1).

| Feature | BabyX | VRBTT |
| --- | --- | --- |
| Simulation Environment | 2D interactive model | Fully immersive VR environment |
| Target Age Simulation | 18- to 24-month-old infant | 6-month-old infant |
| Cost Factors | High computational costs; requires advanced AI processing infrastructure or deployment from such a machine | Moderate hardware cost; consumer-level systems |
| Required Hardware/Software | High-performance computing system with GPU acceleration; webcam, microphone | VR system (e.g., Oculus Quest 2) and mid- to high-spec PC with dedicated graphics |
| Key User Experiences | Currently designed for research; allows precise manipulation of variables in developmental psychology experiments; real-time, screen-based interactions with avatar | Currently designed for professional training; enables real-time, immersive interactions and hands-on relational skill development |
| Interactivity | Webcam and microphone enable face-to-face interactions; sensitive to tone, facial expressions, and visual attention | Users interact through VR controllers, haptic gloves, or hand tracking; responds to physical touch and movement |
| Focus Areas | Cognitive and emotional modelling with neural networks; social contingency in early communicative development | Embodied, relational skill-building with a focus on professional education |
| Applications | Developmental psychology research, especially bi-directional dynamics in caregiver-infant interactions | Training in professional contexts for relational expertise |
| Behavioural Customisation | Customisation of cognitive and emotional processes, as well as affective characteristics | Customisation of infant characteristics (such as personality and temperament) and care contexts |
| Limitations | Restricted to 2D interface, limiting immersion and embodied interaction | Limited cognitive and emotional complexity compared to BabyX |
| Innovative Features | Neural network-driven dynamic responses; can “train” BabyX through social interactions | Embodied interactions in shared virtual spaces; ability to pause, reflect, and retry training scenarios |
| Development Teams | Auckland Bioengineering Institute’s Laboratory for Animate Technologies (UoA); Soul Machines; Early Learning Lab (UoA) | HIT Lab NZ (UC); Faculty of Education (UC) |

VRBTT: Virtual Reality Baby Training Tool; VR: virtual reality; 2D: two-dimensional; HIT: Human Interface Technology.

BabyX is built on advanced cognitive modeling, enabling realistic emotional responses and adaptive interactions based on artificial intelligence. These capabilities allow users to engage with a virtual infant that displays nuanced facial expressions, emotional shifts, and responsive behaviors. However, while BabyX is embodied, users do not share the same environmental space. User interactions with BabyX are limited to a two-dimensional, screen-based interface, restricting the spatial aspects of adult-child interactions that are essential for developing embodied “sensing” skills.

In contrast, the VRBTT offers a fully immersive, interactive environment where users can physically engage with a virtual infant in a three-dimensional space. This embodiment allows for more naturalistic adult-child interactions, helping users refine their nonverbal communication and embodied senses. However, while the VRBTT provides a rich interactive system, it does not yet incorporate the advanced emotional expressivity and cognitive modelling seen in BabyX. As a result, while users can physically engage with the virtual infant, the range of realistic emotional responses and behavioural adaptations remains more limited.

Recognizing these complementary strengths, future collaborations between the BabyX and VRBTT research teams could aim to integrate key features from both platforms. Combining BabyX’s cognitive and emotional modelling capabilities with the VRBTT’s immersive and embodied interaction framework could yield a more holistic and effective child simulation tool. This integration could enable users not only to interact physically with a virtual child in an intuitive way but also to experience a broader range of emotionally and cognitively nuanced responses, enhancing their ability to recognize and respond to communicative cues. Such advancements could significantly impact professional training in early childhood education, healthcare, and caregiver support, leading to improved real-world caregiving practices.

5.2 Ethical and practical implications of virtual children

The realism and complexity of simulated humans raise numerous ethical concerns, especially when simulating children[58]. Potential biases in a simulation’s cultural or socioeconomic assumptions may compromise both research validity and training inclusivity. For example, an over-representation of Western, middle-class caregiving norms could result in cognitive models or training modules that overlook or misrepresent the diverse ways in which infants communicate and interact across different cultures. Incorporating multicultural perspectives (as discussed below) is one approach to mitigating the risk of perpetuating biases. Future research should focus on identifying additional design principles that reduce this risk and ensure more diverse and accurate representation.

Similarly, the possibility of virtual child mistreatment demands serious consideration, especially regarding the risk that harmful behaviours toward simulated children could transfer to real-world interactions. While research suggests that individuals generally exhibit appropriate behaviour toward virtual agents in VR[59], this is not always the case. For instance, the VRBTT pilot study revealed instances of abusive behaviour toward the simulated child, such as grabbing the infant by one arm and letting it dangle in the air.

Concerns about behavioural transfer from digital environments to real life have been extensively explored in gaming research, particularly regarding violent video games and their potential influence on aggression. While the findings remain mixed[60-63], the debate underscores the broader issue of how virtual experiences can shape real-world behaviours. Similar ethical concerns have been raised regarding virtual child exploitation, including the potential normative impact of virtual child sexual abuse material[64,65] and the creation and use of childlike avatars in sexually explicit contexts[66].

While the normative effects of virtual child abuse are challenging to test empirically, Knott et al.[58] highlight evidence suggesting that realism and immersion may play a critical role in modulating such effects[67,68]. Given the highly realistic and immersive nature of VR, social behaviours practiced within virtual environments may be particularly prone to influencing real-world interactions. Crucially, it is this same mechanism that underpins the argument that VR experiences can enhance relational skills. However, it also applies to negative learning experiences—meaning that harmful behaviours normalized in VR could, in turn, become normalized in reality, with significant real-world consequences. From this perspective alone, the ethical treatment of simulated children demands serious attention.

Another important ethical question concerns the moral status of the simulated child as an agent in its own right. The debate over whether artificial entities deserve moral consideration has persisted for decades[69-74], and the criteria by which we assign moral status to existing entities remain contested[75-78]. While most would intuitively dismiss the idea that a simulated child (essentially a piece of software) requires moral consideration, some argue that this assumption deserves closer scrutiny.

Danaher’s ethical behaviourism[73] challenges the traditional view that moral status should be based on metaphysical properties such as consciousness. He argues that we lack direct access to such states, and instead, behaviour is the only reliable indicator of moral status. As he explains: “The ethical behaviourist points out that our ability to ascertain the existence of each and every one of these metaphysical properties is ultimately dependent on some inference from a set of behavioural representations. Behaviour is then, for practical purposes, the only insight we have into the metaphysical grounding for moral status”.

From this perspective, moral status should be granted based solely on exhibited behaviour. Specifically, if an agent’s behaviour is “roughly performatively equivalent” to that of an agent already considered to have moral status, then it should be granted the same moral status. In this context, if a virtual child behaves in a way that closely resembles a biological child, it should be afforded the same moral status. Danaher argues that such an approach would better respect our “epistemic limits”.

While this perspective may be controversial, such questions will become increasingly relevant as advancements in AI lead to the creation of more sophisticated virtual agents. As these technologies become more capable of mimicking human-like behaviour, emotional responses, and cognitive functions—particularly in the context of child development—the boundary between simulation and sentience may become increasingly blurred. This raises critical ethical considerations, including how these agents should be treated.

Taken together, these concerns mean that researchers must exercise caution when developing child simulations. As suggested by Knott et al.[58], the design of child simulation software can incorporate safeguards to prevent the mistreatment of its virtual children. For instance, restrictions could be implemented to prohibit undesirable interactions, such as interface mechanics that prevent the simulated child from being thrown or touched inappropriately, or a defined set of user behaviours that trigger automatic shutdown of the session when detected. However, such restrictions may also limit the immersive and realistic aspects of the experience, potentially reducing the scope of learning opportunities available to users. For example, education providers might want to use the software to help users learn to manage angry outbursts, practice infant handling, or perform diaper changes. Therefore, alternative solutions are needed to balance the utility of this technology with safety for users and the wider community.

Lastly, while simulated child technology offers a safe and low-risk environment to study and practice relational skills, some scenarios, especially emergencies or complex situations, may induce stress in participants. This highlights the importance of designing experiential and psychologically safe learning environments around the technology. As these simulated contexts are further developed for both projects, it is critical to integrate principles of experiential learning (as outlined by Lindsey and Berger[79] and further explored by Hirumi et al.[80]). These principles include providing structured briefing and debriefing phases to facilitate reflection and discussion, as well as carefully determining the appropriate timing for introducing simulated child contexts into relevant curricula.

Future research must focus on (a) defining and enforcing ethical standards for the development of simulated children, (b) understanding the potential negative impact of unethical engagement with virtual children, and (c) developing and implementing guidelines that ensure the safety of both human participants and virtual children.

5.3 Embedding multicultural perspectives

The VRBTT project rests on the concept of whanaungatanga, the Māori value of relationships and connectedness. A key outcome of this project is to ensure that culturally grounded approaches are integrated into the training of professionals working with ngā pāpi (infants). The project embeds whanaungatanga into virtual training experiences by enabling kaiako (teachers) to be trained in relational and sensing skills aligned with the mātāpono (principle) of whanaungatanga, which embraces the formation, maintenance and strengthening of relationships. By designing interactions that authentically reflect caregiver-infant dynamics, the project supports Māori relational pedagogies, ensuring that virtual training environments support both professional skill development and Māori ways of knowing and being.

The next phase of the project aims to strengthen its cultural integration by working closely with Māori educators and researchers to ensure that whanaungatanga concepts are represented appropriately. This will involve identifying preferred tikanga-ā-whānau/iwi (family and tribal cultural practices and customs) relevant to ECE and paediatric healthcare training and embedding these into design principles. This will ensure that professionals trained using the VRBTT develop culturally responsive sensing skills that support their ability to engage meaningfully with Māori children and families across different professional environments and contexts.

Similarly, central to the BabyX project is a commitment to developing culturally sensitive assessment processes. Most research on responsiveness in caregiver-infant interactions has been conducted with caregivers and infants from the United States of America or Western Europe. As a result, existing coding schemes used to characterise caregiver-infant interactions may not be sensitive to the diverse patterns of caregiver-infant interaction that are likely to be present in Aotearoa. Recognising this limitation, BabyX’s Completing the Loop project is working with Māori and Pacific researchers to develop behavioural coding schemes that are better aligned with the New Zealand context. Further, while BabyX currently lacks the ability to express culturally nuanced behaviours and is visually represented as an ethnically white child, a key future goal is to integrate culturally diverse appearances and culturally responsive interactions.

Current efforts toward Māori representation in these projects are only a starting point. An important question moving forward is how these technologies can be adapted to further enhance their cultural responsiveness, not only for Māori but also for other cultural communities. As New Zealand and other Western countries grow increasingly diverse, it is essential to ensure that tools and technologies support, reflect and accommodate a range of cultural norms and caregiving practices. It is well established that Western parents tend to adopt more distal parenting practices, characterised by face-to-face interactions and object play, whereas parents from many non-Western societies favour more proximal parenting practices, which emphasise greater physical contact and body stimulation[81].

For example, research has shown that Western mothers more frequently communicate verbal mind-mindedness—explicitly recognising and talking about their infant’s thoughts, feelings, and desires—compared to mothers from non-Western societies[82,83], who may express mind-mindedness through non-verbal means, such as responsive physical movement or mirroring[81]. Given these differences in parenting approaches, infants likely develop varying expectations of social interactions, which they bring into professional settings.

Ensuring that simulation technologies account for these cultural variations is crucial for promoting greater inclusivity and effectiveness in professional training. For example, incorporating culturally specific interaction patterns—such as caregiver responsiveness through touch-based soothing techniques common in many non-Western cultures—could help educators recognise and respond to different caregiving norms. Future work should prioritise this area, ideally through transdisciplinary and multicultural research collaborations that ensure these tools are representative, inclusive, and meaningful across diverse caregiving environments.

6. Conclusion

Simulation has long been a vital tool for understanding and preparing for complex phenomena, evolving from simple mannequins to sophisticated software models. Each technological leap has expanded our ability to build skills and generate knowledge. The rise of empathic computing—combined with advancements in immersive and interactive technologies such as VR and virtual agents—marks a transformative step forward. These innovations enable the simulation of real-world complexity with remarkable fidelity, unlocking new possibilities in education, healthcare, psychology, and cognitive science.

The BabyX and VRBTT projects exemplify this potential by creating realistic, embodied child simulations. These projects demonstrate how empathic computing technologies can provide researchers and professionals with a safe, controlled environment to study social dynamics and sensing pedagogies that support positive adult-child interactions. While simulated children are not a substitute for real-life experience, they serve as powerful supplementary tools. By overcoming the practical limitations of working with infants and young children, these technologies enable new forms of research and training that would otherwise be difficult—if not impossible—in real-world settings.

Authors contribution

Morrison S: Conceptualisation, Writing Original Draft, review, editing.

Henderson AME, Lukosch H, White EJ, Bednarski F, Sagar M: Writing, review, editing.

All authors approved the final version of the manuscript.

Conflicts of interest

Heide Lukosch is an Associate Editor of Empathic Computing. The other authors declare that there are no conflicts of interest.

Ethical approval

Not applicable.

Consent to participate

Not applicable.

Consent for publication

Not applicable.

Availability of data and materials

Not applicable.

Funding

This work was supported by the HIT Lab NZ’s Applied Immersive Gaming Initiative, with funding from the Tertiary Education Commission NZ and the University of Canterbury (Edumis Number 7005).

Copyright

©The Author(s) 2025.

References

1. Parrott WG, Gleitman H. Infants’ expectations in play: The joy of peek-a-boo. Cogn Emot. 1989;3(4):291-311. DOI: 10.1080/02699938908412710

2. Kleeman JA. The peek-a-boo game: Part I: Its origins, meanings, and related phenomena in the first year. Psychoanal Study Child. 1967;22(1):239-273.

3. White EJ, Peter M, Redder B. Infant and teacher dialogue in education and care: a pedagogical imperative. Early Child Res Q. 2015;30:160-173.

4. Sidorkin AM. Pedagogy of relation: education after reform. 1st ed. New York: Routledge; 2022.

5. Recchia SL, Shin M, Loizou E. Relationship-based care for infants and toddlers: fostering early learning and development through responsive practice. Baltimore: Brookes Publishing Co.; 2023.

6. White EJ, Novak R, Rarere-Briggs B, Swit CS, Lukosch S. ‘Sensing’ learners through presence: learning relational pedagogies for infants using virtual reality. Video J Educ Pedagog. 2024;8(1):1-19.

7. White EJ, Novak R, Lukosch H. The promise and potential of sensing technologies: ‘feeling’ our way towards relational pedagogies in the early years. In: White E, Westbrook F, Quinones G, editors. Visual Pedagogies for the Early Years: Presence, Potential and Possibility. Brill; 2025.

8. Einarsdóttir J. Research with children: methodological and ethical challenges. Eur Early Child Educ Res J. 2007;15(2):197-211.

9. White EJ, Peter M, Sims M, Rockel J, Kumeroa M. First-year practicum experiences for preservice early childhood education teachers working with birth-to-3-year-olds: an Australasian experience. J Early Child Teach Educ. 2016;37(4):282-300.

10. White EJ. The work of the eye/i in ‘seeing’ children: visual methodologies for the early years. In: Seeing the World through Children's Eyes. Brill; 2020. p. 1-24.

11. Bienstock J, Heuer A. A review on the evolution of simulation-based training to help build a safer future. Medicine. 2022;101(25):e29503.

12. Scharf JL, Bringewatt A, Dracopoulos C, Rody A, Weichert J, Gembicki M. La Machine: obstetric phantoms of Madame du Coudray. Back to the Roots. J Med Educ Curric Dev. 2022;9:23821205221090168.

13. Hashmi S, Vanderwert RE, Price HA, Gerson SA. Exploring the benefits of doll play through neuroscience. Front Hum Neurosci. 2020;14:560176.

14. Stagnitti K, Unsworth C. The importance of pretend play in child development: an occupational therapy perspective. Br J Occup Ther. 2000;63(3):121-127.

15. Lopreiato JO, Sawyer T. Simulation-based medical education in pediatrics. Acad Pediatr. 2015;15(2):134-142.

16. Elendu C, Amaechi DC, Okatta AU, Amaechi EC, Elendu TC, Ezeh CP, et al. The impact of simulation-based training in medical education: a review. Medicine. 2024;103(27):e38813.

17. Cangelosi A, Schlesinger M. Developmental robotics: from babies to robots. Cambridge: MIT Press; 2015.

18. Flatebø S, Tran VNN, Wang CEA, Bongo LA. Social robots in research on social and cognitive development in infants and toddlers: a scoping review. PLoS One. 2024;19(5):1-22.

19. Istituto Italiano di Tecnologia. iCub Robot [Internet]. Available from: https://icub.iit.it/products/icub-robot

20. Metta G, Natale L, Nori F, Sandini G, Vernon D, Fadiga L, et al. The iCub humanoid robot: an open-systems platform for research in cognitive development. Neural Netw. 2010;23(8-9):1125-1134.

21. Natale L, Bartolozzi C, Nori F, Sandini G, Metta G. iCub. In: Goswami A, Vadakkepat P, editors. Humanoid Robotics: A Reference. Dordrecht: Springer; 2018. p. 1-33.

22. Istituto Italiano di Tecnologia. iCub [Internet]. Available from: https://icub.iit.it/

23. Sagar M, Henderson AME, Takac M, Morrison S, Knott A, Moser A, et al. Deconstructing and reconstructing turn-taking in caregiver-infant interactions: a platform for embodied models of early cooperation. J R Soc N Z. 2022;53(1):148-168.

24. Sagar M, Seymour M, Henderson A. Creating connection with autonomous facial animation. Commun ACM. 2016;59(12):82-91.

25. Sagar M, Moser A, Henderson AM. A platform for holistic embodied models of infant cognition, and its use in a model of event processing. IEEE Trans Cogn Dev Syst. 2022;15(4):1916-1927.

26. Oztop E, Bradley NS, Arbib MA. Infant grasp learning: a computational model. Exp Brain Res. 2004;158:480-503.

27. Blandón MAC, Cristia A, Räsänen O. Introducing meta-analysis in the evaluation of computational models of infant language development. Cogn Sci. 2023;47(7):e13307.

28. Wintner S. Computational models of language acquisition. In: Gelbukh A, editor. Computational Linguistics and Intelligent Text Processing; 2010 Mar 21-27; Iasi, Romania. Berlin: Springer; 2010. p. 86-99.

29. Montag JL, Jones MN, Smith LB. Quantity and diversity: simulating early word learning environments. Cogn Sci. 2018;42(S2):375-412.

30. Theuer JK, Koch NN, Gumbsch C, Elsner B, Butz MV. Infants infer and predict coherent event interactions: modeling cognitive development. PLoS One. 2024;19(10):e0312532.

31. Shultz TR, Nobandegani AS. A computational model of infant learning and reasoning with probabilities. Psychol Rev. 2022;129(6):1281-1295.

32. Spivey MJ. Cognitive science progresses toward interactive frameworks. Top Cogn Sci. 2023;15(2):219-254.

33. Sagar M, Moser A, Henderson AME, Morrison S, Pages N, Nejati A, et al. A platform for embodied models of infant cognition, and its use in a model of event perception. In: 2021 IEEE International Conference on Development and Learning (ICDL); 2021 Aug 23-26; Beijing, China. New York: IEEE; 2021. p. 1-7.

34. Mattern D, Schumacher P, López MF, Raabe MC, Ernst MR, Aubret A, et al. MIMo: a multimodal infant model for studying cognitive development. IEEE Trans Cogn Dev Syst. 2024;16(4):1291-1301.

35. Gallese V. Embodied simulation: from neurons to phenomenal experience. Phenom Cogn Sci. 2005;4:23-48.

36. Nicely P, Tamis-LeMonda CS, Bornstein MH. Mothers’ attuned responses to infant affect expressivity promote earlier achievement of language milestones. Infant Behav Dev. 1999;22(4):557-568.

37. Nicely P, Tamis-LeMonda CS, Grolnick WS. Maternal responsiveness to infant affect: stability and prediction. Infant Behav Dev. 1999;22(1):103-117.

38. Landry SH, Smith KE, Miller-Loncar CL, Swank PR. The relation of change in maternal interactive styles to the developing social competence of full-term and preterm children. Child Dev. 1998;69(1):105-123.

39. Dunst CJ, Kassow DZ. Caregiver sensitivity, contingent social responsiveness, and secure infant attachment. J Early Intensive Behav Interv. 2008;5(1):40-56.

40. Landry SH, Smith KE, Swank PR, Miller-Loncar CL. Early maternal and child influences on children’s later independent cognitive and social functioning. Child Dev. 2000;71(2):358-375.

41. Landry SH, Smith KE, Swank PR, Assel MA, Vellet S. Does early responsive parenting have a special importance for children’s development or is consistency across early childhood necessary? Dev Psychol. 2001;37(3):387-403.

42. Vygotsky LS. Mind in society: development of higher psychological processes. Cambridge: Harvard University Press; 1978.

43. Rogoff B, Wertsch JV. Children’s learning in the “zone of proximal development”. San Francisco: Jossey-Bass; 1984.

44. Bornstein MH, Tamis-LeMonda CS. Mother-infant interaction. In: Bremner G, Fogel A, editors. Blackwell handbook of infant development. Hoboken: Blackwell Publishing; 2004. p. 269-295.

45. Madigan S, Prime H, Graham SA, Rodrigues M, Anderson N, Khoury J, et al. Parenting behavior and child language: a meta-analysis. Pediatrics. 2019;144(4):e20183556.

46. Wade M, Jenkins JM, Venkadasalam VP, Binnoon-Erez N, Ganea PA. The role of maternal responsiveness and linguistic input in pre-academic skill development: a longitudinal analysis of pathways. Cogn Dev. 2018;45:125-140.

47. Tronick E, Als H, Adamson L, Wise S, Brazelton TB. The infant’s response to entrapment between contradictory messages in face-to-face interaction. J Am Acad Child Psychiatry. 1978;17(1):1-13.

48. Wang D, Guo Y, Liu S, Zhang Y, Xu W, Xiao J. Haptic display for virtual reality: progress and challenges. Virtual Real Intell Hardw. 2019;1(2):136-162.

49. Cooper N, Milella F, Pinto C, Cant I, White M, Meyer G. The effects of substitute multisensory feedback on task performance and the sense of presence in a virtual reality environment. PLoS One. 2018;13(2):e0191846.

50. Zhang Y, Fernando T, Xiao H, Travis ARL. Evaluation of auditory and visual feedback on task performance in a virtual assembly environment. Presence: Virtual Augment Real. 2006;15(6):613-626.

51. Biocca F, Kim J, Choi Y. Visual touch in virtual environments: an exploratory study of presence, multimodal interfaces, and cross-modal sensory illusions. Presence: Virtual Augment Real. 2001;10(3):247-265.

52. Hamilton D, McKechnie J, Edgerton E, Wilson C. Immersive virtual reality as a pedagogical tool in education: a systematic literature review of quantitative learning outcomes and experimental design. J Comput Educ. 2021;8(1):1-32.

53. Slater M. Immersion and the illusion of presence in virtual reality. Br J Psychol. 2018;109(3):431-433.

54. White EJ, Redder B. A dialogic approach to understanding infant interactions. In: Gunn AC, Hruska CA, editors. Interactions in Early Childhood Education. Singapore: Springer Singapore; 2017. p. 81-98.

55. Thelen E, Smith LB. A dynamic systems approach to the development of cognition and action. Cambridge: MIT Press; 1994.

56. Johnsen K, Raij A, Stevens A, Lind DS, Lok B. The validity of a virtual human experience for interpersonal skills education. In: Proceedings of the SIGCHI Conference on Human Factors in Computing Systems; 2007 Apr 28; California, USA. New York: Springer; 2007. p. 1049-1058.

57. Bloom BS, Krathwohl DR. Taxonomy of educational objectives; the classification of educational goals. New York: Longmans, Green; 1956.

58. Knott A, Sagar M, Takac M. The ethics of interaction with neurorobotic agents: a case study with BabyX. AI Ethics. 2022;2(1):115-128.

59. Slater M, Antley A, Davison A, Swapp D, Guger C, Barker C, et al. A virtual reprise of the Stanley Milgram obedience experiments. PLoS One. 2006;1(1):1-10.

60. Greitemeyer T. The dark and bright side of video game consumption: effects of violent and prosocial video games. Curr Opin Psychol. 2022;46:101326.

61. Drummond A, Sauer JD, Ferguson CJ. Do longitudinal studies support long-term relationships between aggressive game play and youth aggressive behaviour? A meta-analytic examination. R Soc Open Sci. 2020;7(7):200373.

62. Kuhn S, Kugler DT, Schmalen K, Weichenberger M, Witt C, Gallinat J. Does playing violent video games cause aggression? A longitudinal intervention study. Mol Psychiatry. 2019;24(8):1220-1234.

63. Przybylski AK, Weinstein N. Violent video game engagement is not associated with adolescents’ aggressive behaviour: evidence from a registered report. R Soc Open Sci. 2019;6(2):171474.

64. Christensen LS, Moritz D, Pearson A. Psychological perspectives of virtual child sexual abuse material. Sexuality Cult. 2021;25(4):1353-1365.

65. Levy N. Virtual child pornography: the eroticization of inequality. Ethics Inf Technol. 2002;4:319-323.

66. Reeves C. The virtual simulation of child sexual abuse: online gameworld users’ views, understanding and responses to sexual ageplay. Ethics Inf Technol. 2018;20(2):101-113.

67. Barlett CP, Rodeheffer C. Effects of realism on extended violent and nonviolent video game play on aggressive thoughts, feelings, and physiological arousal. Aggress Behav. 2009;35(3):213-224.

68. Persky S, Blascovich J. Immersive virtual environments versus traditional platforms: effects of violent and nonviolent video game play. Media Psychol. 2007;10(1):135-156.

69. Floridi L, Sanders JW. On the morality of artificial agents. Minds Mach. 2004;14:349-379.

70. Sullins JP. When is a robot a moral agent? In: Anderson M, Anderson SL, editors. Machine Ethics. New York: Cambridge University Press; 2011. p. 151-161.

71. Gunkel DJ. The machine question: critical perspectives on AI, robots, and ethics. Cambridge: MIT Press; 2018.

72. Gunkel DJ. Robot rights. Cambridge: MIT Press; 2018.

73. Danaher J. Welcoming robots into the moral circle: a defence of ethical behaviourism. Sci Eng Ethics. 2020;26(4):2023-2049.

74. Harris J, Anthis JR. The moral consideration of artificial entities: a literature review. Sci Eng Ethics. 2021;27(4):53.

75. Jaworska A, Tannenbaum J. The grounds of moral status. In: Zalta EN, Nodelman U, editors. The Stanford Encyclopedia of Philosophy. Stanford: Stanford University Press; 2023.

76. DeGrazia D. Moral status as a matter of degree? South J Philos. 2008;46(2):181-198.

77. Singer P. Speciesism and moral status. Metaphilosophy. 2009;40(3-4):567-581.

78. Warren MA. Moral status: obligations to persons and other living things. United Kingdom: Oxford University Press; 2000.

79. Lindsey L, Berger N. Experiential approach to instruction. In: Reigeluth CM, Carr-Chellman AA, editors. Instructional-Design Theories and Models, Volume III. United Kingdom: Routledge; 2009. p. 129-154.

80. Hirumi A, Johnson T, Reyes RJ, Lok B, Johnsen K, Rivera-Gutierrez DJ, et al. Advancing virtual patient simulations through design research and interPLAY: part II-integration and field test. Educ Tech Res Dev. 2016;64:1301-1335.

81. Bigelow AE. Call for non-verbal mind-mindedness measures for use in infancy and across cultures. Child Dev Perspect. 2025.

82. Bozicevic L, Hill J, Chandra PS, Omirou A, Holla C, Wright N, et al. Cross-cultural differences in early caregiving: levels of mind-mindedness and instruction in UK and India. Front Child Adolesc Psychiatry. 2023;2:1124883.

83. Dai Q, McMahon C, Lim AK. Cross-cultural comparison of maternal mind-mindedness among Australian and Chinese mothers. Int J Behav Dev. 2020;44(4):365-370.

Copyright

© The Author(s) 2025. This is an Open Access article licensed under a Creative Commons Attribution 4.0 International License (https://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, sharing, adaptation, distribution and reproduction in any medium or format, for any purpose, even commercially, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.
