Abstract

Affective Computing aims to build systems that can sense, interpret, and influence human emotions. In parallel, virtual reality (VR) has matured into a powerful medium for immersion, presence, and interaction. When combined, these two technologies enable a new generation of emotionally adaptive systems that can recognize users’ inner states and react in real time. In this article, we explore the opportunities and challenges of emotion recognition and emotion elicitation in VR, focusing on the Virtual Emotion, Elicitation and Recognition Loop (VEE-Loop), a closed-loop framework in which virtual environments adapt based on recognized and targeted emotions. We discuss applications in manufacturing, product design, and education, and highlight key ethical and privacy concerns, especially in light of recent regulatory initiatives such as the Regulation on Artificial Intelligence proposed by the European Commission (EU AI Act). Finally, we outline open research directions toward responsible, privacy-aware, and scalable affective VR systems, suggesting that the convergence of VR and Affective Computing can become a crucial foundation for the next generation of human-centric interactive technologies.

Keywords

1. Introduction

Emotions are not just background noise in human life: they shape attention, learning, decision making, and social interaction. They influence which information we notice, how we interpret ambiguous situations, and when we decide to keep going or give up. For decades, Affective Computing has tried to capture this emotional dimension with computational systems by asking the question: can machines sense and respond to how we feel? While the definitive answer is still taking shape, progress in sensing, modeling, and interpreting affective signals has been substantial, leveraging advances in machine learning, physiological sensing, and multimodal signal fusion[1,2].

At the same time, virtual reality (VR) has evolved from a niche curiosity into a widely used vehicle for education, training, gaming, design, and prototyping[3,4]. VR offers three ingredients that are particularly attractive for Affective Computing. First, it provides a strong experience of immersion, as users feel surrounded and enveloped by a virtual world rather than simply looking at a screen. Second, it supports a sense of presence, meaning that the virtual world is experienced as “real enough” for the mind to react with genuine emotions, despite the user knowing that everything is simulated[5]. Recent findings confirm that VR can effectively generate emotional states that are comparable in intensity to real-world affective experiences[6-8]. Third, virtual environments are highly adaptable: VR worlds can be modified on the fly in response to user behavior, performance, or emotional state, without the physical constraints that apply to the real world.

When we combine these two disciplines, we obtain a powerful setting: systems that can recognize the user’s emotional state and adapt the VR simulation accordingly, for example, to facilitate mental well-being, personalize training, or boost user experience[9]. VR thus becomes not only a display technology, but also a laboratory for emotional reactions where experiences can be carefully designed, repeated, and modified in ways that are difficult or impossible in the physical world.

In this article, we introduce the basic concepts of emotion recognition (ER) and emotion elicitation (EE), discuss how VR changes the landscape for Affective Computing, highlighting both opportunities and challenges, and present the Virtual Emotion, Elicitation and Recognition Loop (VEE-Loop)[10,11] as a conceptual framework for closing the loop between ER and EE within VR. We then explain how this loop can be instantiated across a range of application domains, specifically manufacturing, product design, and education. Finally, we discuss privacy, ethical, and regulatory considerations, particularly regarding biometric and affective data[12,13], and outline future research directions toward responsible, privacy-preserving, and scalable affective VR systems.

2. Affective Computing Meets VR

Affective Computing and VR have traditionally developed as largely independent research areas. ER research focuses on inferring human affective states from behavioral and physiological signals, while EE studies how specific stimuli can induce emotional responses. VR research, in contrast, has primarily investigated immersion, interaction, and presence within computer-generated environments. The convergence of these domains introduces a new paradigm: emotions are no longer only measured or elicited in isolation, but become embedded within interactive digital environments that simultaneously sense, interpret, and influence users’ affective states. In VR systems, ER and EE can be integrated into a continuous feedback loop where the user’s emotional responses are monitored in real time, and the virtual environment adapts accordingly. This integration transforms VR into a powerful experimental and technological platform for Affective Computing, enabling emotionally adaptive and context-aware interactive experiences.

2.1 ER in VR

ER refers to the computational process of inferring an individual’s affective state from observable behavioral or physiological signals. In Affective Computing, these states are typically modeled either as discrete emotion categories (e.g., joy, fear, anger) or as continuous dimensions such as valence and arousal[14-16]. The underlying assumption is that emotions produce measurable signatures across multiple expressive channels, including facial activity, speech, body movements, and autonomic physiological responses.

Traditional ER pipelines consist of multiple stages, including signal acquisition, preprocessing, feature extraction, and machine learning-based inference.
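To make these stages concrete, the following minimal Python sketch chains them for a single window of heart-rate (HR) samples. The function names, the moving-average filter, the three summary features, and the threshold rule that stands in for a trained classifier are all illustrative assumptions, not a prescribed implementation.

```python
from statistics import mean, pstdev


def preprocess(signal, window=3):
    """Smooth a raw signal with a centered moving average (a stand-in for filtering)."""
    half = window // 2
    smoothed = []
    for i in range(len(signal)):
        lo, hi = max(0, i - half), min(len(signal), i + half + 1)
        smoothed.append(mean(signal[lo:hi]))
    return smoothed


def extract_features(signal):
    """Compute a few window-level summary features."""
    return {
        "mean": mean(signal),
        "std": pstdev(signal),
        "delta": signal[-1] - signal[0],
    }


def infer_arousal(features, baseline_mean, rel_threshold=0.1):
    """Hypothetical placeholder for a trained model: flag 'high' arousal when the
    window mean exceeds the user's resting baseline by more than rel_threshold."""
    relative_rise = (features["mean"] - baseline_mean) / baseline_mean
    return "high" if relative_rise > rel_threshold else "low"


def er_pipeline(raw_window, baseline_mean):
    """Acquired samples -> preprocessing -> feature extraction -> inference."""
    clean = preprocess(raw_window)
    features = extract_features(clean)
    return infer_arousal(features, baseline_mean)


# One window of HR samples (bpm) compared against a resting baseline of 70 bpm.
print(er_pipeline([70, 72, 85, 90, 92, 95], baseline_mean=70))  # prints: high
```

In a real system each stage would be replaced by validated components (e.g., band-pass filtering and a learned classifier), but the separation into stages stays the same.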

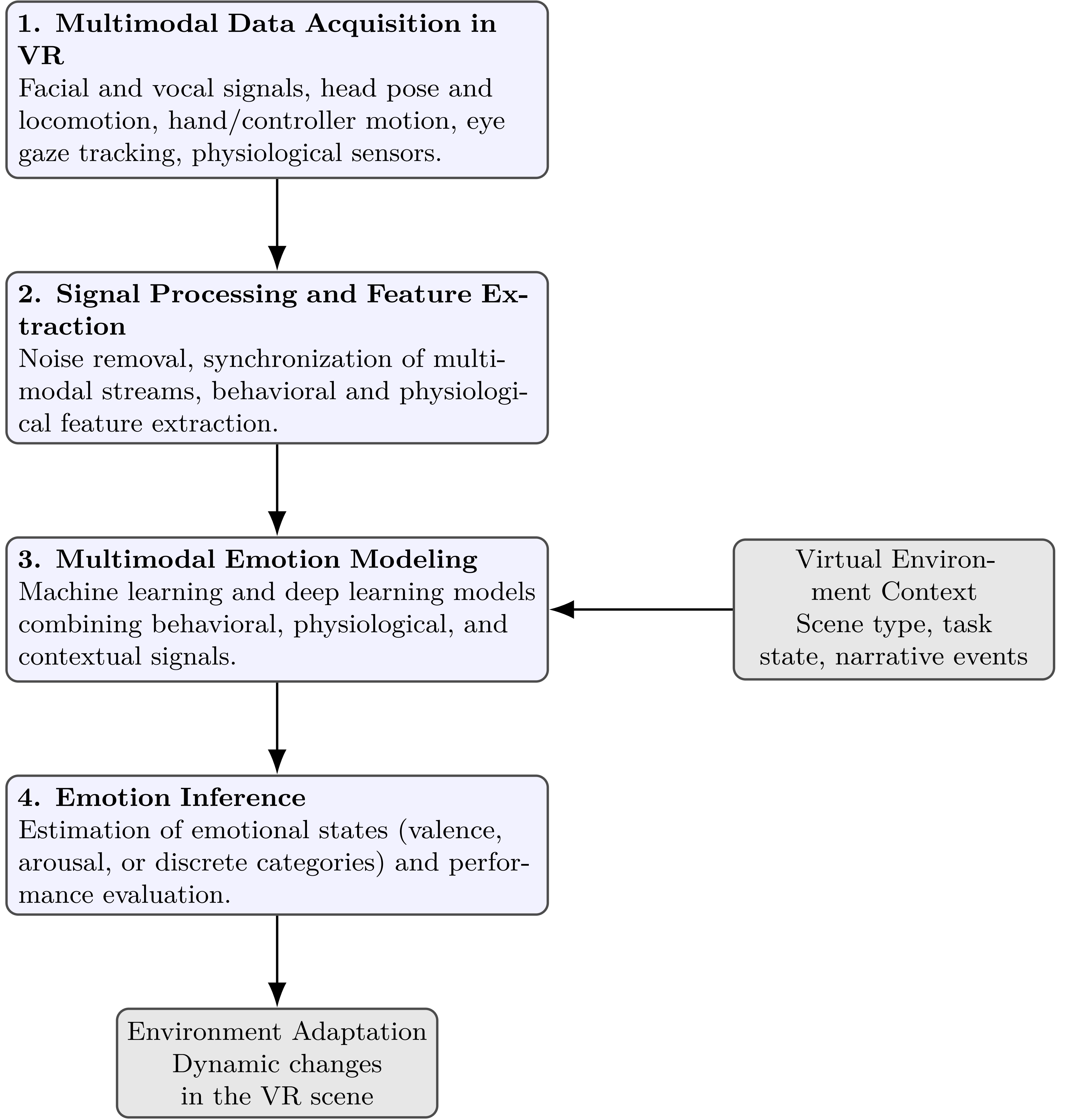

However, when ER is deployed within VR environments, the nature of available signals and contextual information changes significantly. In addition to traditional physiological or audiovisual signals, VR systems provide continuous behavioral telemetry describing how users move, explore, and interact with the environment. These additional signals enable richer interpretations of affective states within interactive contexts (see Figure 1).

Figure 1. ER pipeline within a VR environment, integrating behavioral telemetry and contextual information from the virtual scene. ER: emotion recognition; VR: virtual reality.

VR environments introduce new sensing opportunities that extend beyond traditional ER modalities. Modern head-mounted displays and motion controllers continuously capture behavioral telemetry such as head orientation, body movement, hand interactions, gaze direction, and locomotion patterns. These signals provide rich information about how users explore and interact with the environment, enabling the detection of subtle affect-related behaviors such as avoidance, approach tendencies, or exploratory patterns.
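As an illustration of how an affect-related behavior such as approach or avoidance might be derived from head-tracking telemetry, the sketch below computes the mean radial speed of the head toward a virtual object. The coordinate convention, sampling interval, and dead-zone threshold are assumptions chosen for illustration; real systems would typically combine several such features and validate them against self-report.

```python
import math


def approach_index(positions, target, dt):
    """Mean radial speed (m/s) toward a target object.

    positions: tracked head positions as (x, y, z) tuples in meters, one every dt seconds.
    Positive values indicate approach, negative values indicate avoidance.
    """
    if len(positions) < 2:
        raise ValueError("need at least two position samples")
    dists = [math.dist(p, target) for p in positions]
    radial_speeds = [(dists[i] - dists[i + 1]) / dt for i in range(len(dists) - 1)]
    return sum(radial_speeds) / len(radial_speeds)


def tendency(index, dead_zone=0.05):
    """Coarse label with a dead zone so that small tracking jitter is not over-interpreted."""
    if index > dead_zone:
        return "approach"
    if index < -dead_zone:
        return "avoidance"
    return "neutral"


# A user stepping back from a virtual object placed at (0, 0, 2), sampled at 10 Hz.
path = [(0.0, 0.0, 1.0), (0.0, 0.0, 0.9), (0.0, 0.0, 0.8), (0.0, 0.0, 0.7)]
idx = approach_index(path, target=(0.0, 0.0, 2.0), dt=0.1)
print(round(idx, 2), tendency(idx))
```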

Another key characteristic of ER in VR is the importance of contextual information. Emotional responses cannot be interpreted independently from the surrounding scenario; the same physiological response can correspond to excitement in a game environment or anxiety in a threatening simulation. Consequently, VR-based ER systems increasingly incorporate contextual features derived from the virtual environment itself, such as scene characteristics, task progression, or interactions with virtual agents.
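A minimal sketch of how contextual features might enter an ER model: scene information reported by the VR engine is appended to the physiological feature vector, and a toy rule shows how the same arousal level maps to different labels depending on the scene. The scene tags, the one-hot encoding, and the rule itself are hypothetical; in practice a learned model would consume the combined vector.

```python
from dataclasses import dataclass

SCENE_TYPES = ("game", "threat_simulation", "neutral_lobby")  # hypothetical scene tags


@dataclass
class SceneContext:
    scene_type: str       # one of SCENE_TYPES, as reported by the VR engine
    task_progress: float  # fraction of the current task completed (0.0 to 1.0)


def contextual_feature_vector(physio_features, context):
    """Append a one-hot scene type and task progress to physiological features."""
    one_hot = [1.0 if context.scene_type == s else 0.0 for s in SCENE_TYPES]
    return list(physio_features) + one_hot + [context.task_progress]


def interpret_arousal(arousal, context, threshold=0.6):
    """Toy rule: identical high arousal is labeled according to the scene."""
    if arousal < threshold:
        return "low arousal"
    readings = {"game": "excitement", "threat_simulation": "anxiety"}
    return readings.get(context.scene_type, "high arousal (valence unresolved)")


for scene in ("game", "threat_simulation"):
    print(scene, interpret_arousal(0.8, SceneContext(scene, task_progress=0.5)))
```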

Finally, VR enables the development of real-time affective feedback loops. Once the system estimates the user’s emotional state, the virtual environment can be dynamically modified to respond to it, for example by adjusting environmental conditions, narrative progression, or the behavior of virtual characters.

2.2 EE in VR

EE is the complementary process to ER: instead of inferring an existing emotional state, EE aims to induce specific affective responses through carefully designed stimuli[17,18].

Traditional EE paradigms often rely on passive exposure to images, videos, or audio stimuli. While these methods have proven effective in controlled laboratory settings, they provide limited opportunities for interaction and often fail to reproduce the complexity of real-world emotional experiences. VR significantly expands these possibilities by transforming emotional stimuli into immersive and interactive environments.

In VR-based EE, emotional stimuli emerge from interactive scenarios rather than static media. Users can navigate virtual spaces, manipulate objects, and interact with virtual characters, creating emotionally meaningful experiences through embodied participation. This active engagement can produce stronger and more ecologically valid emotional responses than conventional laboratory stimuli.

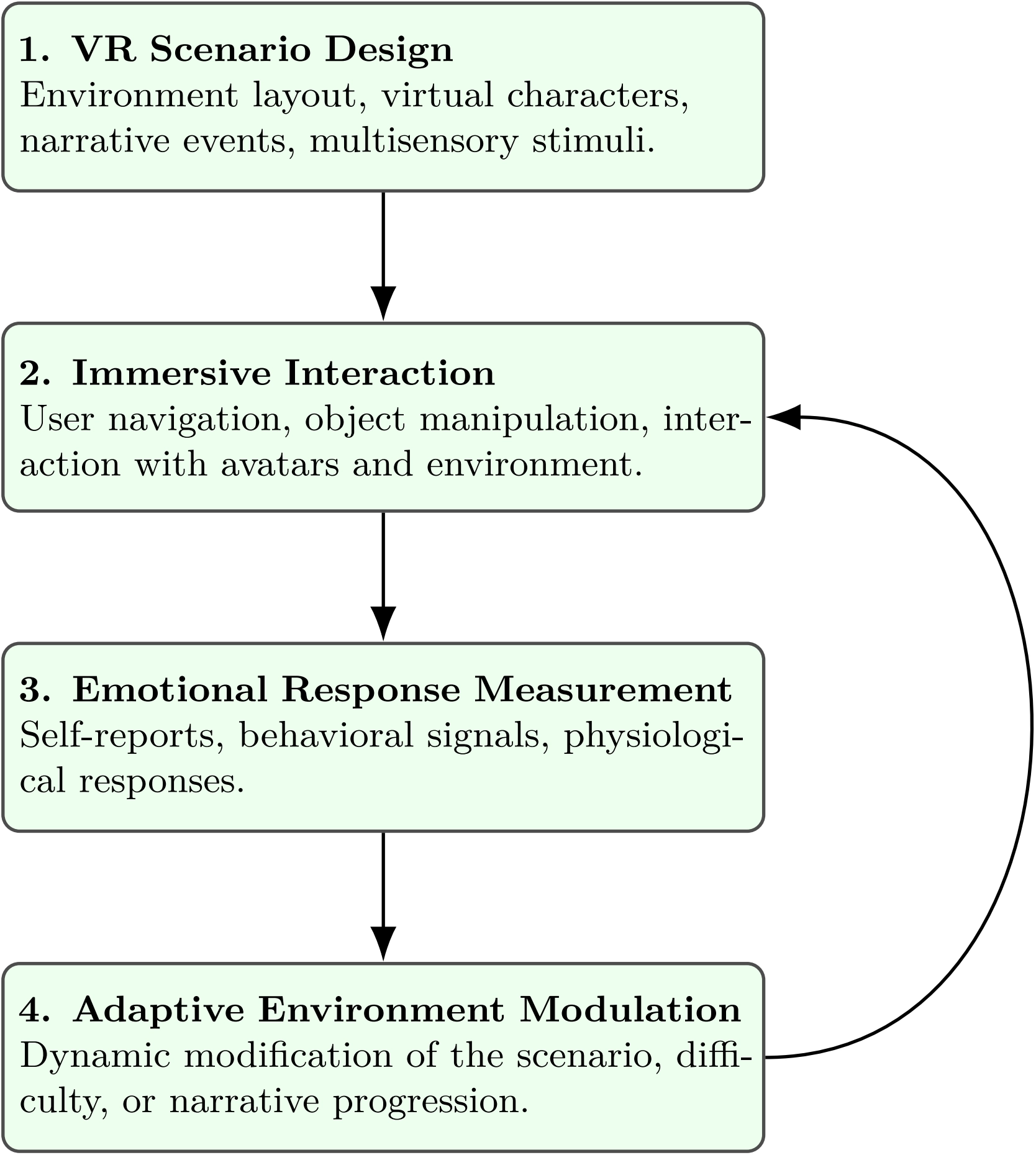

Furthermore, VR enables adaptive EE, where the environment dynamically adjusts its stimuli based on the user’s ongoing responses. For example, exposure therapy applications may gradually increase the intensity of feared stimuli, while training simulations may regulate difficulty to maintain engagement without inducing excessive stress. As illustrated in Figure 2, this closed-loop pipeline allows emotional responses to be measured and used to dynamically adapt the virtual environment.

Figure 2. EE pipeline in VR. Emotional responses can be measured and used to dynamically adapt the environment, enabling closed-loop affective interaction. EE: emotion elicitation; VR: virtual reality.
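The exposure-therapy example above can be sketched as a simple staircase rule: stimulus intensity is raised one level only after the user has stayed below an arousal floor for several consecutive measurement windows, and lowered whenever arousal exceeds a ceiling. The class name, thresholds, and dwell count below are illustrative assumptions rather than a clinical protocol, which would require clinician supervision.

```python
from dataclasses import dataclass, field


@dataclass
class GradedExposure:
    """Staircase rule for gradual exposure (illustrative, not a clinical protocol)."""

    max_level: int      # highest stimulus intensity level
    floor: float        # arousal below this counts as a calm window
    ceiling: float      # arousal above this triggers a step back
    dwell: int = 3      # consecutive calm windows required before escalating
    level: int = 0      # current intensity level
    _calm_windows: int = field(default=0, init=False, repr=False)

    def update(self, arousal: float) -> int:
        """Consume one arousal estimate (0 to 1) and return the next intensity level."""
        if arousal > self.ceiling:
            self.level = max(self.level - 1, 0)
            self._calm_windows = 0
        elif arousal < self.floor:
            self._calm_windows += 1
            if self._calm_windows >= self.dwell:
                self.level = min(self.level + 1, self.max_level)
                self._calm_windows = 0
        else:
            self._calm_windows = 0  # moderate arousal: hold level, restart the count
        return self.level


session = GradedExposure(max_level=5, floor=0.3, ceiling=0.7, dwell=2)
print([session.update(a) for a in (0.2, 0.2, 0.8, 0.2, 0.5, 0.2, 0.2)])
```

Requiring several calm windows before escalating is one way to encode habituation; a single calm reading would otherwise escalate too quickly.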

2.3 Toward closed-loop affective virtual environments

When ER and EE are integrated within VR platforms, they form the basis of closed-loop affective systems. In such systems, the virtual environment continuously senses the user’s behavioral and physiological signals, estimates their emotional state, and dynamically adjusts the experience to influence or regulate the user’s affective response.

This closed-loop paradigm transforms VR from a passive presentation medium into an emotion-aware interactive system. Such systems have potential applications in mental health therapy, education and training, entertainment, and human–computer interaction research. At the same time, the integration of continuous sensing and adaptive interaction raises important methodological and ethical challenges, including issues related to privacy, data management, and responsible use of emotionally sensitive information.

3. VR for ER: Opportunities and Challenges

Table 1 summarizes the main opportunities and challenges associated with the use of VR for ER. The table is not intended to represent a strict one-to-one correspondence between individual opportunities and challenges. Rather, the two columns provide complementary perspectives on the same technological landscape: the factors that enable new methodological capabilities often introduce additional technical or methodological complexity. The side-by-side layout is therefore meant to facilitate comparison between enabling factors and the corresponding research difficulties that must be addressed.

| Opportunities | Challenges |
|---|---|
| Contextual grounding: Precise control of scenes, events, and tasks enables more meaningful label interpretation and reduced contextual ambiguity. | Dynamic emotional transitions: Rapid, non-linear affective changes require robust temporal modeling and fine-grained segmentation. |
| Controlled and repeatable experiences: Identical VR scenarios across participants improve reproducibility and experimental rigor. | Multimodal integration complexity: Fusion of physiological, behavioral, and kinematic data involves heterogeneous sampling rates, noise, and real-time constraints. |
| Embodied interaction: Full-body movement, posture, and object interaction provide rich behavioral cues unavailable in 2D setups. | Ground-truth ambiguity: Hard to define emotion onset/offset in interactive experiences; continuous annotations are noisy and difficult to align. |
| Native multimodal data fusion: Synchronized VR sensor streams support scalable, real-time ER. | Measurement artifacts: Motion-induced noise, tracking loss, and optical interference degrade physiological and gaze signals. |
| Authentic expression and personalization: Immersion reduces self-consciousness and enables individualized ER models. | High computational load: Real-time inference with multimodal signals requires efficient models and robust resource management. |

VR: virtual reality; ER: emotion recognition.

3.1 Opportunities

VR can considerably enhance the methodological and technical foundations of ER by providing controlled, immersive, and context-rich environments that, in many experimental contexts, are difficult to achieve in traditional laboratory setups[1,9,19]. One major opportunity lies in contextual grounding. In conventional ER, similar facial expressions or physiological responses can correspond to different inner states depending on situational factors. VR enables precise control over environmental context, task structure, and event timing, thereby reducing contextual ambiguity and enabling more semantically grounded labels[1,19].

A second opportunity is the ability to deliver controlled and repeatable experiences. VR allows researchers to replay identical scenarios across participants, including spatial layouts, event timing, and audiovisual stimuli, which can improve reproducibility under well-controlled conditions; however, individual behavioral differences or interaction idiosyncrasies may still introduce variability[18,20].

Third, VR promotes embodied interaction. Traditional ER paradigms often rely on face- or voice-based signals, whereas VR treats body posture, movements, and spatial displacements as integral components of interaction. These behaviors offer rich affective cues, enabling ER models to draw on gestures, movement patterns, and reflexive body reactions as indicators of emotional state[9,21].

Another key opportunity lies in multimodal data fusion. Modern VR systems combine head and hand tracking, eye and gaze metrics, facial expression estimation, and, through integrated or companion sensors, physiological measurements in a common, time-synchronized data stream. This greatly simplifies multimodal ER pipelines and supports high-resolution temporal modeling[17,22]. VR thus makes multimodal emotional sensing more scalable, practical, and aligned with real-time applications.

Finally, VR can promote authentic expression and personalized calibration. Immersion can reduce self-awareness and performative bias in many users, allowing more spontaneous reactions compared to traditional observation methods[23], though this effect may vary depending on prior VR experience, scenario design, and individual differences. Over multiple sessions, VR systems can learn individual emotional baselines and expressive idiosyncrasies, leading to more robust, user-adaptive ER models[1,23].
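One common way to account for individual baselines is to z-score each feature against a per-user running mean and standard deviation accumulated over sessions. The sketch below uses Welford's online algorithm so that the baseline can be updated incrementally; using heart rate as the feature and the population variance are assumptions made for illustration.

```python
class UserBaseline:
    """Per-user running baseline for one feature, updated with Welford's algorithm."""

    def __init__(self):
        self.n = 0
        self.mean = 0.0
        self._m2 = 0.0  # sum of squared deviations from the running mean

    def update(self, x):
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self._m2 += delta * (x - self.mean)

    def std(self):
        """Population standard deviation of the samples seen so far."""
        return (self._m2 / self.n) ** 0.5 if self.n > 1 else 0.0

    def zscore(self, x):
        s = self.std()
        return 0.0 if s == 0.0 else (x - self.mean) / s


# Two users with different resting heart rates (bpm) observe the same raw value of 80 bpm.
user_a, user_b = UserBaseline(), UserBaseline()
for hr in (60, 62, 64, 66, 68):
    user_a.update(hr)
for hr in (76, 78, 80, 82, 84):
    user_b.update(hr)
print(round(user_a.zscore(80), 2), round(user_b.zscore(80), 2))
```

The same raw reading is strongly elevated for one user and unremarkable for the other, which is why user-adaptive normalization can make ER models more robust.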

3.2 Challenges

Despite its advantages, VR also introduces new methodological and technical challenges for ER. A first challenge emerges from dynamic emotional transitions. In interactive VR environments, emotions can change rapidly in response to user actions or system events. ER models must therefore operate on dense time series, capture both gradual and abrupt emotional shifts, and keep multimodal streams correctly aligned[24].

A second challenge concerns multimodal signal integration. The real-time fusion of heterogeneous signals, such as physiological data, motion, gaze, facial cues, and contextual information, is both computationally demanding and prone to errors. Sensors operate at different sampling rates and may intermittently fail, requiring fusion models that are latency-aware, robust to missing data, and able to perform online inference[9,22].
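One simple way to address these requirements is sample-and-hold alignment onto a common clock followed by late fusion: each stream contributes its most recent sample only if that sample is fresh enough, and the fused estimate is a weighted average over whichever modalities are currently available. The per-modality arousal scores, weights, and staleness limit below are assumptions; learned fusion models are a common alternative.

```python
from bisect import bisect_right


def latest_value(timestamps, values, t, max_age):
    """Most recent sample at or before time t, or None if missing or older than max_age."""
    i = bisect_right(timestamps, t) - 1
    if i < 0 or t - timestamps[i] > max_age:
        return None
    return values[i]


def align(streams, query_times, max_age):
    """Resample streams (name -> (sorted timestamps, values)) onto shared query times."""
    return [
        {name: latest_value(ts, vs, t, max_age) for name, (ts, vs) in streams.items()}
        for t in query_times
    ]


def late_fusion(frame, weights):
    """Weighted mean of available modality scores; weights renormalized over present ones."""
    present = {name: v for name, v in frame.items() if v is not None}
    if not present:
        return None
    total = sum(weights[name] for name in present)
    return sum(weights[name] * v for name, v in present.items()) / total


# EDA-derived scores at 4 Hz; gaze-derived scores stop after t = 0.5 s (tracking loss).
streams = {
    "eda": ([0.0, 0.25, 0.5, 0.75, 1.0], [0.2, 0.3, 0.4, 0.5, 0.6]),
    "gaze": ([0.0, 0.5], [0.8, 0.6]),
}
frames = align(streams, query_times=[0.5, 1.0], max_age=0.3)
print([late_fusion(f, {"eda": 1.0, "gaze": 1.0}) for f in frames])
```

Because missing or stale modalities are skipped rather than imputed, the fused output degrades gracefully during sensor dropouts.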

A third difficulty pertains to ground-truth acquisition and labeling. In immersive and interactive experiences, the onset and offset of emotional episodes can be ambiguous. Continuous annotation tools can help, but they are subject to annotator bias, temporal lag, and inconsistencies across individuals[1,20]. As scenarios become more complex and emotionally rich, annotation protocols must be redesigned to balance realism with accuracy.
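Annotator lag is often handled by estimating a delay and shifting the annotation trace accordingly. The sketch below picks the lag (in samples) that maximizes the Pearson correlation between a reference signal and a continuous annotation. Assuming a single constant lag and using a physiological signal as the reference are simplifications; the latter also risks circularity if the same signal is later used to train the ER model.

```python
def pearson(x, y):
    """Pearson correlation of two equal-length sequences (0.0 if either is constant)."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return 0.0
    return sxy / (sxx * syy) ** 0.5


def estimate_lag(reference, annotation, max_lag):
    """Delay (in samples) by which the annotation trails the reference.

    Both sequences must have the same length, and max_lag must leave at least two samples.
    """
    best_lag, best_r = 0, float("-inf")
    for lag in range(max_lag + 1):
        r = pearson(reference[: len(reference) - lag], annotation[lag:])
        if r > best_r:
            best_lag, best_r = lag, r
    return best_lag


def compensate(annotation, lag):
    """Shift the annotation earlier by `lag` samples, padding the end with its last value."""
    return annotation[lag:] + [annotation[-1]] * lag


reference = [0, 0, 1, 3, 2, 0, 0, 0, 1, 0]
annotation = [0, 0] + reference[:-2]  # the annotator responds two samples late
lag = estimate_lag(reference, annotation, max_lag=4)
print(lag, compensate(annotation, lag))
```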

Finally, VR introduces significant measurement artifacts. Headset motion, sensor displacement, and optical interference can degrade physiological signals such as heart rate (HR), electrodermal activity (EDA), or electroencephalography (EEG), as well as eye-tracking quality. Motion-induced noise, inertial drift, and hardware heterogeneity further complicate preprocessing and model reliability[21,22]. Adaptive sensor calibration and robust artifact mitigation are therefore essential for dependable VR-based ER.
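A basic form of artifact mitigation is motion gating: physiological samples recorded while the headset reports fast head rotation are discarded, and the resulting gaps are filled by linear interpolation. The angular-speed threshold and the choice of linear interpolation are illustrative assumptions; long gaps may be better left marked as missing than filled.

```python
def motion_gate(signal, angular_speed, threshold):
    """Drop (set to None) samples recorded while head angular speed exceeded threshold."""
    return [s if w <= threshold else None for s, w in zip(signal, angular_speed)]


def interpolate_gaps(signal):
    """Fill None entries by linear interpolation between the nearest valid neighbors;
    leading or trailing gaps are filled with the nearest valid value."""
    known = [i for i, v in enumerate(signal) if v is not None]
    if not known:
        return list(signal)
    filled = list(signal)
    for i, v in enumerate(signal):
        if v is not None:
            continue
        prev = max((k for k in known if k < i), default=None)
        nxt = min((k for k in known if k > i), default=None)
        if prev is None:
            filled[i] = signal[nxt]
        elif nxt is None:
            filled[i] = signal[prev]
        else:
            w = (i - prev) / (nxt - prev)
            filled[i] = signal[prev] + w * (signal[nxt] - signal[prev])
    return filled


# EDA samples (microsiemens) with a spike during a fast head turn (angular speed in rad/s).
eda = [1.0, 2.0, 9.0, 9.0, 5.0]
head_speed = [0.1, 0.2, 3.0, 2.5, 0.1]
print(interpolate_gaps(motion_gate(eda, head_speed, threshold=1.0)))
```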

3.3 Summary

In summary, VR provides a powerful technological framework for advancing ER research by enabling richer contextual grounding, embodied interaction, and synchronized multimodal sensing. At the same time, the same properties that make VR attractive, such as high interactivity, complex sensor ecosystems, and dynamic environments, also introduce new methodological challenges related to temporal modeling, data integration, and annotation reliability. Future research will need to address these challenges through improved multimodal fusion techniques, standardized annotation protocols, and robust sensing pipelines capable of operating reliably in highly dynamic immersive environments.

4. VR for EE: Opportunities and Challenges

Table 2 summarizes the main opportunities and challenges associated with the use of VR for EE. As in the previous section, the two columns do not represent a strict one-to-one mapping; instead they highlight complementary aspects of the same technological landscape. Many of the opportunities enabled by immersive environments introduce new design, methodological, or ethical considerations that must be addressed when deploying VR-based elicitation systems.

| Opportunities | Challenges |
|---|---|
| High ecological validity: VR enables realistic, interactive, and immersive scenarios difficult or impossible to reproduce physically, improving emotional authenticity. | Calibrating emotional intensity: Difficult to balance effective elicitation with user safety; risks of overwhelming or insufficient stimuli across diverse populations. |
| Balance of realism and experimental control: VR maintains strict control over spatial, temporal, and narrative variables while enabling natural interaction. | Physical and simulator effects: Cybersickness, discomfort, and sensor misalignment can confound emotional and physiological measurements. |
| Consistency and reproducibility: Identical core scenarios can be presented across sessions and participants, improving cross-study comparability. | Ambiguity in interpretation: Hard to distinguish desired emotional reactions from unintended responses to interface friction or motion artifacts. |
| Personalization and adaptivity: Stimuli can adapt in real time based on physiological or behavioral signals, enabling closed-loop EE. | Ethical and psychological safety: Strong negative emotions raise consent, exposure, and post-session care issues, especially outside controlled labs. |
| Safe simulation of dangerous or sensitive scenarios: Emergency, industrial, or socially delicate situations can be recreated safely. | Data protection concerns: VR systems capture extensive behavioral, spatial, and physiological data requiring strict privacy safeguards. |

VR: virtual reality; EE: emotion elicitation.

4.1 Opportunities

VR is an increasingly powerful medium for EE, offering, for certain tasks, greater experiential richness and ecological validity while maintaining experimental control; effectiveness may vary depending on scenario design, task type, and user engagement[17,18,25]. A first major opportunity lies in VR’s ability to balance realism with experimental control. Classical EE techniques typically rely on static or pre-recorded stimuli (e.g., images, short videos, sound clips), which are easy to standardize but often feel artificial, detached from context, and limited in their ability to evoke strong or sustained affective responses. In contrast, VR environments allow researchers to precisely orchestrate the spatial, temporal, and narrative structure of an experience while simultaneously enabling naturalistic user interaction. For example, a fear-inducing scene need not merely present a threatening stimulus; it can allow the participant to approach, avoid, or manipulate it, producing richer behavioral and emotional responses[26]. This interactivity contributes directly to enhanced ecological validity, enabling the simulation of situations that would be infeasible, dangerous, or unethical to reproduce in the physical world (such as emergency evacuations, industrial hazards, or socially sensitive environments) while preserving many of the sensory and embodied cues that drive authentic emotional reactions[25]. In specific experimental setups, VR can thus combine safety, reproducibility, and emotional potency, narrowing the gap between laboratory-based elicitation and some aspects of everyday experience, although generalization to real-world contexts should be made cautiously[26].

A further opportunity concerns consistency and adaptivity. VR systems can deliver identical baseline scenarios across participants, improving reproducibility and between-group comparisons, yet they can also personalize stimulus dynamics (adjusting pacing, challenge level, or intensity) to match each user’s abilities, preferences, or tolerance[18]. Moreover, VR enables closed-loop elicitation: environments can adapt in real time based on behavioral or physiological responses, sustaining a target emotional intensity or guiding the user through a desired affective trajectory, such as gradual exposure in anxiety disorders or flow induction in learning contexts[1]. These capabilities make VR well suited for training, assessment, therapeutic, and entertainment applications where emotional engagement and adaptation are central.
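Closed-loop elicitation that sustains a target emotional intensity can be illustrated with a proportional controller that nudges stimulus intensity toward the target, with a per-update rate limit and hard bounds as crude safety guards. The linear toy user model, gain, and limits below are assumptions for illustration only; they say nothing about how real users respond.

```python
def adapt_intensity(intensity, estimated, target, gain=0.5, max_step=0.1, lo=0.0, hi=1.0):
    """One proportional-control update of stimulus intensity (all values in [0, 1])."""
    error = target - estimated
    step = max(-max_step, min(max_step, gain * error))  # rate limit per update
    return max(lo, min(hi, intensity + step))           # hard bounds on intensity


def toy_user(intensity):
    """Hypothetical user whose estimated arousal is 0.8 times the stimulus intensity."""
    return 0.8 * intensity


intensity = 0.0
for _ in range(50):
    intensity = adapt_intensity(intensity, toy_user(intensity), target=0.6)
print(round(intensity, 3), round(toy_user(intensity), 3))
```

The rate limit keeps intensity from jumping abruptly when the estimate is far from the target; as discussed below, calibration in practice also requires individualized protocols and often human supervision.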

4.2 Challenges

Despite these advantages, deploying VR for EE also introduces several methodological and ethical challenges. A primary issue involves the calibration of emotional intensity. Adaptive VR systems must ensure that stimuli remain effective without causing undue distress, especially in clinical or high-stakes applications. Overestimating a user’s tolerance may trigger overwhelming emotions or disengagement, whereas underestimation may lead to weak or inconsistent affective responses[26]. Achieving appropriate calibration requires careful scenario design, individualized exposure protocols, and often human supervision.

Another challenge concerns VR-induced physical effects, such as motion sickness, visual fatigue, or vestibular discomfort. These side effects can confound emotion measurements by mimicking physiological correlates of stress or anxiety. Disentangling target emotional responses from hardware-induced discomfort requires both thoughtful VR design (e.g., minimizing sensory mismatches) and robust multimodal measurement strategies that can differentiate emotional arousal from physical strain[27,28].

Finally, VR-based EE raises significant ethical and psychological safety considerations. Because VR experiences may feel highly realistic and immersive, inducing strong negative emotions (fear, stress, sadness) must be approached cautiously. Informed consent must clearly articulate the nature and intensity of potential experiences, and users must retain full control to pause or terminate the session at will. Post-experience debriefing and access to psychological support may be necessary for particularly intense scenarios. These issues are amplified when VR systems extend beyond controlled laboratory research into schools, workplaces, or therapeutic settings[29].

4.3 Summary

Overall, VR represents a significant evolution in EE methodologies, enabling immersive, interactive, and highly controllable emotional experiences that help narrow the gap between laboratory stimuli and real-world situations. However, achieving reliable and ethically responsible elicitation requires careful calibration of emotional intensity, mitigation of hardware-related effects such as cybersickness, and strict attention to user safety and informed consent. Addressing these challenges will be essential for deploying VR-based elicitation systems in clinical, educational, and large-scale research settings.

5. VR for Data Collection

Table 3 provides a structured overview of the principal opportunities and challenges associated with VR-based data collection for Affective Computing. Similar to the previous tables, the two columns are not intended to represent direct correspondences between individual items but rather to contrast enabling technological capabilities with the methodological and ethical constraints they introduce.

| Opportunities | Challenges |
|---|---|
| Scalable data generation: VR environments can be reused at low marginal cost and deployed across institutions and countries, enabling large-scale and diverse data collection. | Annotation complexity: Continuous, time-synchronized labeling of multimodal VR data is difficult; semi-automatic annotation raises issues of uncertainty, cultural variation, and model-induced bias. |
| Diverse participant inclusion: Remote and distributed data collection reduces reliance on homogeneous laboratory samples, supporting cross-cultural and cross-population emotion studies. | Privacy and consent risks: Motion, gaze, and physiological data collected in VR are highly identifying; robust governance and informed consent are necessary. |
| Automated multimodal capture: VR platforms naturally synchronize head/body motion, gaze, interaction events, and contextual data, simplifying multimodal acquisition. | Data security and storage: Large, rich datasets require secure handling, anonymization strategies, and long-term storage infrastructures. |
| Longitudinal tracking: Identical VR scenarios can be reused across sessions, enabling longitudinal studies on learning, habituation, affect regulation, or therapeutic progression. | Interpretation challenges: High-context VR data complicates emotion inference; it can be difficult to disentangle emotional signals from task difficulty or interface challenges. |
| Standardized scenario deployment: Highly reproducible virtual settings improve cross-study comparability and benchmarking. | Technical variability: Differences in headsets, tracking quality, and environmental conditions may introduce noise in remote data collection. |

VR: virtual reality.

Beyond isolated experiments, VR can function as a scalable and instrumented data collection platform for Affective Computing, enabling the creation of richer, more diverse, and more ecologically valid emotion datasets[1,17,30]. As VR hardware becomes increasingly affordable and widely distributed (particularly within emerging metaverse-like social and shared virtual environments), the potential for large-scale, multimodal, and longitudinal emotion data collection has grown substantially.

5.1 Opportunities

A first major opportunity is scalable data generation. Traditional affective datasets tend to be limited in size, diversity, and contextual richness because they rely on in-lab data acquisition, controlled recording setups, limited staff resources, and often homogeneous participant pools. In contrast, once developed, a VR environment can be reused at minimal additional cost across multiple contexts. Participants can contribute data from homes, clinics, workplaces, or distributed research centers, provided they have compatible headsets[25]. This opens the door to culturally and demographically diverse datasets and enables large-scale cross-population studies that would be prohibitively expensive or logistically impossible with traditional equipment.

Hardware availability and initial setup costs may, however, limit these benefits in some contexts[25]. Where these barriers can be overcome, VR-based collection can help move beyond the well-known over-reliance on WEIRD (Western, Educated, Industrialized, Rich, and Democratic) samples[31], enabling the study of emotion in broader populations and domains: children, older adults, clinical groups, multilingual or multicultural communities, or specialized professional populations such as first responders or industrial workers.

A further advantage lies in automated multimodal capture and longitudinal tracking. Modern VR platforms provide synchronized, fine-grained logs of head and hand motion, gaze direction, controller inputs, interaction events, and sometimes physiological signals through integrated or companion sensors[17]. These logs are accompanied by detailed contextual metadata, such as the spatial layout of the virtual room, object states, and task progress. Unlike traditional lab studies, VR systems can readily track the same participants across repeated sessions, enabling longitudinal analyses of behavioral adaptation, learning curves, emotional regulation, and therapeutic progression[26]. VR-based ER and EE may support mental health assessment, cognitive-affective monitoring, and personalized interventions, with preliminary evidence indicating potential benefits for certain disorders, though effectiveness depends on scenario design, user familiarity, and individual variability[32,33].

5.2 Challenges

The richness and scale enabled by VR-based data collection come at the cost of new methodological and technical challenges.

A central issue is annotation complexity. As VR datasets become increasingly multimodal, high-frequency, and context-dependent, the burden of generating meaningful labels grows. Continuous, time-aligned emotional annotation is difficult even with small cohorts; at large scale, it becomes a significant bottleneck. Semi-automatic labeling methods that fuse self-report, behavioral cues, and algorithmic estimators are promising[17], but they raise methodological questions: how to represent uncertainty, how to prevent label contamination when model-based predictions inform training data, and how to ensure cross-cultural and inter-rater consistency. These concerns are amplified by the dynamic and interactive nature of VR scenarios, where emotional boundaries are fluid and context-dependent.
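One way to make these concerns operational is to record where each label came from and how uncertain it is, and to keep model-generated labels out of the training set unless a human confirms them. In the sketch below, self-reports and model scores are assumed to lie on a common 0 to 1 scale, and the agreement tolerance, confidence threshold, and source names are hypothetical choices.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ProposedLabel:
    value: Optional[float]  # emotion rating on a 0-1 scale, or None if unresolved
    source: str             # "consensus", "model_provisional", or "needs_review"
    uncertainty: float      # heuristic: 0 = certain, 1 = unresolved


def propose_label(self_report, model_score, model_conf, agree_tol=0.2, min_conf=0.8):
    """Combine an optional self-report with a model estimate into a tracked label."""
    if self_report is not None:
        gap = abs(self_report - model_score)
        if gap <= agree_tol:
            return ProposedLabel(self_report, "consensus", gap)
        return ProposedLabel(None, "needs_review", 1.0)  # disagreement: ask a human
    if model_conf >= min_conf:
        return ProposedLabel(model_score, "model_provisional", 1.0 - model_conf)
    return ProposedLabel(None, "needs_review", 1.0)


def training_set(labels):
    """Keep only human-grounded labels so model outputs do not feed back into training."""
    return [lab for lab in labels if lab.source == "consensus"]


labels = [
    propose_label(0.6, 0.7, 0.5),   # self-report and model agree
    propose_label(0.2, 0.8, 0.9),   # they disagree
    propose_label(None, 0.4, 0.9),  # no self-report, confident model
]
print([lab.source for lab in labels], len(training_set(labels)))
```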

Another major challenge concerns privacy, consent, and data governance. VR systems collect continuous streams of highly sensitive information (full-body motion trajectories, gaze patterns, interaction histories, and sometimes physiological or facial data), which are uniquely identifying and difficult to anonymize[34]. Prior work shows that VR motion data alone can be used to re-identify users with high accuracy[35]. Emotion-related signals may also reveal health status, cognitive load, and personal preferences. Robust privacy-preserving pipelines are therefore essential, including clear consent processes, secure storage, tiered access control, minimization of raw identifiable data, and user control over retention and downstream usage. These issues become even more critical as VR-based data collection moves outside controlled laboratories into home or workplace environments.
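The minimization and pseudonymization steps can be sketched as follows: the session record keeps only coarse aggregates of the affective estimates, and the user identifier is replaced by a keyed hash (HMAC-SHA256) so that records can be linked across sessions without storing the raw ID. Field names and rounding precision are assumptions. Pseudonymization alone does not anonymize behavioral data; because motion data can re-identify users, raw trajectories are not retained in this sketch at all.

```python
import hashlib
import hmac
from statistics import mean, pstdev


def pseudonymize(user_id: str, secret_key: bytes) -> str:
    """Keyed hash of the user ID; the key must be stored separately from the data."""
    return hmac.new(secret_key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


def minimize_session(user_id: str, arousal_scores: list, secret_key: bytes) -> dict:
    """Build a session record containing coarse aggregates only.

    Raw per-frame signals (motion, gaze, physiology) are deliberately not included.
    """
    return {
        "pid": pseudonymize(user_id, secret_key),
        "mean_arousal": round(mean(arousal_scores), 2),
        "arousal_sd": round(pstdev(arousal_scores), 2),
        "n_windows": len(arousal_scores),
    }


record = minimize_session("participant-017", [0.2, 0.4, 0.6], secret_key=b"demo-key-only")
print(sorted(record))
```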

5.3 Summary

In summary, VR platforms offer unprecedented opportunities for large-scale, multimodal, and longitudinal emotion data collection. Their ability to capture rich behavioral telemetry within controlled yet immersive environments opens new directions for dataset creation and cross-population studies. However, these advantages also raise significant challenges related to annotation scalability, privacy protection, and data governance. Developing robust frameworks for privacy-preserving data collection, standardized annotation practices, and interoperable datasets will be critical for fully realizing the potential of VR as a data infrastructure for Affective Computing research.

6. The VEE-Loop

The VEE-Loop is a general framework for integrating emotion elicitation, recognition, and adaptation within immersive VR environments.

Before detailing the framework, we clarify the rationale for its introduction. The discussion of opportunities and challenges in VR-based emotion research (Sections 3, 4, and 5) reveals a key gap: while VR enables rich EE and ER, the real-time integration of these processes to inform adaptive systems remains underexplored. The VEE-Loop addresses this gap by providing a structured closed-loop framework that connects elicitation, recognition, and adaptation, and that is explicitly designed to handle rapid emotional transitions, multimodal signal fusion, and context-aware environment modification. This positions the VEE-Loop as a bridge between descriptive VR-based affective studies and actionable, responsive VR applications.

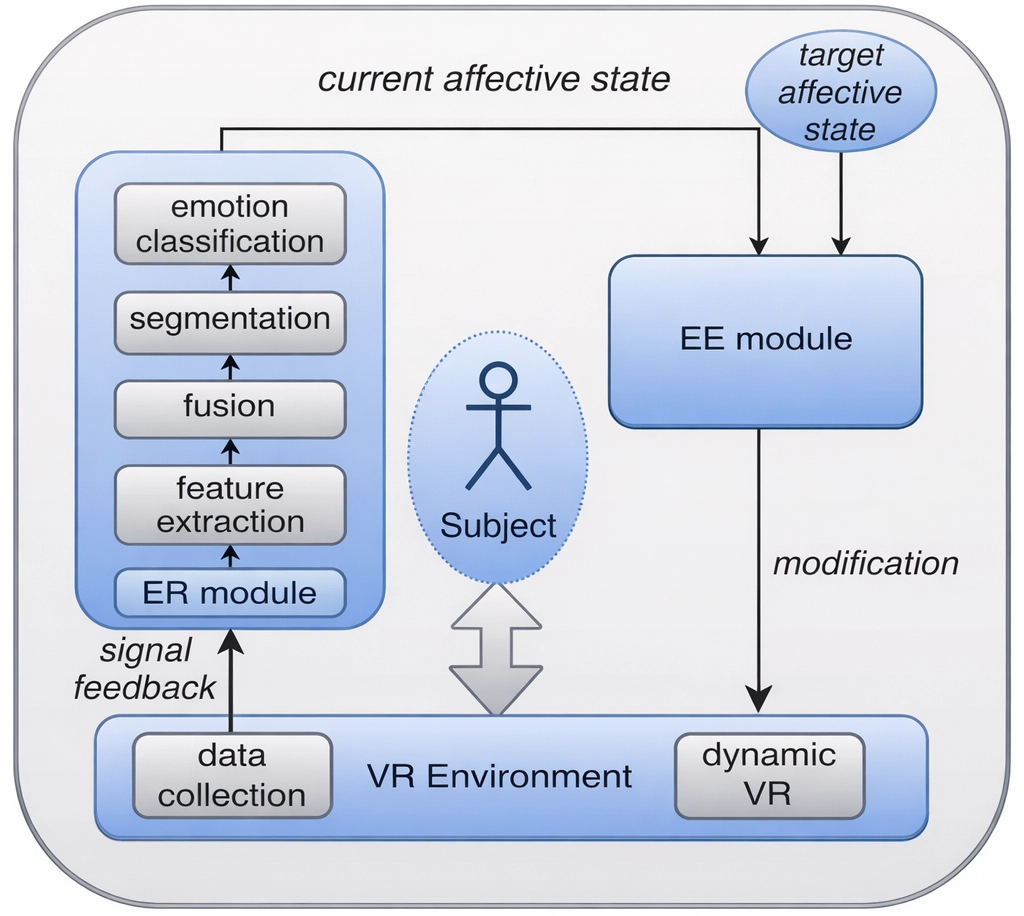

As illustrated in Figure 3, the loop connects three components: (1) an elicitation module, where VR scenarios trigger affective responses through tasks, narratives, or environmental stimuli; (2) a real-time or offline ER module that infers the user’s affective state from behavioral, physiological, and contextual signals; and (3) an adaptation module, where either the system or a human operator modifies the virtual environment according to emotional estimates and target outcomes. This creates a continuous feedback mechanism in which VR becomes responsive to the user’s emotional trajectory.

Figure 3. The VEE-Loop framework connecting EE in immersive VR, affect recognition, and adaptive virtual environment modification. VEE-Loop: Virtual Emotion, Elicitation and Recognition Loop; EE: emotion elicitation; VR: virtual reality.
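The loop in Figure 3 can be sketched as a single iteration function. This is a minimal sketch under strong assumptions: a single scalar scene parameter (`difficulty`) stands in for the elicitation module, the recognizer returns a normalized arousal estimate, and adaptation is a simple proportional rule toward a target arousal. None of these names or choices come from the VEE-Loop specification.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class LoopState:
    difficulty: float      # hypothetical elicitation parameter of the VR scene, in [0, 1]
    target_arousal: float  # desired affective outcome, normalized to [0, 1]


def vee_loop_step(state: LoopState,
                  elicit: Callable[[float], Dict[str, float]],
                  recognize: Callable[[Dict[str, float]], float],
                  gain: float = 0.5):
    """One elicitation -> recognition -> adaptation iteration."""
    signals = elicit(state.difficulty)      # (1) scene runs at this setting; sensors record
    arousal = recognize(signals)            # (2) ER module estimates arousal in [0, 1]
    error = state.target_arousal - arousal  # gap between target and estimate
    # (3) proportional adaptation: harder if under-aroused, easier if over-aroused
    new_difficulty = min(1.0, max(0.0, state.difficulty + gain * error))
    return LoopState(new_difficulty, state.target_arousal), arousal


# Usage with a simulated user whose normalized heart rate rises with difficulty.
def simulated_user(difficulty):
    return {"hr_norm": 0.2 + 0.6 * difficulty}


state = LoopState(difficulty=0.1, target_arousal=0.5)
for _ in range(50):
    state, arousal = vee_loop_step(state, simulated_user, lambda s: s["hr_norm"])
```

With this simulated user, the difficulty settles near the setting at which the estimated arousal matches the target.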

While several prior frameworks have aimed to integrate affect recognition and VR adaptation, such as the Virtual Reality Analytics Map proposed by Chitale et al.[36], these approaches primarily focus on mapping user interactions and affective responses without fully operationalizing a continuous, closed-loop integration of EE, recognition, and real-time adaptation.

In contrast, the VEE-Loop framework introduced in this article explicitly formalizes the continuous loop connecting VR-based EE, multimodal ER, and adaptive modification of the virtual environment. This supports both offline analysis (for research and iterative design) and online, real-time interventions that guide emotional states or optimize user engagement. The VEE-Loop thus provides a more comprehensive and actionable pipeline for emotion-aware VR systems, bridging sensing, interpretation, and adaptive VR delivery.

The VEE-Loop introduces several novel aspects compared to existing closed-loop affective VR frameworks. First, it explicitly formalizes the triadic connection between elicitation, recognition, and adaptation, rather than focusing solely on ER or system adaptation. Second, it is designed to operate in both offline and online modes, making it suitable for both experimental research and real-time application scenarios. Finally, it integrates multimodal and context-aware data streams in a unified loop, supporting adaptive interventions grounded in both behavioral and physiological indicators.

The VEE-Loop can operate in two modes. In offline mode, data is recorded during VR interaction, automatically annotated through ER models, and later analyzed by instructors, designers, or researchers for insight. In online mode, the ER module drives real-time adaptation, enabling immediate responses such as adjusting task difficulty, triggering supportive guidance, or alerting a supervisor when signs of cognitive overload appear. Although the timescale differs, the underlying closed-loop architecture remains the same.
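The point that both modes share the same architecture can be illustrated by reusing one recognizer in each. In the sketch below, offline mode labels a recorded session for later review, while online mode labels the live frame and immediately triggers a mapped action. The `load` signal, the 0.8 threshold, and the supervisor alert are hypothetical placeholders.

```python
from typing import Callable, Dict, Iterable, List, Tuple

Frame = Dict[str, float]  # one multimodal sample, e.g. {"load": 0.7}


def offline_annotate(recording: Iterable[Tuple[float, Frame]],
                     recognize: Callable[[Frame], str]) -> List[Tuple[float, str]]:
    """Offline mode: label every recorded frame for later review by experts."""
    return [(t, recognize(frame)) for t, frame in recording]


def online_step(frame: Frame, recognize: Callable[[Frame], str],
                actions: Dict[str, Callable[[], None]]) -> str:
    """Online mode: label the live frame and immediately run the mapped action."""
    label = recognize(frame)
    action = actions.get(label)
    if action is not None:
        action()
    return label


def recognize(frame: Frame) -> str:
    """Hypothetical rule-based recognizer on a normalized cognitive-load estimate."""
    return "overload" if frame["load"] > 0.8 else "ok"


alerts = []
labels = offline_annotate([(0.0, {"load": 0.4}), (1.0, {"load": 0.9})], recognize)
# labels == [(0.0, "ok"), (1.0, "overload")]
online_step({"load": 0.95}, recognize, {"overload": lambda: alerts.append("notify supervisor")})
# alerts == ["notify supervisor"]
```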

In the following, we illustrate how this general loop is instantiated in four application domains (industry, design, education, and health), drawing from several European research projects that have explored emotion-aware VR systems.

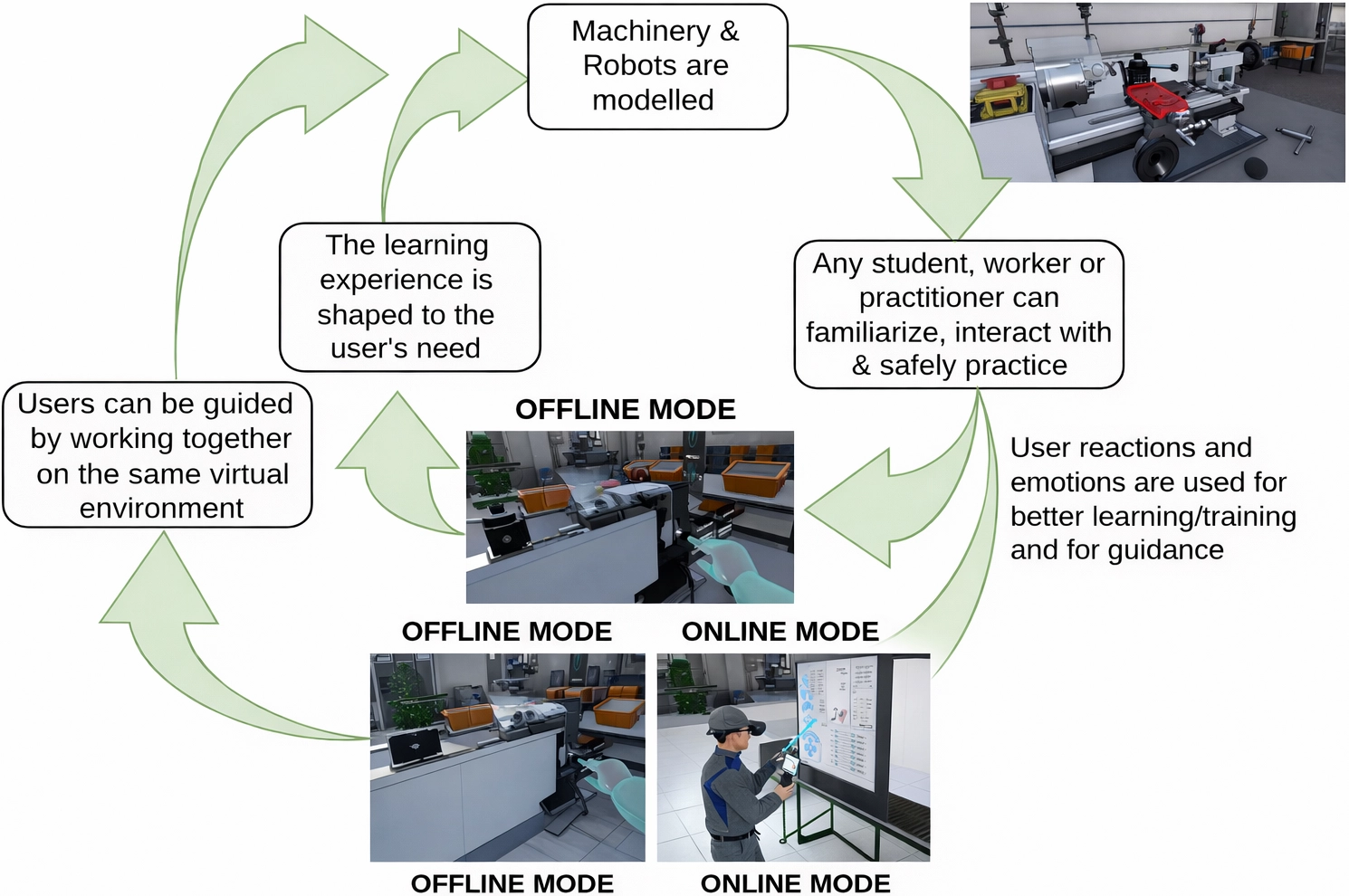

6.1 Industry: Emotion-aware training with Virtual Machina

The Virtual Machina project (https://v-machina.supsi.ch/) (EIT Manufacturing, European Union) leverages digital twins of industrial machines to deliver safe, high-fidelity training in VR. Trainees practice operating complex robotic systems, performing safety procedures, and diagnosing faults in realistic virtual factories, without the risks or costs associated with physical equipment.

Instructors and domain experts first design structured VR modules that define actions, constraints, and success conditions. During execution, trainees manipulate virtual machinery using their hands, controllers, or tracked tools. Performance feedback (capturing task completion, errors, and timing) is generated automatically.

The VEE-Loop augments this workflow with emotional insight. An ER module analyzes body movements (e.g., hesitation, abrupt motions), gaze patterns, and physiological indicators to infer emotional states such as confusion, stress, or confidence. These signals are then used in two complementary ways, as shown in Figure 4. In offline mode, instructors review emotional annotations to identify difficult or confusing steps, enabling iterative refinement of workflows, instructions, and user interfaces. In online mode, trainers or the VR system adapt the experience in real time by slowing the task, providing hints, or repeating steps when markers of frustration recur.

Figure 4. The Virtual Machina VEE-Loop with offline and online modes. VEE-Loop: Virtual Emotion, Elicitation and Recognition Loop.
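Two ingredients mentioned above can be sketched as follows, assuming tracked 3D hand positions at a fixed sampling interval. The first is a hesitation measure (the fraction of near-still samples). The second is a policy that offers a hint only when frustration markers recur within a short window, rather than reacting to a single spike. The thresholds and window sizes are hypothetical and are not taken from the Virtual Machina project.

```python
import math
from collections import deque


def hand_speeds(positions, dt):
    """Speeds (m/s) between consecutive 3D hand positions sampled every dt seconds."""
    return [math.dist(a, b) / dt for a, b in zip(positions, positions[1:])]


def hesitation_ratio(speeds, still_threshold=0.05):
    """Fraction of samples in which the hand is nearly still (threshold is hypothetical)."""
    if not speeds:
        return 0.0
    return sum(s < still_threshold for s in speeds) / len(speeds)


class HintPolicy:
    """Trigger a hint only when frustration markers recur within a sliding window."""

    def __init__(self, window=5, min_hits=3):
        self.recent = deque(maxlen=window)
        self.min_hits = min_hits

    def update(self, frustration_marker: bool) -> bool:
        self.recent.append(frustration_marker)
        return sum(self.recent) >= self.min_hits


policy = HintPolicy()
decisions = [policy.update(m) for m in [True, False, True, False, True]]
# decisions == [False, False, False, False, True]
```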

This approach aligns with recent advances in VR-based industrial training that integrate behavioral analytics and physiological monitoring to support adaptive instruction and skill acquisition[17,37]. Experimental evidence shows that such emotion-sensitive loops can detect transitions between mental states within seconds, enabling practically useful adaptive support for learners.

6.2 Design: Emotion-driven product evaluation in VR+ 4CAD

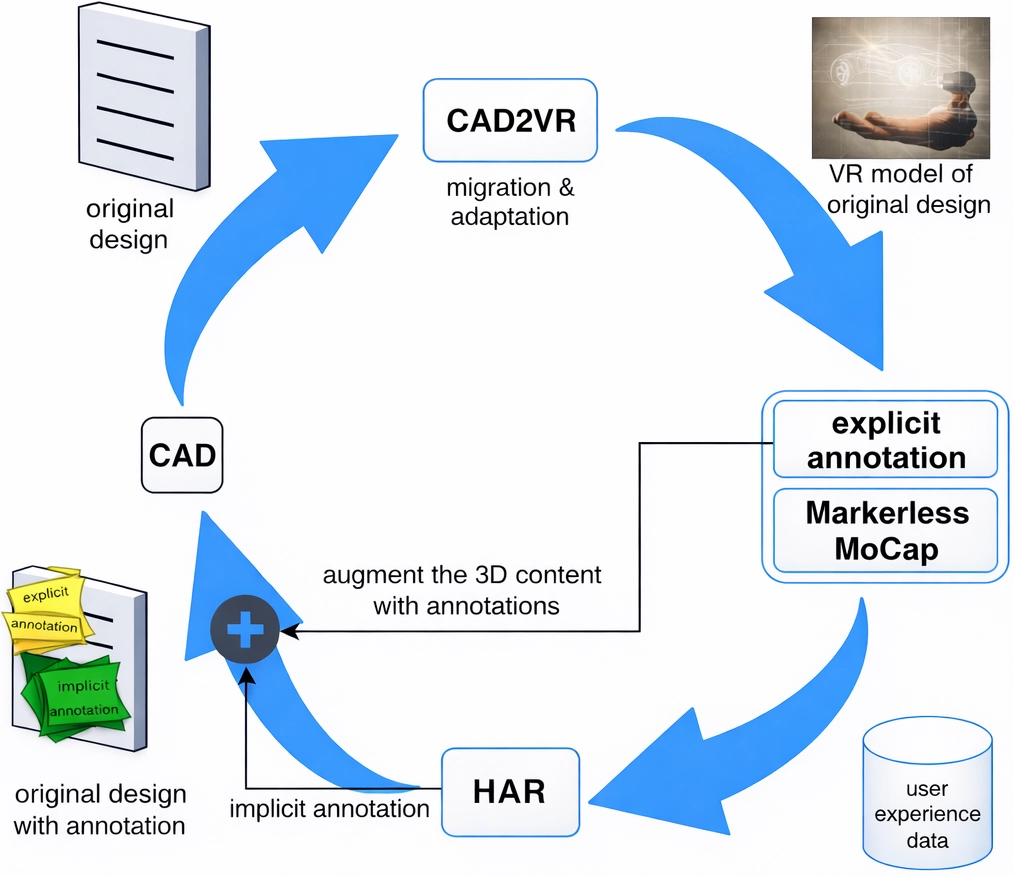

The VR+ 4CAD project (https://artanim.ch/project/vr4cad/) explores how immersive VR prototypes can enhance product evaluation by capturing users’ emotional responses during interaction with computer-aided design (CAD)-derived virtual objects. Traditional design evaluations rely on interviews or task-based usability tests, which rarely capture moment-to-moment affective fluctuations. Subtle discomfort, uncertainty, or satisfaction often goes unreported.

VR+ 4CAD transforms CAD models into interactive VR prototypes. Mechanics and designers perform maintenance tasks (e.g., removing components, inspecting connectors) in a virtual workspace. Throughout the session, an ER module monitors behavior and affect in real time, linking emotional indicators to specific manipulations or spatial configurations (Figure 5).

Figure 5. The VR+ 4CAD VEE-Loop from the original CAD to the annotated design back to CAD. VR: virtual reality; VEE-Loop: Virtual Emotion, Elicitation and Recognition Loop; CAD: computer-aided design.

In the VEE-Loop’s offline mode, the system generates an emotion report mapping affective signals to timestamps, objects, and actions. Designers use these reports to identify “emotional hotspots”: points where frustration spikes or uncertainty increases. Such hotspots often coincide with ergonomic or usability issues such as awkward grips, hard-to-reach components, or visually ambiguous cues. Designers can then refine the prototype and re-test it in the same VR scenario, supporting iterative, evidence-driven design.
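An emotion report of this kind can be sketched as an aggregation of time-stamped events by object. The sketch assumes that each logged event carries a timestamp, the manipulated object, the action, and a frustration score in [0, 1] from the ER module. The threshold, the minimum event count, and the object names are hypothetical.

```python
from collections import defaultdict
from statistics import fmean


def emotion_hotspots(events, threshold=0.6, min_events=2):
    """Aggregate frustration scores per object and return likely hotspots.

    events: iterable of (timestamp_s, object_id, action, frustration in [0, 1]).
    Returns one dict per hotspot, sorted by mean frustration (highest first).
    """
    by_object = defaultdict(list)
    for t, obj, action, score in events:
        by_object[obj].append((t, action, score))
    report = []
    for obj, rows in by_object.items():
        mean = fmean(score for _, _, score in rows)
        if len(rows) >= min_events and mean >= threshold:
            report.append({
                "object": obj,
                "mean_frustration": round(mean, 2),
                "events": len(rows),
                "first_seen_s": min(t for t, _, _ in rows),
                "actions": sorted({a for _, a, _ in rows}),
            })
    return sorted(report, key=lambda r: r["mean_frustration"], reverse=True)


events = [
    (12.0, "connector_A", "unplug", 0.8),
    (15.5, "connector_A", "unplug", 0.7),
    (30.0, "cover_B", "remove", 0.2),
    (31.0, "cover_B", "remove", 0.3),
    (40.0, "bolt_C", "unscrew", 0.9),  # single spike: below min_events, not reported
]
report = emotion_hotspots(events)
```

Requiring a minimum number of events per object is one way to keep isolated spikes out of the report; the trade-off is that genuinely problematic but rarely touched components may be missed.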

This process resonates with contemporary research on emotion-aware VR prototyping, which highlights how affective feedback can identify latent usability problems earlier and more efficiently than interview-based methods[1]. The approach generalizes to consumer products, medical devices, industrial tools, and architectural spaces.

6.3 Education: Flow-based adaptation in XR2Learn and the Magic XRoom

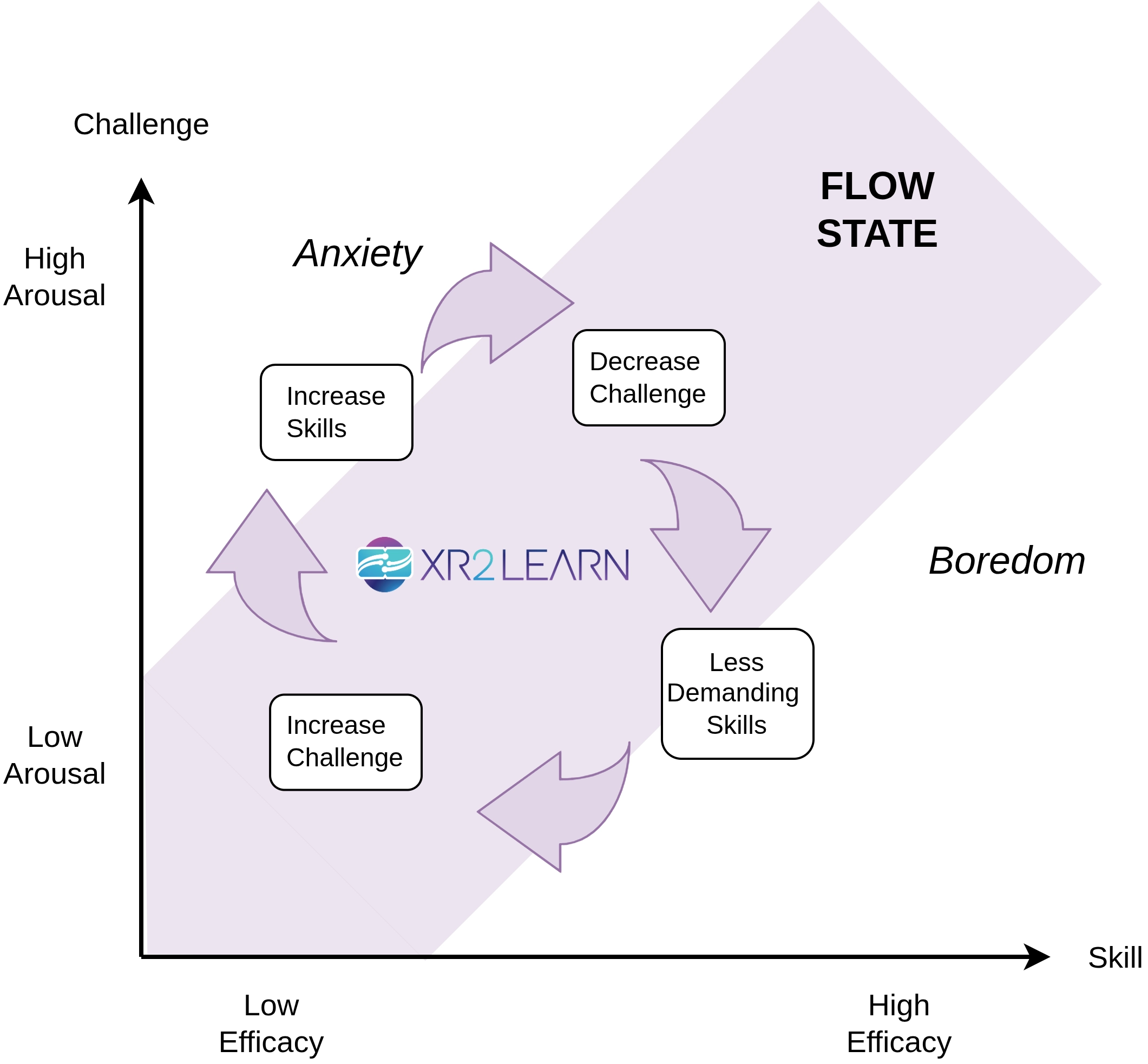

The XR2Learn (https://xr2learn.eu/) initiative and the Magic XRoom platform[30] investigate how the VEE-Loop can support emotionally adaptive learning experiences. These environments embed learners in interactive VR scenarios, ranging from problem-solving tasks to procedural training, whose difficulty and pacing can be dynamically adjusted using the online mode of the VEE-Loop.

ER combines behavioral signals (e.g., gaze dispersion, head movement dynamics), physiological measures (e.g., HR, optionally EDA), and contextual information. The adaptation logic draws on flow theory, which posits that engagement peaks when challenge matches skill. If difficulty is too high, learners experience stress or frustration; if too low, boredom emerges.

The VEE-Loop orchestrates the following process, as illustrated in Figure 6:

Figure 6. The XR2Learn VEE-Loop that keeps users within the flow by measuring their reactions. VEE-Loop: Virtual Emotion, Elicitation and Recognition Loop.

1) learners begin with a moderately challenging task;

2) the ER module infers whether they remain within the optimal engagement zone;

3) the system adjusts difficulty, pacing, hints, or environmental characteristics accordingly;

4) behavioral and affective data are logged for later analysis.
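The four steps above can be sketched as a simple rule-based controller. It assumes that the ER module provides a normalized arousal estimate and that recent task success is available for each task block. The thresholds, the discrete difficulty scale, and the reduction of flow theory to two variables are illustrative simplifications, not the XR2Learn adaptation policy.

```python
def flow_state(arousal, success_rate, low=0.35, high=0.7):
    """Coarse flow-theory state from normalized arousal and recent task success.

    Thresholds are illustrative assumptions, not calibrated values.
    """
    if arousal > high and success_rate < 0.5:
        return "stress"   # challenge exceeds skill
    if arousal < low and success_rate > 0.8:
        return "boredom"  # skill exceeds challenge
    return "flow"         # challenge roughly matches skill


def adapt_difficulty(level, state, min_level=1, max_level=10):
    """Lower difficulty under stress, raise it under boredom, otherwise keep it."""
    step = {"stress": -1, "boredom": 1, "flow": 0}[state]
    return max(min_level, min(max_level, level + step))


def run_session(observations, start_level=3):
    """Steps 1-4 over (arousal, success_rate) estimates, one pair per task block."""
    level, log = start_level, []                  # step 1: start at a moderate level
    for arousal, success in observations:
        state = flow_state(arousal, success)      # step 2: infer the engagement zone
        log.append({"level": level, "arousal": arousal,
                    "success": success, "state": state})  # step 4: log for later analysis
        level = adapt_difficulty(level, state)    # step 3: adjust difficulty
    return level, log
```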

This fine-grained adaptation supports personalized learning trajectories and more stable engagement patterns. Recent work in immersive learning environments confirms that integrating ER into adaptation policies leads to more robust flow induction, improved performance, and deeper learning outcomes[23,26].

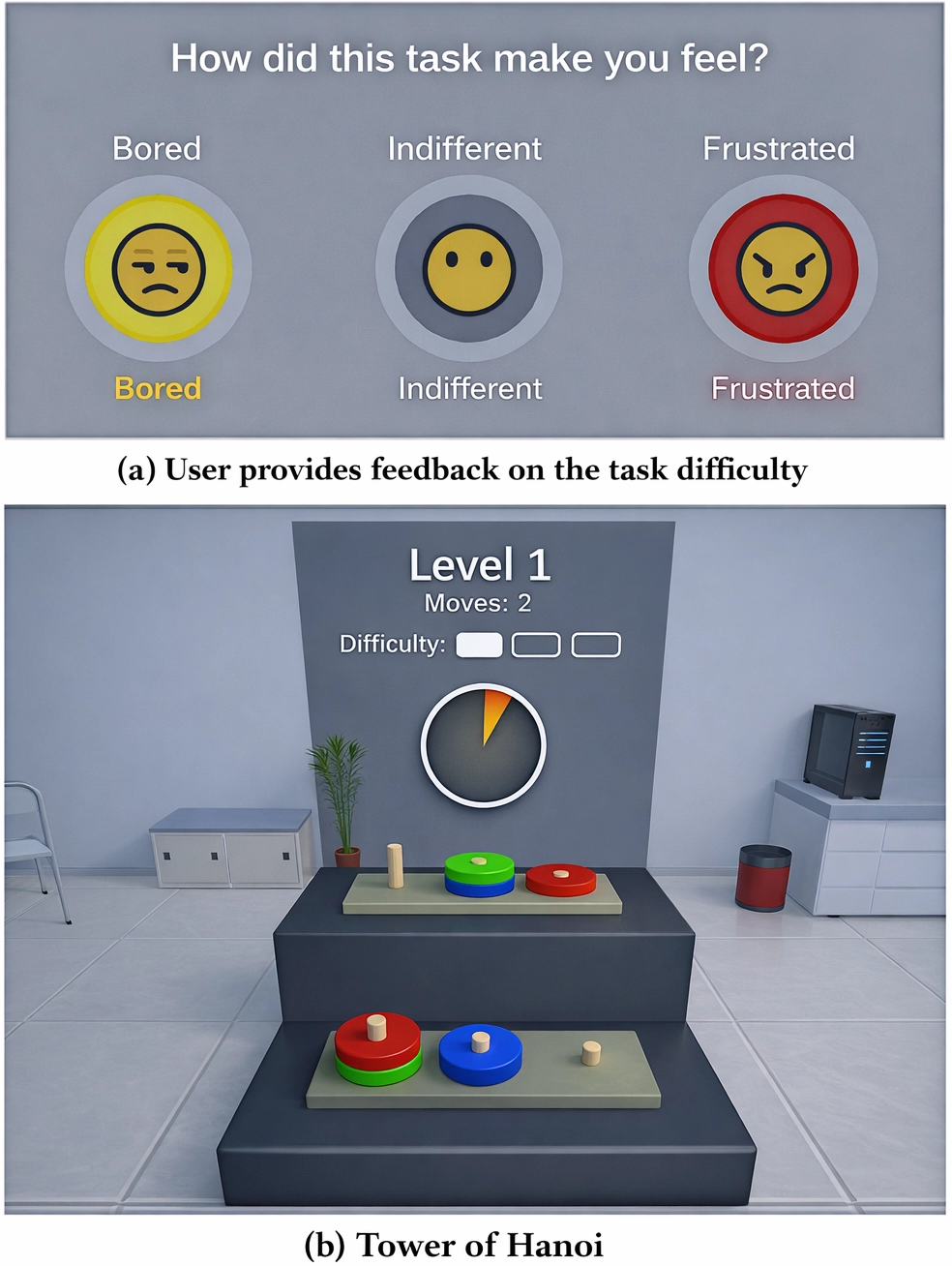

The Magic XRoom is a VR platform developed to support controlled EE and ER research[30]. It is designed to create immersive VR experiences that can systematically evoke specific emotional responses while simultaneously collecting sensor data for analysis. An example experience is presented in Figure 7.

Figure 7. Example of a Magic XRoom session using the Tower of Hanoi task, where task difficulty is dynamically adapted to the user’s performance to elicit specific emotional states.

The Magic XRoom offers a highly immersive and controllable VR environment for systematic and repeatable EE, and adapts task difficulty in real time to steer users toward targeted affective states. Crucially, it enables automatic (or semi-automatic) emotion annotation by linking task dynamics and performance metrics to expected emotional states, reducing reliance on post-hoc self-reports. Together with multimodal sensor integration, this makes it especially powerful for scalable and reliable emotion-recognition research. Further, the systematic data collected in these sessions enables large-scale studies of the relationship between emotion, cognitive load, and learning dynamics.
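Linking task dynamics to expected emotional states can be illustrated with the Tower of Hanoi task shown in Figure 7. The optimal solution for n disks takes 2^n - 1 moves, so move efficiency can be computed against that bound. The mapping from efficiency and time use to labels below is a hypothetical heuristic, not the Magic XRoom's actual annotation rule, and such expected labels would still need validation against self-reports.

```python
def hanoi_min_moves(n_disks: int) -> int:
    """Minimum number of moves needed to solve Tower of Hanoi with n disks."""
    return 2 ** n_disks - 1


def expected_label(n_disks, moves_made, seconds_used, time_limit_s):
    """Heuristic expected-emotion label for one trial (hypothetical rules and thresholds)."""
    efficiency = hanoi_min_moves(n_disks) / max(moves_made, 1)  # 1.0 means optimal play
    time_use = seconds_used / time_limit_s                       # >= 1.0 means time ran out
    if time_use >= 1.0 or efficiency < 0.4:
        return "frustration"   # overly hard, or the user is stuck
    if efficiency > 0.9 and time_use < 0.3:
        return "boredom"       # solved quickly and optimally: likely too easy
    return "engagement"
```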

6.4 Health: Emotion-aware VR for mental health assessment and support

While industry, design, and education are key application areas, the VEE-Loop is equally relevant in health contexts. VR-based ER and EE can support mental health assessment, monitor cognitive-affective states, and deliver personalized interventions. For instance, patients with anxiety or depression can engage in VR scenarios that evoke specific emotional responses, while the ER module tracks physiological and behavioral markers of stress, engagement, or emotional regulation. The adaptation module can then guide the patient through tailored coping exercises, exposure therapy, or relaxation tasks.

Recent reviews demonstrate the potential of VR for mental health: Baghaei et al. provide a scoping review on VR for supporting treatment of depression and anxiety, while Omisore et al. review extended reality applications for mental health evaluation[32,33]. Integrating the VEE-Loop in such interventions enables continuous, data-driven adaptation and more precise emotional assessment, extending the framework’s utility beyond industrial, design, and educational domains into clinical practice.

6.5 Synthesis of VR affective modalities

To provide a compact overview of VR Affective Computing modalities, Table 4 summarizes the common sensor types and behavioral modalities, the typical labeling strategies, deployment modes (offline or online), key constraints, and typical metrics. This synthesis enables readers to quickly understand methodological options and trade-offs when designing VR-based ER and EE studies.

| VR Modality | Labeling Strategy | Deployment Mode | Constraints | Typical Metrics |
|---|---|---|---|---|
| Head/Hand Motion, Posture | Behavioral coding, algorithmic inference | Offline/Online | Latency in motion capture, drift, tracking loss | Kinematics, gesture frequency, amplitude, velocity |
| Eye Gaze/Pupillometry | Automated annotation, manual mapping to AOIs | Offline/Online | Sensor occlusion, calibration drift, lighting conditions | Fixation duration, saccade velocity, pupil dilation |
| Facial Expressions/FACS | Automatic recognition, manual FACS coding | Offline/Online | Occlusion from headset, low-light noise | Action unit intensity, emotion probabilities |
| Physiological Signals (HR, EDA, EEG) | Self-report fusion, thresholding, ML models | Offline/Online | Motion artifacts, sensor misalignment, signal noise | HRV metrics, skin conductance peaks, EEG band power |
| Contextual/Event Data | Event-driven annotation, scenario logging | Offline/Online | Temporal alignment, logging frequency, context ambiguity | Event counts, durations, sequence patterns |

VR: virtual reality; AOI: area of interest; FACS: facial action coding system; HR: heart rate; EDA: electrodermal activity; EEG: electroencephalography; HRV: heart rate variability; ML: machine learning.
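Several of the typical metrics in Table 4 are straightforward to compute once signals are preprocessed. The sketch below computes two standard heart rate variability metrics from RR intervals (RMSSD and SDNN) and a deliberately simplified skin conductance response peak count. The 0.05 µS minimum rise is an assumption, and real EDA pipelines usually add filtering and tonic/phasic decomposition.

```python
import math
import statistics


def rmssd(rr_ms):
    """Root mean square of successive differences between RR intervals (ms)."""
    if len(rr_ms) < 2:
        raise ValueError("need at least two RR intervals")
    diffs = [b - a for a, b in zip(rr_ms, rr_ms[1:])]
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))


def sdnn(rr_ms):
    """Standard deviation of RR intervals (ms), using the sample standard deviation."""
    return statistics.stdev(rr_ms)


def count_scr_peaks(eda_us, min_rise=0.05):
    """Simplified SCR count: local maxima rising at least min_rise (µS) above the
    lowest value seen since the previously detected peak."""
    if len(eda_us) < 3:
        return 0
    peaks, trough = 0, eda_us[0]
    for prev, cur, nxt in zip(eda_us, eda_us[1:], eda_us[2:]):
        trough = min(trough, cur)
        if cur > prev and cur >= nxt and cur - trough >= min_rise:
            peaks += 1
            trough = cur  # search for the next response starting from this peak
    return peaks
```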

7. Privacy, Ethics, and Responsible Affective VR

Affective VR systems operate at the intersection of two highly sensitive domains: immersive behavioral tracking and emotion inference. ER relies on data that is inherently intimate and strongly identifying, such as heart-rate variability, facial micro-expressions, vocal prosody, breathing patterns, eye movements, and fine-grained motor behavior. Within VR, such signals are collected continuously and embedded in rich contextual and spatial information, significantly amplifying their sensitivity and re-identification potential[38,39]. Recent studies demonstrate that head and hand motion data alone can uniquely identify VR users with very high accuracy from short interaction sessions, even in the absence of explicit demographic data[40]. Consequently, affective VR systems can reveal not only transient emotional states but also long-term psychological traits, preferences, and vulnerabilities[34].

This sensitivity has attracted the attention of policymakers. The 2024 EU Artificial Intelligence Act prohibits AI systems that infer emotions in workplaces and educational institutions, except where they are used for medical or safety reasons, and classifies other emotion recognition systems as high-risk, subject to stringent safeguards[41]. These include informing users when emotion recognition is in use (with explicit consent where required), transparency regarding what data is collected and for what purposes, strict data minimization, traceability of automated decisions, human oversight, and demonstrable risk management procedures. For affective VR developers, this implies that compliance is not merely a technical requirement but an organizational one: systems must incorporate robust governance mechanisms for data access, retention, sharing, and auditing[42,43].

Beyond regulatory compliance, deeper normative questions arise. Who ultimately controls the emotional data produced through immersive interaction? Under what conditions is it ethically appropriate to adapt educational content, workplace evaluations, or product designs based on inferred emotions? How can we prevent the repurposing of emotional signals for manipulation, targeted persuasion, discrimination, or surveillance, particularly for vulnerable populations such as children, neurodivergent users, or individuals undergoing therapeutic interventions[44]? These concerns underscore the need for user empowerment, transparency, contestability, and participatory design practices in affective VR[45].

From a technical standpoint, a variety of privacy-enhancing technologies (PETs) can mitigate, but not eliminate, the risks associated with affective VR. Homomorphic encryption (HE) enables the evaluation of emotion models on encrypted inputs, preventing servers from accessing raw sensor streams[46]. Secure multi-party computation (MPC) allows device vendors, application developers, and cloud services to jointly compute affective inferences without revealing their private data to one another[47]. Differential privacy (DP) provides mathematically rigorous guarantees when aggregating affective datasets, reducing the likelihood of recovering individual users’ emotional responses[48]. Federated learning (FL) enables decentralized training of ER models, keeping raw emotional signals on local devices and sharing only model updates rather than raw data[49]. Complementarily, synthetic emotional data generated using diffusion models or generative adversarial networks can support model development while reducing reliance on genuine user signals[50]. Still, PETs alone cannot ensure responsible affective VR: they must be embedded within a broader socio-technical framework consisting of clear communication practices, meaningful user choice, transparent data flows, robust auditing, and mechanisms for accountability.
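To make the MPC idea concrete, the sketch below uses additive secret sharing, one common MPC building block. A private feature vector is split into random shares held by three parties, each party applies a public linear arousal model to its own shares, and only the sum of the partial results reveals the score. The sketch assumes semi-honest, non-colluding parties and a public linear model. The features, weights, and fixed-point scale are hypothetical, and production systems would rely on an established MPC framework.

```python
import secrets

PRIME = 2**61 - 1  # Mersenne prime used as the field modulus
SCALE = 1000       # fixed-point scale for real-valued features and weights


def encode(x: float) -> int:
    """Fixed-point encode a real number into the field (negatives wrap around)."""
    return round(x * SCALE) % PRIME


def decode(v: int, scale: int) -> float:
    """Map a field element back to a real number; the upper half represents negatives."""
    if v > PRIME // 2:
        v -= PRIME
    return v / scale


def share(value: int, n_parties: int) -> list:
    """Split an encoded value into additive shares that sum to it modulo PRIME."""
    parts = [secrets.randbelow(PRIME) for _ in range(n_parties - 1)]
    parts.append((value - sum(parts)) % PRIME)
    return parts


def local_score(my_shares: list, public_weights: list) -> int:
    """Each party applies the public linear model to its own shares only."""
    return sum(w * s for w, s in zip(public_weights, my_shares)) % PRIME


# Usage: three parties jointly score one private feature vector.
features = [0.8, -0.2, 0.5]                      # hypothetical normalized HR, EDA, gaze features
weights = [encode(w) for w in (0.5, 1.0, 0.4)]   # public model weights
shared = [share(encode(x), 3) for x in features]  # shared[f][p]: party p's share of feature f
partials = [local_score([s[p] for s in shared], weights) for p in range(3)]
score = decode(sum(partials) % PRIME, SCALE * SCALE)  # product of two fixed-point values
```

No single party sees the features, but the final score itself is revealed to whoever sums the partial results, so the output still needs the governance safeguards discussed above.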

Only by combining technical safeguards with thoughtful governance and ethical design can affective VR systems respect user dignity, autonomy, and psychological safety while realizing their potential for learning, training, and human-centric applications.

8. Future Research Directions

The long-term research agenda for affective VR points toward the development of responsible, privacy-preserving, and adaptive systems that can be reliably deployed in high-stakes domains such as mental health, education, workforce training, and human–robot interaction. Achieving this vision requires coordinated advances in algorithmic design, system-level engineering, and socio-technical governance frameworks. Here, we highlight three key avenues for future work.

8.1 Privacy-preserving ER

ER models have traditionally assumed access to raw, high-resolution signals such as facial expressions, body kinematics, physiological responses, and voice features. In VR, these signals are captured continuously and embedded within a rich spatial–temporal context, greatly amplifying privacy risks compared to traditional sensing modalities[51,52]. As a promising direction, future ER algorithms should compute affective inferences without exposing raw data, relying instead on privacy-preserving machine-learning frameworks.

Recent advances in HE, MPC, and trusted execution environments have demonstrated the feasibility of running inference on encrypted or hardware-isolated data, albeit with latency and accuracy trade-offs[53,54]. Integrating these techniques into VR ER pipelines remains largely unexplored. Complementary approaches, such as FL with DP, could further reduce risks by keeping affective signals on-device and ensuring that aggregated updates do not leak identifiable emotional patterns[55]. The open challenge is to maintain real-time responsiveness (crucial for adaptive VR) while offering mathematically grounded privacy guarantees. This requires benchmarking ER models under privacy constraints and establishing formal guidelines for which inferences can be safely extracted from continuous multimodal VR data.
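The FL-with-DP combination can be sketched as one server aggregation round in the style of differentially private federated averaging: each client update is clipped to a maximum L2 norm, the clipped updates are averaged, and Gaussian noise calibrated to the clipping bound is added. This is a simplified sketch. The clipping norm and noise multiplier are assumptions, and the actual (ε, δ) guarantee depends on client sampling and the number of rounds, which would have to be tracked with a privacy accountant (not shown).

```python
import math
import random


def clip(update, max_norm):
    """Scale an update so that its L2 norm is at most max_norm."""
    norm = math.sqrt(sum(u * u for u in update))
    factor = min(1.0, max_norm / norm) if norm > 0 else 1.0
    return [u * factor for u in update]


def dp_fedavg_round(global_weights, client_updates, max_norm=1.0,
                    noise_multiplier=1.0, rng=None):
    """One server round: clip each client update, average, and add Gaussian noise.

    Only model updates reach the server; raw affective signals stay on-device.
    """
    rng = rng or random.Random()
    clipped = [clip(u, max_norm) for u in client_updates]
    n = len(clipped)
    # Noise std of noise_multiplier * max_norm on the sum, i.e. divided by n on the mean.
    sigma = noise_multiplier * max_norm / n
    mean_update = [sum(col) / n + rng.gauss(0.0, sigma) for col in zip(*clipped)]
    return [w + d for w, d in zip(global_weights, mean_update)]
```

Clipping bounds how much any single client can move the model, which is what makes the added noise meaningful; larger `noise_multiplier` values strengthen privacy at the cost of slower or less accurate convergence.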

8.2 Synthetic emotion data and affective style transfer

High-quality emotion datasets remain scarce, expensive to collect, culturally narrow, and ethically sensitive[2,56]. Advances in generative modeling (including diffusion models and cross-modal style transfer) offer a compelling opportunity to produce synthetic physiological, behavioral, and movement data that preserve affective structure while reducing reliance on real users. Such synthetic data could enhance representativeness (e.g., rare emotional states, under-sampled populations), support safer data sharing, and accelerate research across institutions[57].
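As a deliberately simple stand-in for the generative models mentioned above (it is neither a diffusion model nor a GAN), the sketch below fits one Gaussian per emotion label with independent features and samples labeled synthetic feature vectors from it. It preserves only per-label means and variances, ignoring cross-feature correlations and temporal structure. The features and values are hypothetical.

```python
import random
import statistics
from collections import defaultdict


def fit_class_gaussians(samples):
    """samples: list of (label, feature_vector). Returns {label: (means, stdevs)}."""
    grouped = defaultdict(list)
    for label, x in samples:
        grouped[label].append(x)
    model = {}
    for label, rows in grouped.items():
        columns = list(zip(*rows))
        model[label] = ([statistics.fmean(c) for c in columns],
                        [statistics.pstdev(c) for c in columns])
    return model


def sample_synthetic(model, label, n, rng=None):
    """Draw n synthetic feature vectors for one emotion label (diagonal Gaussian)."""
    rng = rng or random.Random()
    means, stdevs = model[label]
    return [[rng.gauss(m, s) for m, s in zip(means, stdevs)] for _ in range(n)]


# Hypothetical [heart rate (bpm), skin conductance (µS)] samples.
data = [("stress", [90.0, 5.0]), ("stress", [100.0, 7.0]), ("stress", [95.0, 6.0]),
        ("calm", [65.0, 2.0]), ("calm", [70.0, 2.5])]
model = fit_class_gaussians(data)
synthetic_stress = sample_synthetic(model, "stress", 5000, rng=random.Random(0))
```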

However, new methodological questions emerge. From a technical standpoint, future work must determine how faithfully synthetic samples preserve emotion–signal relationships and how synthetic augmentation affects generalization in multimodal ER models. From an ethical standpoint, researchers must ensure that synthetic data do not inadvertently leak private attributes of the original dataset or reproduce harmful demographic or cultural biases[58]. Transparent reporting standards, uncertainty quantification, and systematic audits will be essential as synthetic affective datasets grow more prevalent.
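One common heuristic for the leakage question is the distance to closest record (DCR): synthetic samples that lie (almost) exactly on a real record suggest memorization. The sketch below computes DCR and flags near-copies. The tolerance is an assumption, and a low flag count is an audit signal, not a formal privacy guarantee.

```python
import math


def distance_to_closest_record(synthetic, real):
    """Euclidean distance from each synthetic sample to its nearest real sample.

    Features should be standardized first so that no single unit dominates.
    """
    return [min(math.dist(s, r) for r in real) for s in synthetic]


def flag_possible_copies(synthetic, real, tol=1e-3):
    """Indices of synthetic samples that (nearly) reproduce a real record."""
    distances = distance_to_closest_record(synthetic, real)
    return [i for i, d in enumerate(distances) if d <= tol]
```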

8.3 Generative AI for adaptive VR

The integration of advanced generative AI models (spanning 3D scene synthesis, procedural content generation, narrative generation, and multimodal diffusion models) opens new possibilities for emotion-adaptive VR. Instead of merely adjusting parameters (e.g., task difficulty or pacing), future systems may dynamically reconfigure entire environments or narratives based on users’ emotional trajectories[59,60]. This could enable richer and more personalized applications in therapeutic exposure, stress inoculation training, affective tutoring, and adaptive game-based learning.

In such a framework, designers specify high-level affective goals (e.g., “induce mild challenge while maintaining engagement”), and generative models propose environment variants, which are then refined through real-time emotional feedback. However, powerful generative tools also increase risks of unintentional affective manipulation, inconsistent experience quality, or inappropriate content generation. Future research must therefore develop guardrails, including constrained generation spaces, human-in-the-loop oversight, safety filters, and continuous monitoring of affective impact. Establishing guidelines for permissible adaptation (drawing from affective neuroscience, ethics, and human–computer interaction) will be essential for deploying generative AI responsibly in emotionally sensitive VR contexts.
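Constrained generation spaces and human-in-the-loop oversight can be sketched as a guard between a generative model and the running scene. Proposed parameters are restricted to a whitelist and clamped to agreed bounds, and the proposal is routed to a human reviewer when it changes too much at once or violates a bound. The parameter names, bounds, and review threshold are hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical permissible generation space agreed with designers or clinicians.
BOUNDS = {
    "enemy_count": (0, 5),
    "ambient_darkness": (0.0, 0.6),
    "time_pressure": (0.0, 0.8),
}
REVIEW_DELTA = 0.25  # a change larger than 25% of a parameter's range needs sign-off


@dataclass
class Decision:
    params: dict
    needs_review: bool
    violations: list = field(default_factory=list)


def guard(current: dict, proposed: dict) -> Decision:
    """Filter a generator's proposed scene parameters before they reach the user."""
    safe, violations, large_change = {}, [], False
    for name, value in proposed.items():
        if name not in BOUNDS:
            violations.append(f"unknown parameter {name!r} dropped")
            continue
        lo, hi = BOUNDS[name]
        clamped = min(hi, max(lo, value))
        if clamped != value:
            violations.append(f"{name} clamped from {value} to {clamped}")
        if name in current and abs(clamped - current[name]) / (hi - lo) > REVIEW_DELTA:
            large_change = True
        safe[name] = clamped
    # Any bound violation or abrupt change is escalated to a human reviewer.
    return Decision(safe, large_change or bool(violations), violations)
```

Clamping keeps the scene inside the agreed space even when the generator misbehaves, while escalation keeps a human in the loop for large or out-of-bounds proposals rather than silently applying them.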

9. Conclusion

VR and Affective Computing are converging toward a powerful vision: interactive systems that feel with the user and adapt accordingly. Through the lens of the VEE-Loop, we have seen how VR can enhance both ER and EE, and how this closed loop can be applied in industrial training, product design, education, and health to create more responsive, informative, and human-centric experiences.

At the same time, this vision raises deep questions about privacy, ethics, and responsibility. Emotion data is among the most intimate forms of personal information, and affective VR systems must be designed with strong safeguards, regulatory alignment, and user agency at their core. The same features that make VR a powerful laboratory for emotions also make it a potentially invasive technology if misused.

The path forward lies in combining technical innovation (privacy-preserving ER, synthetic emotion data, and generative AI for adaptive VR) with careful human-centered design. If researchers, designers, policymakers, and users manage to work together, we may end up with systems that not only understand our emotions, but also help us regulate, explore, and harness them in ways that are safe, transparent, and genuinely beneficial. In that sense, affective VR can be more than a technological curiosity: it can become a tool for fostering more empathetic, responsive, and responsible digital experiences.

Acknowledgements

Parts of the manuscript’s initial structure and outline were generated with the assistance of ChatGPT, using content derived from a previous webinar presentation. The scientific content, interpretation, and conclusions were developed entirely by the authors.

Authors contribution

Andreoletti D, Leidi T, Peternier A, Giordano S: Conceptualization, methodology, data curation, formal analysis, investigation, writing-original draft, writing-review & editing.

Conflicts of interest

Silvia Giordano is an Editorial Board Member of Empathic Computing. The other authors declare no conflicts of interest.

Ethical approval

Not applicable.

Consent to participate

Not applicable.

Consent for publication

Not applicable.

Availability of data and materials

Not applicable.

Funding

This work was partially supported by the Staatssekretariat für Bildung, Forschung und Innovation (SBFI) (Grant No. 22.00594) and Horizon Europe (Grant No. 101092851).

Copyright

© The Author(s) 2026.

References

1. Wang Y, Song W, Tao W, Liotta A, Yang D, Li X, et al. A systematic review on affective computing: Emotion models, databases, and recent advances. Inf Fusion. 2022;83:19-52.[DOI]

2. Shehu HA, Browne WN, Eisenbarth H. Emotion categorization from facial expressions: A review of datasets, methods, and research directions. Neurocomputing. 2025;624:129367.[DOI]

3. Dzardanova E, Kasapakis V. Virtual reality: A journey from vision to commodity. IEEE Ann Hist Comput. 2023;45(1):18-30.[DOI]

4. Papandrea M, Peternier A, Frei D, La Porta N, Gelsomini M, Allegri D, et al. V-cockpit: A platform for the design, testing, and validation of car infotainment systems through virtual reality. Appl Sci. 2024;14(18):8160.[DOI]

5. Klein Schaarsberg RE, van Dam L, Widdershoven GAM, Lindauer RJL, Popma A. Ethnic representation within virtual reality: A co-design study in a forensic youth care setting. BMC Digit Health. 2024;2:25.[DOI]

6. Standen B, Anderson J, Sumich A, Heym N. Effects of system- and media-driven immersive capabilities on presence and affective experience. Virtual Real. 2023;27(1):371-384.[DOI]

7. Lemmens JS, Simon M, Sumter SR. Fear and loathing in VR: The emotional and physiological effects of immersive games. Virtual Real. 2022;26(1):223-234.[DOI]

8. Linares-Vargas BGP, Cieza-Mostacero SE. Interactive virtual reality environments and emotions: A systematic review. Virtual Real. 2025;29:3.[DOI]

9. Mousavi SMH, Khaertdinov B, Jeuris P, Hortal E, Andreoletti D, Giordano S. Emotion recognition in adaptive virtual reality settings: Challenges and opportunities. In: 2023 Workshop on Advances of Mobile and Wearable Biometrics; 2023 Sep 26; Athens, Greece. Aachen: CEUR-WS.org; 2023. p. 1-20. Available from: https://ceur-ws.org/Vol-3517/paper1.pdf

10. Andreoletti D, Luceri L, Peternier A, Leidi T, Giordano S. The virtual emotion loop: Towards emotion-driven product design via virtual reality. In: 2021 16th Conference on Computer Science and Intelligence Systems (FedCSIS); 2021 Sep 02-05; Sofia, Bulgaria. Piscataway: IEEE; 2021. p. 371-378.[DOI]

11. Andreoletti D, Paoliello M, Luceri L, Leidi T, Peternier A, Giordano S. A framework for emotion-driven product design through virtual reality. In: Ziemba E, Chmielarz W, editors. Information technology for management: Business and social issues. Cham: Springer; 2022. p. 42-61.[DOI]

12. Giaretta A. Security and privacy in virtual reality: A literature survey. Virtual Real. 2025;29:10.[DOI]

13. McStay A. Emotional AI, soft biometrics and the surveillance of emotional life: An unusual consensus on privacy. Big Data Soc. 2020;7(1):2053951720904386.[DOI]

14. Fox E. Perspectives from affective science on understanding the nature of emotion. Brain Neurosci Adv. 2018;2:2398212818812628.[DOI]

15. Kisley MA, Shulkin J, Meza-Whitlatch MV, Pedler RB. Emotion beliefs: Conceptual review and compendium. Front Psychol. 2024;14:1271135.[DOI]

16. Calvo RA, D’Mello S, Gratch J, Kappas A, editors. The Oxford handbook of affective computing. Oxford: Oxford University Press; 2015.[DOI]

17. Somarathna R, Bednarz T, Mohammadi G. Virtual reality for emotion elicitation–A review. IEEE Trans Affect Comput. 2022;14(4):2626-2645.[DOI]

18. Jinan UA, Heidarikohol N, Borst CW, Billinghurst M, Jung S. A systematic review of using immersive technologies for empathic computing from 2000-2024. Empath Comput. 2025;1:202501.[DOI]

19. Cornelio P, Velasco C, Obrist M. Multisensory integration as per technological advances: A review. Front Neurosci. 2021;15:652611.[DOI]

20. Castiblanco Jimenez IA, Olivetti EC, Vezzetti E, Moos S, Celeghin A, Marcolin F. Effective affective EEG-based indicators in emotion-evoking VR environments: An evidence from machine learning. Neural Comput Applic. 2024;36(35):22245-22263.[DOI]

21. Gupta K, Lazarevic J, Pai YS, Billinghurst M. AffectivelyVR: Towards VR personalized emotion recognition. In: VRST ’20: Proceedings of the 26th ACM Symposium on Virtual Reality Software and Technology; 2020 Nov 01-04; Virtual event, Canada. New York: Association for Computing Machinery; 2020. p. 1-3.[DOI]

22. Polo EM, Iacomi F, Rey AV, Ferraris D, Paglialonga A, Barbieri R. Advancing emotion recognition with Virtual Reality: A multimodal approach using physiological signals and machine learning. Comput Biol Med. 2025;193:110310.[DOI]

23. Romero-Ramos L, González-Serna G, López-Sánchez M, González-Franco N, Valenzuela-Robles B. Emotion recognition in virtual reality learning environments: A multimodal machine learning approach. In: Martínez-Villaseñor L, Ochoa-Ruiz G, Montes Rivera M, Barrón-Estrada ML, Acosta-Mesa HG, editors. Advances in computational intelligence. MICAI 2024 International Workshops. Cham: Springer; 2025. p. 45-52.[DOI]

24. Kim MK, Kim M, Oh E, Kim SP. A review on the computational methods for emotional state estimation from the human EEG. Comput Math Meth Med. 2013;2013:573734.[DOI]

25. Warsinke M, Kojić T, Vergari M, Spang R, Voigt-Antons JN, Möller S. Comparing continuous and retrospective emotion ratings in remote VR study. In: 2024 16th International Conference on Quality of Multimedia Experience (QoMEX); 2024 Jun 18-20; Karlshamn, Sweden. Piscataway: IEEE; 2024. p. 139-145.[DOI]

26. Maksatbekova A, Argan M. Virtual reality’s dual edge: Navigating mental health benefits and addiction risks across timeframes. Curr Psychol. 2025;44(7):6469-6480.[DOI]

27. Kim H, Kim DJ, Chung WH, Park KA, Kim JDK, Kim D, et al. Clinical predictors of cybersickness in virtual reality (VR) among highly stressed people. Sci Rep. 2021;11:12139.[DOI]

28. Cebeci B, Celikcan U, Capin TK. A comprehensive study of the affective and physiological responses induced by dynamic virtual reality environments. Comput Anim Virtual Worlds. 2019;30:e1893.[DOI]

29. Lavoie R, Main K, King C, King D. Virtual experience, real consequences: The potential negative emotional consequences of virtual reality gameplay. Virtual Real. 2021;25(1):69-81.[DOI]

30. Mousavi SMH, Besenzoni M, Andreoletti D, Peternier A, Giordano S. The Magic XRoom: A flexible VR platform for controlled emotion elicitation and recognition. In: Proceedings of the 25th International Conference on Mobile Human-Computer Interaction; 2023 Sep 26-29; Athens, Greece. New York: Association for Computing Machinery; 2023. p. 1-5.[DOI]

31. Henrich J, Heine SJ, Norenzayan A. The weirdest people in the world? Behav Brain Sci. 2010;33:61-83.[DOI]

32. Baghaei N, Chitale V, Hlasnik A, Stemmet L, Liang HN, Porter R. Virtual reality for supporting the treatment of depression and anxiety: Scoping review. JMIR Ment Health. 2021;8(9):e29681.[DOI]

33. Omisore OM, Odenigbo I, Orji J, Beltran AIH, Meier S, Baghaei N, et al. Extended reality for mental health evaluation: Scoping review. JMIR Serious Games. 2024;12:e38413.[DOI]

34. Fabiano N. Affective computing and emotional data: Challenges and implications in privacy regulations, the AI act, and ethics in large language models. arXiv:2509.20153v2 [Preprint]. 2025.[DOI]

35. Wu Y, Shi C, Zhang T, Walker P, Liu J, Saxena N, et al. Privacy leakage via unrestricted motion-position sensors in the age of virtual reality: A study of snooping typed input on virtual keyboards. In: 2023 IEEE Symposium on Security and Privacy (SP); 2023 May 21-25; San Francisco, USA. Piscataway: IEEE; 2023. p. 3382-3398.[DOI]

36. Chitale V, Henry JD, Liang HN, Matthews B, Baghaei N. Virtual reality analytics map (VRAM): A conceptual framework for detecting mental disorders using virtual reality data. New Ideas Psychol. 2025;76:101127.[DOI]

37. Di Pasquale V, Cutolo P, Esposito C, Franco B, Iannone R, Miranda S. Virtual reality for training in assembly and disassembly tasks: A systematic literature review. Machines. 2024;12(8):528.[DOI]

38. Kulal S, Li Z, Tian X. Security and privacy in virtual reality: A literature review. Issues Inf Syst. 2022;23(2):185-192.[DOI]

39. Olade I, Fleming C, Liang HN. BioMove: Biometric user identification from human kinesiological movements for virtual reality systems. Sensors. 2020;20(10):2944.[DOI]

40. Nair V, Guo W, Mattern J, Wang R, O’Brien JF, Rosenberg L, et al. Unique identification of 50,000+ virtual reality users from head & hand motion data. In: 32nd USENIX Security Symposium; 2023 Aug 09-11; Anaheim, USA. Berkeley: USENIX Association; 2023. p. 895-910.[DOI]

41. Regulation of the European Parliament and of the Council Laying Down Harmonised Rules on Artificial Intelligence (EU AI Act) [Internet]. Luxembourg: Publications Office of the European Union; 2024.[DOI]

42. Kokina J, Blanchette S, Davenport TH, Pachamanova D. Challenges and opportunities for artificial intelligence in auditing: Evidence from the field. Int J Account Inf Syst. 2025;56:100734.[DOI]

43. Chesterman S. From ethics to law: Why, when, and how to regulate AI. In: Gunkel DJ, editor. Handbook on the ethics of artificial intelligence. Cheltenham: Edward Elgar Publishing; 2023. p. 113-127.[DOI]

44. Barker D, Tippireddy MKR, Farhan A, Ahmed B. Ethical considerations in emotion recognition research. Psychol Int. 2025;7(2):43.[DOI]

45. Sigfrids A, Leikas J, Salo-Pöntinen H, Koskimies E. Human-centricity in AI governance: A systemic approach. Front Artif Intell. 2023;6:976887.[DOI]

46. Podschwadt R, Takabi D, Hu P, Rafiei MH, Cai Z. A survey of deep learning architectures for privacy-preserving machine learning with fully homomorphic encryption. IEEE Access. 2022;10:117477-117500.[DOI]

47. Knott B, Venkataraman S, Hannun A, Sengupta S, Ibrahim M, van der Maaten L. CrypTen: Secure multi-party computation meets machine learning. Adv Neural Inf Process Syst. 2021;34:4961-4973.[DOI]

48. Dwork C. The promise of differential privacy: A tutorial on algorithmic techniques. In: 2011 IEEE 52nd Annual Symposium on Foundations of Computer Science; 2011 Oct 22-25; Palm Springs, USA. Los Alamitos: IEEE Computer Society; 2011. p. 1-2.[DOI]

49. Guo W, Zhuang F, Zhang X, Tong Y, Dong J. A comprehensive survey of federated transfer learning: Challenges, methods and applications. Front Comput Sci. 2024;18(6):186356.[DOI]

50. Zhu J. Synthetic data generation by diffusion models. Natl Sci Rev. 2024;11(8):nwae276.[DOI]

51. Hine E, Rezende IN, Roberts H, Wong D, Taddeo M, Floridi L. Safety and privacy in immersive extended reality: An analysis and policy recommendations. Digit Soc. 2024;3(2):33.[DOI]

52. Raja US, Al-Baghli R. Ethical concerns in contemporary virtual reality and frameworks for pursuing responsible use. Front Virtual Real. 2025;6:1451273.[DOI]

53. Boemer F, Lao Y, Cammarota R, Wierzynski C. nGraph-HE: A graph compiler for deep learning on homomorphically encrypted data. In: Proceedings of the 16th ACM International Conference on Computing Frontiers; 2019 Apr 30-May 02; Alghero, Italy. New York: Association for Computing Machinery; 2019. p. 3-13.[DOI]

54. Gilad-Bachrach R, Dowlin N, Laine K, Lauter K, Naehrig M, Wernsing J. CryptoNets: Applying neural networks to encrypted data with high throughput and accuracy. In: Proceedings of the 33rd International Conference on Machine Learning; 2016 Jun 19-24; New York, USA. New York: PMLR; 2016. p. 201-210. Available from: https://proceedings.mlr.press/v48/gilad-bachrach16.html

55. Wei K, Li J, Ding M, Ma C, Yang HH, Farokhi F, et al. Federated learning with differential privacy: Algorithms and performance analysis. IEEE Trans Inf Forensics Secur. 2020;15:3454-3469.[DOI]

56. Dhall A, Singh M, Goecke R, Gedeon T, Zeng D, Wang Y, et al. EmotiW 2023: Emotion recognition in the wild challenge. In: Proceedings of the 25th International Conference on Multimodal Interaction; 2023 Oct 09-13; Paris, France. New York: Association for Computing Machinery; 2023. p. 746-749.[DOI]

57. Wang X, Chen C, Yang F, Gong X, Zhao S. SRMER: Synthetic-to-real multimodal emotion recognition. Inf Fusion. 2026;127:103869.[DOI]

58. Hao S, Han W, Jiang T, Li Y, Wu H, Zhong C, et al. Synthetic data in AI: Challenges, applications, and ethical implications. arXiv:2401.01629v1 [Preprint]. 2024.[DOI]

59. Ning X, Zhuo Y, Wang X, Sio CID, Lee LH. When generative artificial intelligence meets extended reality: A systematic review. Int J Hum. 2025.[DOI]

60. Freiknecht J, Effelsberg W. A survey on the procedural generation of virtual worlds. Multimodal Technol Interact. 2017;1(4):27.[DOI]

Copyright

© The Author(s) 2026. This is an Open Access article licensed under a Creative Commons Attribution 4.0 International License (https://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, sharing, adaptation, distribution and reproduction in any medium or format, for any purpose, even commercially, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.